Introduction

We are finally here! Large Language Models (LLMs) are about to make the headlines and change our lives forever. Dive in!

Quick Recap:

So far, in this fascinating story of LLMs and their predecessors, we’ve covered:

The Early 1930s to Late 1960s: The Rise and Fall of Mechanical Translation from which history started.

Bonus episode about AI winters 1966 to 1988: The Story of AI Winters and What it Teaches Us Today.

From 1990 to the mid-2000s: There Would Have Been No LLMs Without This

Pre-trained language models

The impact of the Transformer model introduced in the research paper "Attention is All You Need" on natural language processing (NLP) was profound and far-reaching. It has laid the foundation for subsequent models such as BERT, GPT-2, and GPT-3, all of which have built upon and expanded the Transformer architecture (check History of LLMs #3). These advancements have resulted in significant performance improvements across various NLP tasks. Transformers’ influence has shaped the way we approach language modeling today.

GPT-1

Back in 2018, the OpenAI team (company profile) quickly adapted its language modeling research to the Transformer architecture. This led to the introduction of GPT-1, where GPT stands for Generative Pre-trained Transformer.

Image credit: original GPT-1 paper

GPT-1 was a significant milestone, as it embraced a generative, decoder-only Transformer architecture. This choice of architecture allowed the model to generate text creatively and coherently.

To train GPT-1, the team employed a hybrid approach. They first pre-trained the model in an unsupervised manner, exposing it to vast amounts of raw text data. This pre-training phase enabled the model to develop a strong understanding of the statistical patterns and structures present in natural language. Following this, the model underwent a supervised fine-tuning* phase, where it was further refined on specific tasks with labeled data. This two-step process allowed GPT-1 to leverage both the power of self-supervised learning and the guidance of human-labeled data.

*Fine-tuning refers to the process of adapting a pre-trained machine learning model to a specific task or dataset to improve its performance.

GPT-1's role as the foundation for subsequent models in the GPT series is very important, but at that moment, the researchers were still nowhere close to the success that followed.

BERT

In late 2018, Google researchers introduced BERT (Bidirectional Encoder Representations from Transformers), which brought about a paradigm shift in the field of NLP. BERT's impact was profound, as it combined multiple innovative ideas that propelled NLP performance to new heights.

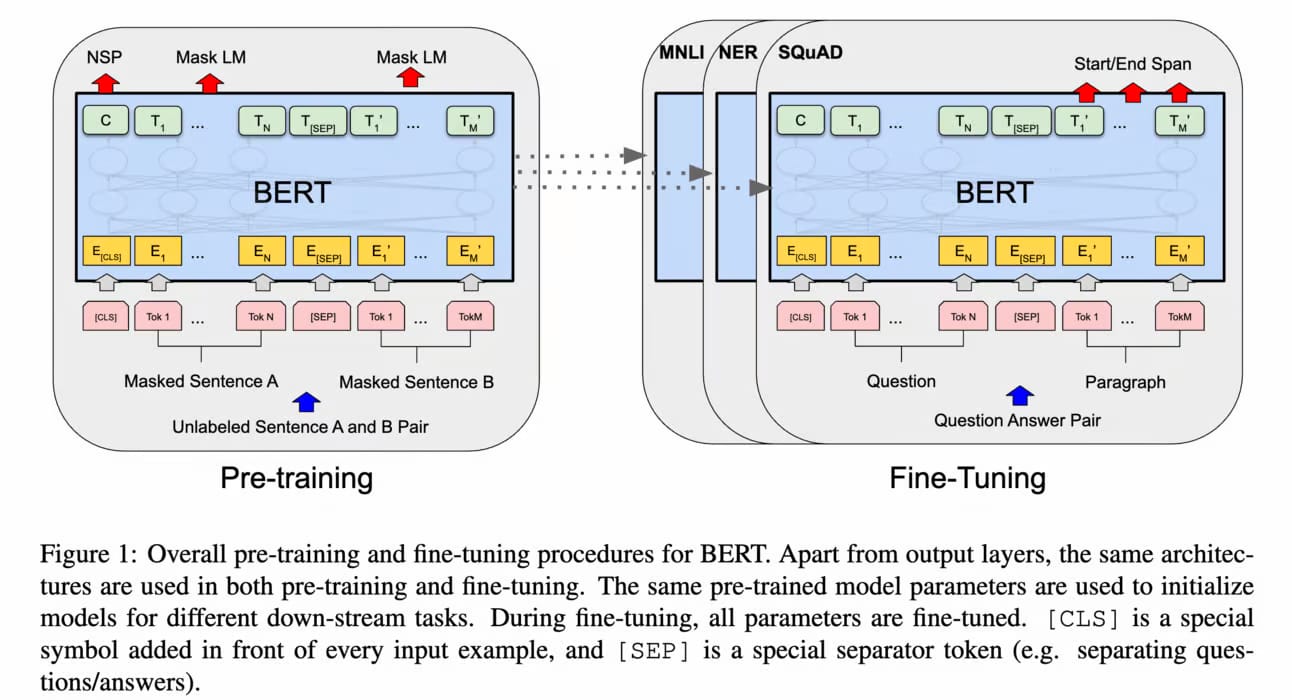

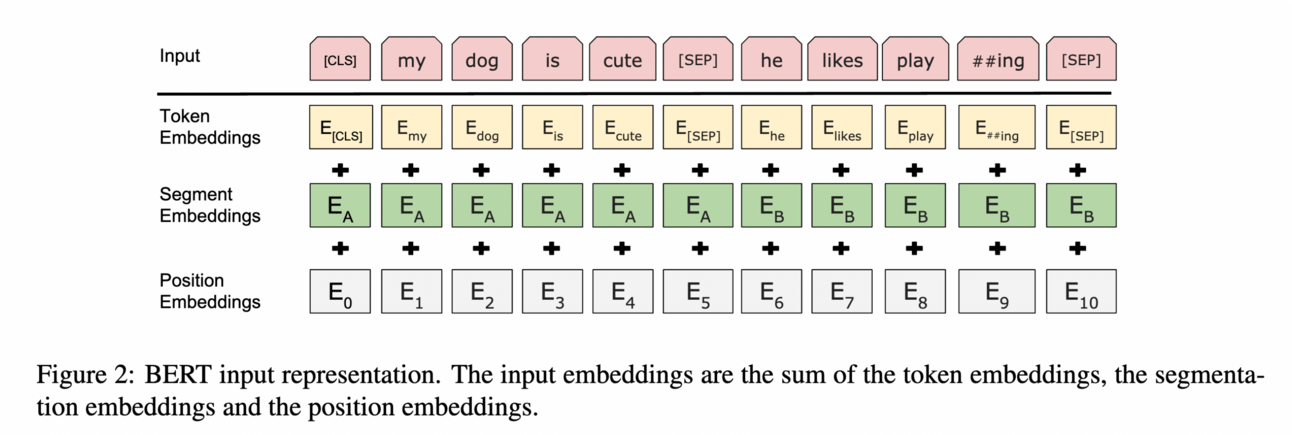

Image credit: Original BERT paper

One of the key advancements in BERT was its bidirectional nature, which allowed it to consider the context on both the left and the right of each word when making predictions. This bidirectional approach significantly improved the model's ability to understand the relationships between words and capture the nuances of language. It's fascinating how this holistic view of context enhanced BERT's performance across various NLP tasks.
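To make the contrast with a left-to-right, decoder-only model such as GPT-1 concrete, here is a minimal sketch of the two attention patterns. It is an illustration only: the function names and the four-word sentence are made up for this example, and real models apply such masks inside their attention layers rather than on Python lists.

```python
def causal_mask(n):
    """GPT-style mask: token i may attend only to positions 0..i (1 = visible)."""
    return [[1 if j <= i else 0 for j in range(n)] for i in range(n)]


def bidirectional_mask(n):
    """BERT-style mask: every token may attend to every position."""
    return [[1] * n for _ in range(n)]


def visible_context(tokens, mask, i):
    """Return the tokens that position i is allowed to look at."""
    return [tok for tok, allowed in zip(tokens, mask[i]) if allowed]


# Hypothetical example sentence, chosen only for illustration.
tokens = ["the", "cat", "sat", "down"]

# Context available to the word "cat" (position 1) under each scheme.
left_to_right = visible_context(tokens, causal_mask(len(tokens)), 1)
both_sides = visible_context(tokens, bidirectional_mask(len(tokens)), 1)

print(left_to_right)  # only the words up to and including "cat"
print(both_sides)     # the whole sentence
```

With the causal mask, "cat" sees only what precedes it; with the bidirectional mask, it also sees "sat" and "down", which is the extra context the paragraph above refers to.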

BERT's neural network architecture with consistent width is another aspect that I find intriguing. This design choice made the model highly adaptable to diverse tasks. The consistent width of the network enabled effective parameter sharing, facilitating efficient transfer learning and fine-tuning on specific downstream tasks. This flexibility is a testament to the thoughtful architectural design of BERT.

What made BERT even more impressive was its pre-training on diverse unstructured data. This pre-training phase equipped the model with a comprehensive understanding of word relationships, allowing it to capture the nuances of language usage. By learning from a vast amount of unlabeled data, BERT gained valuable insights into the statistical patterns and semantic relationships present in natural language.

Image credit: Original BERT paper

BERT's accessibility played a pivotal role in its popularity among researchers and practitioners. The pre-trained model could be easily fine-tuned for specific tasks by adding an output layer. This simplicity and effectiveness in fine-tuning made BERT a go-to choice for NLP applications. It empowered researchers and practitioners to achieve state-of-the-art results across a wide range of tasks, fueling further advancements in the field.

GPT-2

OpenAI's release of GPT-2 in 2019 was met with eager anticipation and great excitement as the world witnessed a remarkable leap from GPT-1 to a model with 1.5 billion parameters, an astounding number at the time (for comparison: today NVIDIA offers the Megatron-Turing Natural Language Generation (MT-NLG) model with 530 billion parameters).

The researchers employed a variant of the Transformer model to train GPT-2 on a diverse corpus of internet text. The model's ability to generate coherent and contextually relevant sentences was truly exceptional. Its outputs often left people questioning whether they were crafted by a human or the model itself. The versatility of GPT-2 was truly astonishing, effortlessly handling a wide range of tasks, including essay writing, question answering, language translation, and even poetic composition.

The release of GPT-2 was not without its fair share of controversy. OpenAI's initial decision to withhold the full model and release only smaller versions was undoubtedly a cautious approach driven by valid concerns about potential misuse (which can’t be said about their current intentions with newer, commercialized LLMs). There was a genuine fear that GPT-2 could be used to generate misleading news articles, facilitate online impersonation, or automate the production of abusive and spam content. These concerns sparked a necessary and vigorous debate surrounding the responsible use of AI.

Foundation Models

When BERT first made its debut, it garnered attention as the largest language model available, boasting an impressive parameter count of 340 million. However, since 2018, there has been an ongoing trend among NLP researchers to push the boundaries even further by developing increasingly larger models, guided by scaling laws.

Through their exploration, researchers have discovered that these larger Pre-trained Language Models (PLMs), commonly referred to as "large language models" (LLMs), exhibit emergent capabilities that were previously unseen in smaller models. A prime example of such emergent behavior is GPT-3, which showcases the remarkable ability to solve few-shot* tasks through in-context learning. In contrast, GPT-2 struggles to achieve the same level of effectiveness in this domain.

*Few-shot learning is a machine learning approach that enables models to learn from a small number of labeled examples to generalize to new, unseen tasks or classes.

The advent of LLMs has ignited a race within the research community, with researchers striving to develop the largest models and use bigger datasets. This pursuit is driven by the quest to better understand the impact of this change on the capabilities of these models. It represents a collective effort to uncover the potential and boundaries of LLMs. As it became possible to use LLMs as foundations for many AI applications, they also started to be called Foundation Models.

GPT-3

OpenAI introduced GPT-3 in 2020, surpassing its predecessor, GPT-2, by two orders of magnitude, with an astounding 175 billion parameters for the biggest model in this series of eight models (table 2.1). GPT-3 could generate not only coherent paragraphs but entire articles that were contextually relevant, stylistically consistent, and often indistinguishable from human-written content.

Image credit: Original GPT-3 paper

However, alongside its impressive achievements, GPT-3 also revealed the challenges inherent in LLMs. It occasionally produced biased, offensive, or nonsensical outputs, and its responses could be unpredictable. These issues underscored the significance of ongoing research into the safety and ethical implications of LLMs.

GPT-3 pioneered the concept of using LLMs for few-shot learning, achieving impressive results without the need for extensive task-specific data or parameter updating. Subsequent LLMs like GLaM, LaMDA, Gopher, and Megatron-Turing NLG have further advanced few-shot learning by scaling the model size, resulting in state-of-the-art performance on various tasks. However, there is still much to explore and understand about the capabilities that emerge with few-shot learning as researchers continue to push the boundaries of model scale.
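In practice, few-shot learning "without parameter updating" means the examples are placed directly in the prompt and the model is asked to continue the pattern. The sketch below only assembles such a prompt; the helper name, the "Input:/Output:" layout, and the translation examples are assumptions made for illustration, and no model is called.

```python
def build_few_shot_prompt(instruction, examples, query):
    """Assemble a prompt: task description, a few solved examples, one open query.

    The model's weights are never touched; everything it "learns" about the
    task comes from the text of the prompt itself.
    """
    lines = [instruction, ""]
    for source, target in examples:
        lines.append(f"Input: {source}")
        lines.append(f"Output: {target}")
        lines.append("")
    lines.append(f"Input: {query}")
    lines.append("Output:")  # left open for the model to complete
    return "\n".join(lines)


# Hypothetical task and examples, chosen only for illustration.
prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("cheese", "fromage"), ("sea otter", "loutre de mer")],
    "peppermint",
)
print(prompt)
```

Dropping the examples list to zero entries gives a zero-shot prompt, and a single entry gives a one-shot prompt, so the same layout covers all three settings.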

Pathways Language Model (PaLM)

As the scale of the model increases, the performance improves across tasks while also unlocking new capabilities. Image credit: Google Research announcement

In 2021, Google Research introduced its vision for Pathways, aiming to develop a single model with broad domain and task generalization capabilities while maintaining high efficiency. As part of this vision, in 2022 they unveiled the Pathways Language Model (PaLM), a dense decoder-only Transformer model with an impressive 540 billion parameters. PaLM underwent evaluation across numerous language understanding and generation tasks, demonstrating state-of-the-art few-shot performance across most tasks, often by significant margins.

💣 The ChatGPT moment 💥

In March 2022, OpenAI released the InstructGPT model and the paper "Training language models to follow instructions with human feedback," both of which went largely unnoticed at the time. This model is not merely an extension of GPT-3's language capabilities. It keeps the same architecture but changes how the model is trained, giving it a capacity to comply with instructions. In essence, InstructGPT blends the language prowess of GPT-3 with a new dimension of task-focused compliance, setting a new standard for the future of language model development. To make the model better at following written instructions, GPT-3 was fine-tuned with another revolutionary technique known as reinforcement learning from human feedback (RLHF). The secret sauce that powered up ChatGPT!
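One ingredient of RLHF is a reward model trained on human comparisons: labelers pick the better of two responses, and the reward model is pushed to score the preferred one higher. A minimal sketch of the pairwise loss typically used for this is shown below. The function name and the plain scalar rewards are assumptions for illustration; this is not OpenAI's code, and a real reward model is a neural network trained over many such comparisons.

```python
import math


def pairwise_preference_loss(reward_preferred, reward_rejected):
    """Loss -log(sigmoid(r_preferred - r_rejected)) for one human comparison.

    Small when the preferred response already scores higher, large when the
    reward model ranks the pair the wrong way round.
    """
    margin = reward_preferred - reward_rejected
    # Numerically stable form of log(1 + exp(-margin)).
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Hypothetical scalar scores, chosen only for illustration.
agrees_with_human = pairwise_preference_loss(2.0, 0.0)
undecided = pairwise_preference_loss(0.0, 0.0)
disagrees_with_human = pairwise_preference_loss(0.0, 2.0)

print(agrees_with_human < undecided < disagrees_with_human)
```

The trained reward model then supplies the reward signal that a reinforcement learning algorithm uses to fine-tune the language model itself.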

Here we are, the historical moment: November 2022, OpenAI releases ChatGPT into the wild without fully considering the consequences.

ChatGPT, a conversation model based on the GPT series (GPT-3.5 and GPT-4), was trained similarly to its sibling model InstructGPT but with a specific focus on optimizing for dialogue. Unlike InstructGPT, ChatGPT's training data included human-generated conversations where both the user and AI roles were played. These dialogues were combined with the InstructGPT dataset in a dialogue format for training ChatGPT.

To identify any weaknesses in the model, OpenAI conducted "red-teaming" exercises where everyone at OpenAI and external groups tried to break the model. Additionally, they had an early-access program where trusted users were given access to the model and provided feedback.

Following the frenzy, Microsoft, which had partnered with OpenAI in 2019, built a version of its Bing search engine powered by ChatGPT. Meanwhile, business leaders took a sudden interest in how this technology could improve profits.

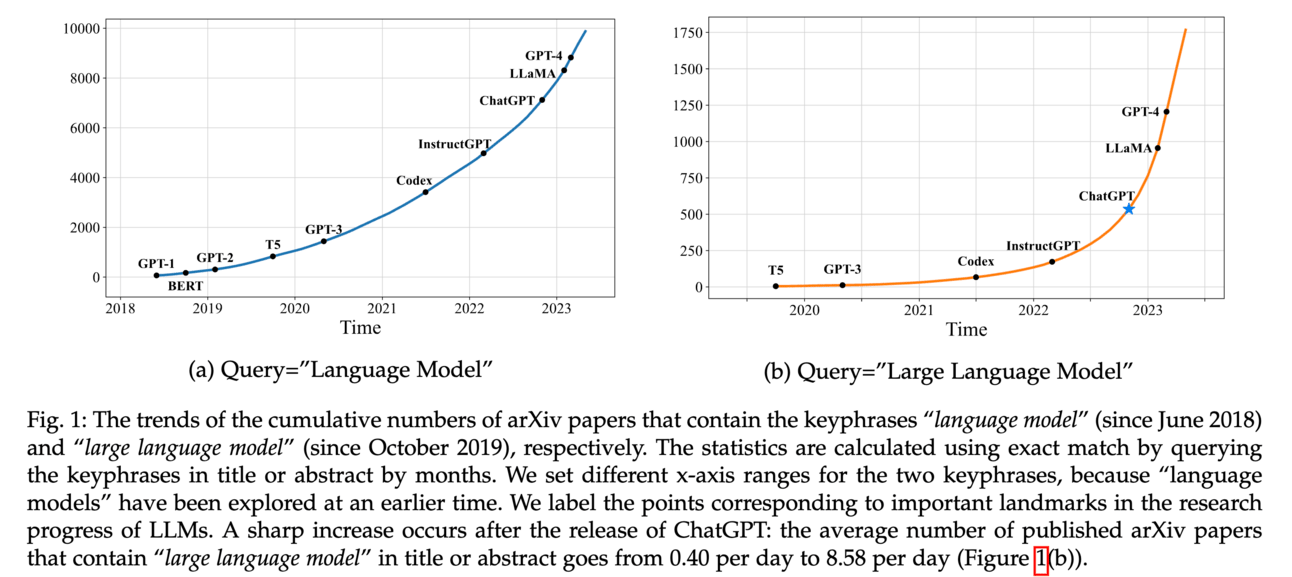

Image Credit: A Survey of Large Language Models

The emergence of ChatGPT produced a real sensation in the media and research community. According to research in this paper, a sharp increase occurred after the release of ChatGPT: the average number of published arXiv papers that contain “large language model” in the title or abstract rose from 0.40 per day to 8.58 per day.

Chasing ChatGPT

Following the ChatGPT moment, many researchers and tech companies joined the race and began to publish LLMs one after another. Stanford made a table of all current LLMs. The list is long, and we will be covering the best of them later. Here are just a few of the important LLMs that were hastily released in February 2023:

Cohere introduced a beta version of its summarization product, leveraging a customized LLM specifically designed for that task. This model allowed users to summarize up to 18-20 pages of text, surpassing the limitations of ChatGPT and GPT-3.

Google unveiled Bard, its chatbot powered by its own Language Model for Dialogue Applications (LaMDA). But shortly after release, Bard was suspended due to the error it made directly in the video presentation of the technology. Later, Google rolled out an experimental version, first available for users in the US and UK. Then, it became available worldwide.

Meta introduced Large Language Model Meta AI (LLaMA) to the scene. Unlike a direct replica of GPT-3, LLaMA aimed to provide the research community with manageable-sized large language models. It offered four different sizes, with the largest variant containing 65 billion parameters, still significantly smaller than GPT-3. Initially, LLaMA was not intended to be open-sourced, but a leak via 4chan sparked a frenzy of thousands of downloads and ignited a wave of groundbreaking innovations in LLM agents built upon its foundation.

GPT-4

In March 2023, OpenAI released GPT-4, a significant advancement that expanded the capabilities of the GPT series. GPT-4 introduced support for multimodal signals, allowing for input beyond text alone. Compared to GPT-3.5, GPT-4 demonstrated stronger performance on complex tasks and achieved notable improvements across various evaluation metrics.

Image credit: GPT-4 Technical Report

A recent study conducted qualitative tests with human-generated problems to assess the capacities of GPT-4. The results showcased its superior performance compared to previous GPT models, including ChatGPT, across a diverse range of difficult tasks. Notably, GPT-4 exhibited enhanced safety in responding to malicious or provocative queries due to a six-month iterative alignment process and the inclusion of an additional safety reward signal in the RLHF training.

OpenAI addressed concerns regarding the potential issues of LLMs by implementing intervention strategies. They introduced mechanisms such as red teaming to mitigate harmful or toxic content generation. Furthermore, GPT-4 was developed on a well-established deep learning infrastructure, incorporating improved optimization methods. A notable addition was the introduction of a mechanism called predictable scaling, which enables accurate prediction of the final model's performance using only a small fraction of the compute. These measures aimed to address issues like hallucinations, privacy concerns, and overreliance on the model.

Is the age of giant LLMs already over?

After the release of GPT-4, OpenAI CEO Sam Altman expressed his belief that the era of extremely large models was coming to an end. Altman stated that the approach of continuously increasing the size of models and feeding them more data had reached a point of diminishing returns. OpenAI was facing challenges related to the physical limitations of data centers and their capacity to build more. There is a simple lack of GPUs.

Altman's perspective was echoed by Nick Frosst, a co-founder at Cohere, who agreed with the sentiment: “There are lots of ways of making transformers way, way better and more useful, and lots of them don’t involve adding parameters to the model,” he says. Frosst says that new AI model designs, or architectures, and further tuning based on human feedback are promising directions that many researchers are already exploring.

Just recently, Meta introduced the first model based on Yann LeCun's vision for more human-like AI: the Image Joint Embedding Predictive Architecture (I-JEPA). I-JEPA is a step towards offering an alternative to the widely used transformer architecture. Will this bring us to a new era?

This is something to keep an eye on!

Concluding Thoughts

There is another question: Are we in the middle of an AI bubble that could burst any second and submerge us in AI winter? This topic both ignites our imagination and stirs a hint of trepidation. Numerous concurring factors suggest the possibility of a bubble phenomenon:

Towering stakes placed upon AI and the astounding levels of funding pouring into startups that sometimes wield AI as a mystical talisman as if uttering the sacred word guarantees an abundance of investor favor. Yet, amidst this seemingly feverish pursuit, the profitability of these companies remains a looming question mark.

Meanwhile, a frenzied interest from the public and media amplifies the sensation of AI's omnipresence, perpetuating an atmosphere of grandiose claims and exaggerated expectations. As Nick Couldry, Professor at the London School of Economics and co-author of The Costs of Connection, told Turing Post:

“Over and above the obvious benefits for Microsoft and its languishing search engine Bing, I see ChatGPT as an ambitious attempt to 'sell' the idea of LLMs and their integration into everyday work and life. I doubt very much whether this will lead to a more effective and trustworthy form of search or information retrieval. But it will certainly push further the pressure to anthropomorphize AI, and that can only distract us from the real debate we need about LLMs, AGI and knowledge production today.”

In this swirl of commotion, one can hardly escape the swift-talking wave of research, with social media feeds awash with tweets proclaiming, "I have birthed my very own chatbot—behold my GitHub!" Amidst this cacophony, it becomes increasingly difficult to discern the genuine contributions of dedicated researchers and to truly grasp the genuine transformations transpiring within the realm of AI.


To be continued…

If you liked this issue, subscribe to receive the third episode of the History of LLMs straight to your inbox. Oh, and please, share this article with your friends and colleagues. Because... To fundamentally push the research frontier forward, one needs to thoroughly understand what has been attempted in history and why current models exist in present forms.