Generative models have a rich history dating back to the 1980s and have become increasingly popular due to their prowess in unsupervised learning. This category includes a wide array of models, such as Generative Adversarial Networks (GANs), Energy-Based Models, and Variational Autoencoders (VAEs). In this Token, we want to concentrate on Transformer and Diffusion-based models, which have emerged as frontrunners in the generative AI space.

It’s time to get more technically specific. We will:

explore the origin of Transformer architecture;

discuss Decoder-Only, Encoder-Only, and Hybrids;

touch on the concepts of tokenization and parametrization;

explore diffusion-based models and understand how they work;

give an overview of Stable Diffusion, Imagen, DALL-E 2 and 3, and Midjourney.

Transformer-Based Models

Basics

At a basic level, a Transformer is a specific type of neural network. We encourage you to read The History of LLMs, where we trace the story of neural networks from the very beginning through Statistical Language Models (SLMs) to the introduction of Neural Probabilistic Language Models (NLMs), which signified a paradigm shift in natural language processing (NLP). Rather than treating words as isolated entities, as SLMs do, neural approaches encode them as vectors in a shared space to capture the relationships between them.

This brings us to our first key concept in understanding Transformers: word embeddings. They are dense vector representations of words that capture semantic and syntactic relationships, enabling machines to understand and reason about language in NLP tasks.

Word embeddings are the output of specific algorithms or models, with word2vec being one of the most prominent examples of its time. These embeddings vary in dimensionality; higher-dimensional embeddings can capture richer, more nuanced relationships between words, typically at the cost of more memory and computation.
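To make the idea of "relationships between vectors" concrete, here is a minimal sketch that compares words by cosine similarity. The three-dimensional vectors are hand-picked for illustration and are an assumption of this example; real embeddings such as those produced by word2vec are learned from data and usually have hundreds of dimensions.

```python
import math

# Toy 3-dimensional embeddings, hand-picked for illustration only.
# Learned embeddings would come from a trained model instead.
embeddings = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.7, 0.2],
    "apple": [0.1, 0.2, 0.9],
}

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

sim_royal = cosine_similarity(embeddings["king"], embeddings["queen"])
sim_fruit = cosine_similarity(embeddings["king"], embeddings["apple"])

print(f"king vs queen: {sim_royal:.3f}")
print(f"king vs apple: {sim_fruit:.3f}")
```

With these toy values, "king" lands much closer to "queen" than to "apple", which is the kind of relationship learned embeddings are meant to encode.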

How do Transformers work?

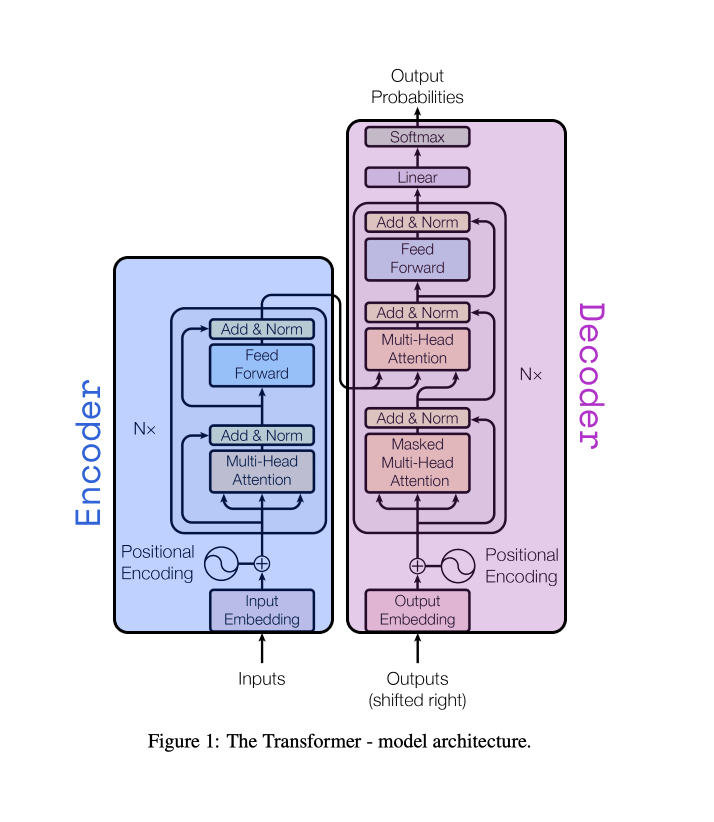

The encoder-decoder framework serves as the backbone of the Transformer architecture.

Source: original paper

According to the Deep Learning book, this framework was initially proposed by two independent research teams in 2014. Cho et al. introduced it as an "encoder-decoder," while Sutskever et al. named it the "sequence-to-sequence" architecture. It was originally developed for recurrent neural networks (RNNs) as a way to map one variable-length sequence to another, for example in translation tasks.

Encoder-decoder architecture. Source: DL book

Let’s review the roles of the encoder and decoder and how they work.

Encoder

In a Transformer model, the encoder takes a sequence of text and converts it into numerical representations known as feature vectors or feature tensors. Each vector or tensor corresponds to a specific word or token in the original text. The encoder operates in a bi-directional manner, leveraging a self-attention mechanism.

What does bi-directional mean here? It means the encoder considers words from both directions – those that come before and those that come after a given word – when creating its numerical representation.

And what about self-attention? It allows each feature vector to include context from the other words in the sequence. The self-attention mechanism computes weights by comparing Query and Key vectors, then applies these weights to the Value vectors to produce the output. In simpler terms, each feature vector isn't just about one word; it also encapsulates information from the words around it.
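The Query, Key, and Value computation can be sketched in a few lines. The example below is a simplified, single-head version of scaled dot-product attention written in plain Python. As a simplifying assumption, it feeds the same toy vectors in as queries, keys, and values; a real Transformer first passes the inputs through learned linear projections. The function names are illustrative, not taken from any library.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(queries, keys, values):
    """Single-head scaled dot-product attention over a short sequence."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Compare this token's query with every key in the sequence.
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the weighted average of all value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy input: three tokens, two features each (illustrative values).
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(x, x, x)

for i, row in enumerate(weights):
    print(f"token {i} attends with weights {[round(w, 3) for w in row]}")
```

Every row of weights is non-zero at every position, which is what makes the encoder bi-directional: each token draws on tokens both before and after it.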

Decoder

Following the encoder's lead, the decoder takes these feature vectors as its input and translates them back into natural language. Unlike the encoder, which works bi-directionally, the decoder operates in a uni-directional fashion. This means it decodes the sequence one step at a time in a single direction (left to right in the original Transformer), considering only the words on one side of the current position as it goes along.

This uni-directionality goes hand-in-hand with masked self-attention: for any given word, the decoder incorporates context from only one direction. In the standard left-to-right setup, that means only the words that precede it; the words that follow are masked out.
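A small sketch can show what the mask does in the common left-to-right case. The function below takes a square matrix of raw attention scores and lets each position see only itself and earlier positions. This is equivalent to setting the hidden scores to negative infinity before the softmax, which is how it is usually done in practice. The function name and the all-zero scores are assumptions made for illustration.

```python
import math

def causal_attention_weights(scores):
    """Apply a left-to-right (causal) mask row by row, then softmax."""
    n = len(scores)
    weights = []
    for i in range(n):
        # Position i may only look at positions 0..i.
        visible = scores[i][: i + 1]
        m = max(visible)
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # Masked (future) positions get exactly zero weight.
        row = [e / total for e in exps] + [0.0] * (n - i - 1)
        weights.append(row)
    return weights

# Uniform raw scores for a 4-token sequence (illustrative values).
scores = [[0.0] * 4 for _ in range(4)]
weights = causal_attention_weights(scores)

for i, row in enumerate(weights):
    print(f"position {i}: {[round(w, 2) for w in row]}")
```

The result is a lower-triangular weight matrix: the first position can attend only to itself, while the last position can attend to the whole sequence so far.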

Additionally, the decoder is auto-regressive, which means it factors in the portions of the sequence it has already decoded when processing subsequent words. In other words, each step in the decoding process relies not only on the feature vectors from the encoder but also on the words that the decoder has already generated.
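The auto-regressive loop itself is simple enough to sketch. In the example below, a hypothetical lookup table (`NEXT_TOKEN`) stands in for a trained decoder, and the encoder features are passed along but ignored by the toy step function. Both are assumptions made to keep the example self-contained. The point is the control flow: each new token is chosen based on everything generated so far, then appended and fed back in.

```python
# Hypothetical lookup table standing in for a trained decoder:
# it maps the tokens generated so far to the next token.
NEXT_TOKEN = {
    ("<start>",): "the",
    ("<start>", "the"): "cat",
    ("<start>", "the", "cat"): "sat",
    ("<start>", "the", "cat", "sat"): "<end>",
}

def toy_decoder_step(encoder_features, generated):
    """Stand-in for one decoder step.

    A real decoder would attend to encoder_features and to the
    generated tokens; this toy version only looks at the latter.
    """
    return NEXT_TOKEN.get(tuple(generated), "<end>")

def greedy_decode(encoder_features, max_len=10):
    """Generate one token at a time, feeding each result back in."""
    generated = ["<start>"]
    for _ in range(max_len):
        token = toy_decoder_step(encoder_features, generated)
        if token == "<end>":
            break
        generated.append(token)
    return generated[1:]  # drop the <start> marker

result = greedy_decode(encoder_features=[[0.1, 0.2]])
print(result)  # ['the', 'cat', 'sat']
```

The `max_len` cap is a common safeguard so that generation stops even if the model never emits an end-of-sequence token.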

Illustration of how the encoder and decoder work together. Blue rectangles represent the encoder, green rectangles represent the decoder, and red rectangles represent the model's output. Source: Hackmd