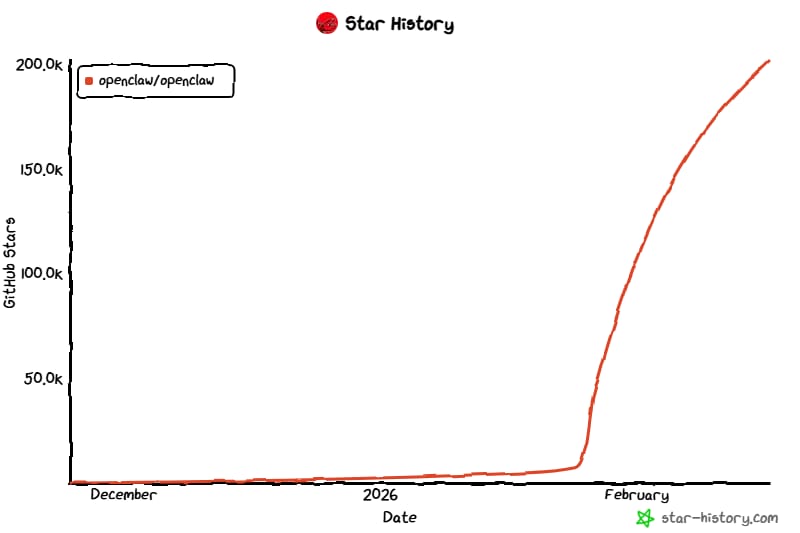

There is probably no one in the AI community who hasn’t heard of OpenClaw – a project that quickly became one of the fastest-growing repositories on GitHub, surpassed 200,000 stars, and sparked a wave of new experiments and similar local agent projects.

In a matter of days, OpenClaw’s founder, Peter Steinberger, was interviewed by Lex Fridman (over 600k views) and hired by OpenAI (who even knows for how many millions) – a sign that this was more than just another incremental agent project.

What distinguished OpenClaw was its relatively light safety scaffolding, which made the underlying capabilities more visible and easier to experiment with. In that sense, Steinberger’s trajectory loosely echoes OpenAI’s early ChatGPT moment: a decision to release something imperfect but powerful, and let the consequences reshape the conversation, and with it the whole world of personal assistants.

And what a blunder by Anthropic. Initially, the project was called Clawdbot – a literal homophone of Claude. Instead of embracing the momentum around it – by backing the project, hiring Peter, or integrating it into their coding-agent ecosystem – they sent lawyers and forced a rename. Facepalm.

In any case, today, we want to dedicate some time to unpack why OpenClaw matters and how it works under the hood, because the architecture shapes what this system can and cannot do. There are a few very clever ideas. We’ll also suggest a few lightweight alternatives and cut through the noise to understand what the OpenClaw debate is really about.

Whether you’ve played with it or not, there’s plenty to discover. Let’s go!

In today’s episode, we will cover:

The Boom

How is OpenClaw built? Architectural breakdown

SOUL.md – why it’s new

HEARTBEAT.md – scheduled agent turns

MEMORY.md

Alternatives to OpenClaw

Where to use OpenClaw?

Not without limitations

Conclusion: What does “Local Agent Boom” mean today?

Sources and further reading

What Is OpenClaw?

Quick overview: OpenClaw is an open-source framework for building a personal AI assistant that can act across messaging apps and workflows. You can deploy an agent that connects to channels like WhatsApp, Telegram, Discord, Microsoft Teams, and web chat, and have it automate tasks on your behalf. Its core advantage is personalization: the assistant can be configured to match your personality, tools, preferences, and operating context.

The Boom

OpenClaw’s rise did not come from a massive corporate launch or a carefully staged rollout. As Peter Steinberger describes it, he built the initial prototype – a WhatsApp relay connected to Anthropic’s Claude – in about an hour. That prototype showed that instead of switching between apps, you could simply message an agent and have it act on your behalf.

Peter could never have imagined that this small experiment would soon disrupt the entire AI industry.

Image Credit: OpenClaw – Personal AI Assistant GitHub

This marks a broader shift in how we interact with software. Peter Steinberger points out that many apps exist mainly to gatekeep interfaces. Once an agent has unified context – your location, sleep, calendar, preferences – it can reason and act across domains in ways no siloed app ever could.

Some apps will shrink into backend APIs; others will simply disappear. OpenClaw is the clearest embodiment of the practical, context-aware automation people have wanted for years.

The project has unleashed a surge of builder energy. California’s scene went through the roof: San Francisco’s Claw’s event drew 700+ developers, with follow-up meetups in the Bay Area and LA still selling out overnight and turning away hundreds. Vienna’s ClawCon packed 500 people who came to demo what they’d built. As Peter said: “The lobster is taking over the world.”

Beyond the tech itself, Peter’s personal story has become a major source of inspiration. After more than a decade scaling PSPDFKit, a commercial toolkit for working with PDF documents inside apps, he hit burnout and lost the joy of coding. He stepped back, traveled, and only rediscovered the fun through hands-on experiments with AI agents. Because he was financially secure, he could afford to run OpenClaw at a personal infrastructure cost of $10–20K per month — a tradeoff he was okay with because he believed deeply in the direction of personal agents. That mix of playful experimentation and genuine generosity, without the usual money-first pressure, is exactly what many builders say has been missing.

It all lands perfectly in this new era of open exploration and the renewed power of open source.

And then another big thing happened: Peter was hired by OpenAI while moving OpenClaw to an independent open-source foundation that OpenAI will continue to support. This is far more than a big company hiring talent. (Stay tuned to the end to see exactly why it matters so much.)

How is OpenClaw built? Architectural breakdown

The single biggest design decision in OpenClaw is this: it treats the agent itself as a collection of files on disk rather than as code you have to write or prompts you have to keep re-injecting.

Identity, memory, skills, heartbeat rules, tool policies – everything lives in a workspace directory as ordinary Markdown files and folders. That one shift turns the agent from a disposable script into durable, versionable, inspectable infrastructure.

Since every AI101 episode breaks down how AI actually works behind the scenes, that’s where we’ll start with OpenClaw.

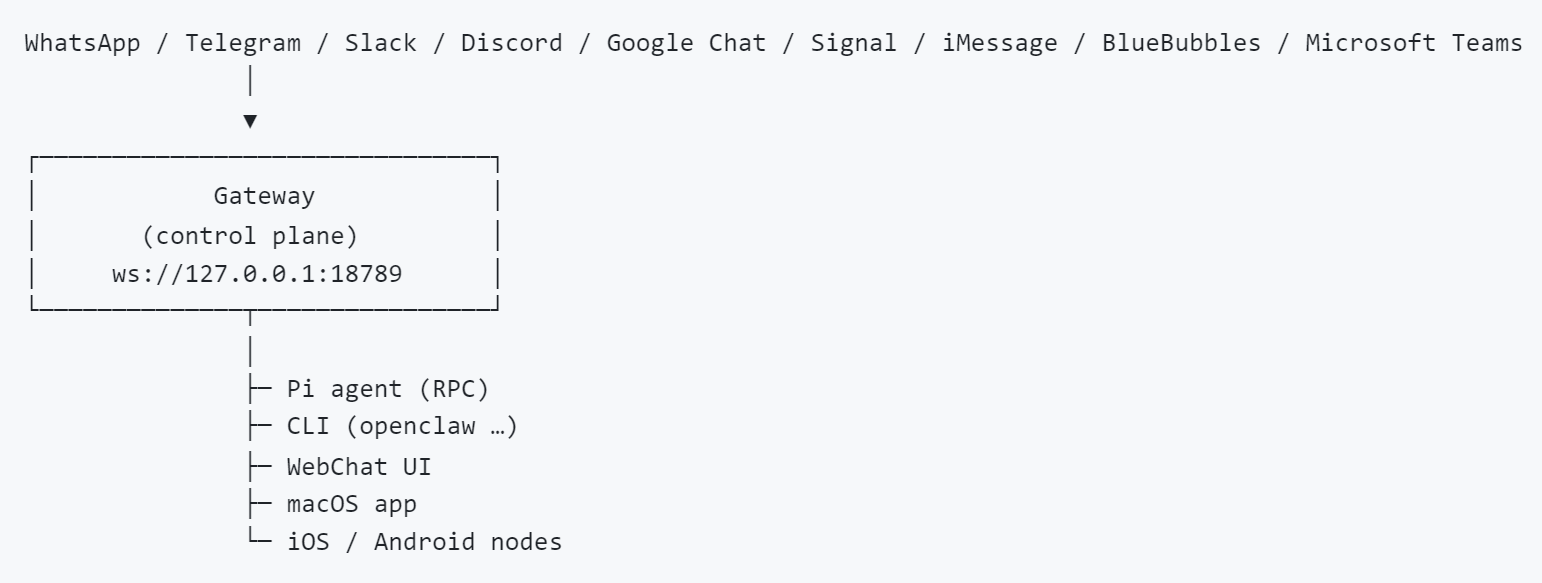

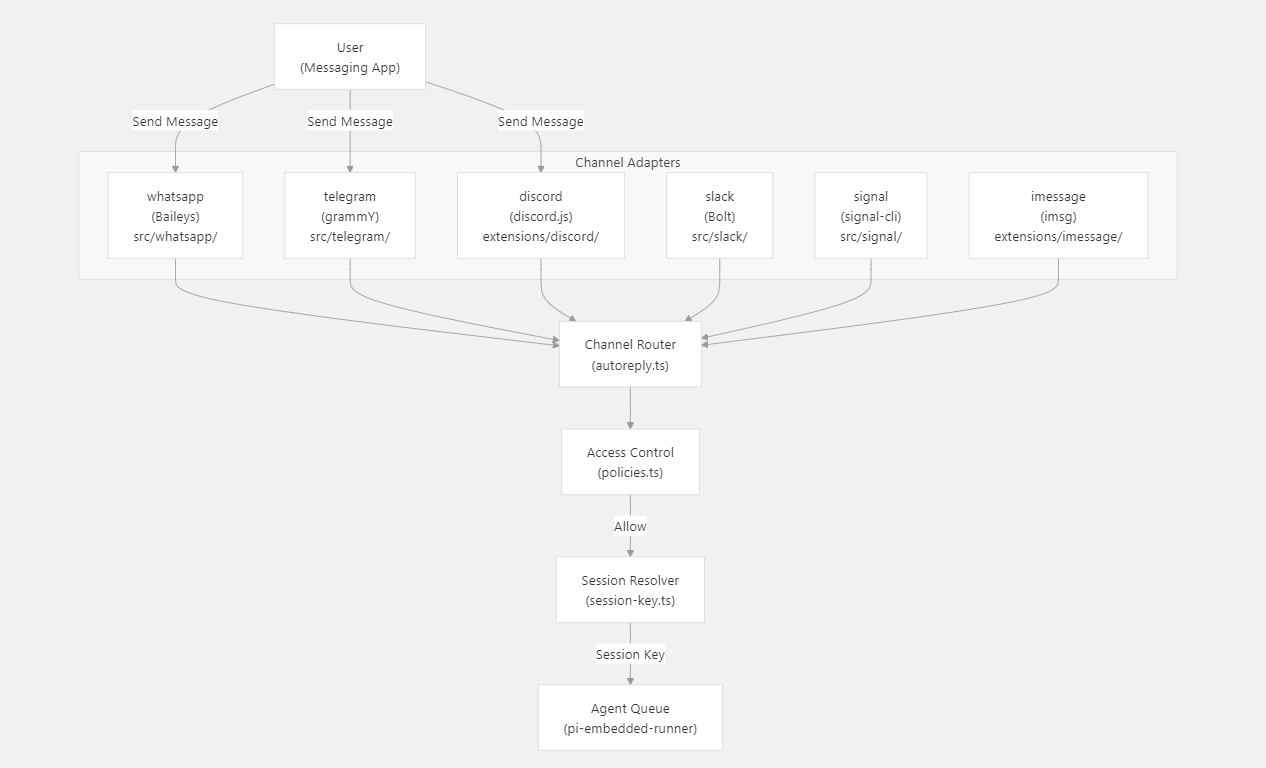

Basically, when you send a message in a messenger (like Telegram or WhatsApp), the channel adapter forwards it to OpenClaw’s central Gateway. The Gateway checks who you are, figures out which agent and session should handle it, loads the conversation history and memory, applies tool and sandbox rules, and then runs the AI agent. The agent may call tools, search memory, or execute actions, and the final response is sent back through the same messenger, all coordinated by that single Gateway process.

Image Credit: OpenClaw GitHub

That’s the simple explanation. Now let’s go one level deeper and look at how OpenClaw actually works.

What’s important to understand is that OpenClaw is not just a chatbot, even if it may appear that way at first glance. It is a self-hosted AI gateway — a control plane that sits between language models, messaging platforms, tools, devices, and long-term memory.

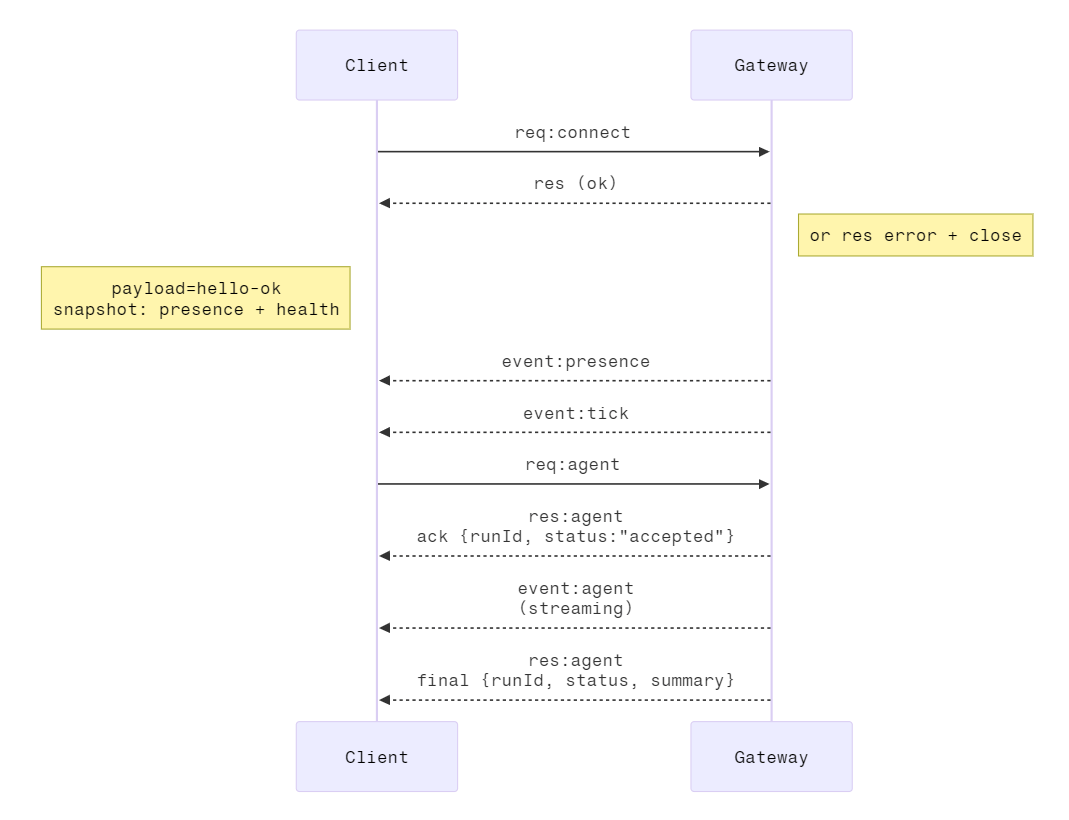

At the center of the system is a single long-running process called, you guessed it, the Gateway. Everything flows through it. The Gateway is a WebSocket server that exposes a typed JSON-RPC protocol. Clients, channels, and device nodes all connect to it. Agents are executed through it. Configuration changes are applied through it. Nothing can bypass it. So if OpenClaw is running, the Gateway is the authority over sessions, routing, tool invocation, and state.
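To make the "everything flows through one process" idea concrete, here is a minimal sketch of request dispatch in the generic JSON-RPC 2.0 shape. It leaves out the WebSocket transport entirely, and it is an illustration, not OpenClaw’s actual wire format: the `Gateway` class, the `handle_frame` helper, and the `sessions.list` method name are all assumptions made for this example.

```python
import json
from typing import Any, Callable, Dict

# A handler takes the request params and returns a JSON-serializable result.
Handler = Callable[[Dict[str, Any]], Any]


class Gateway:
    """Toy dispatcher: one method table, one entry point for every frame."""

    def __init__(self) -> None:
        self._methods: Dict[str, Handler] = {}

    def register(self, name: str, handler: Handler) -> None:
        self._methods[name] = handler

    def handle_frame(self, raw: str) -> str:
        """Decode one JSON-RPC 2.0 request and return the encoded response."""
        request = json.loads(raw)
        request_id = request.get("id")
        handler = self._methods.get(request.get("method"))
        if handler is None:
            # -32601 is the standard JSON-RPC 2.0 "Method not found" code.
            error = {"code": -32601, "message": "Method not found"}
            return json.dumps({"jsonrpc": "2.0", "id": request_id, "error": error})
        result = handler(request.get("params", {}))
        return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


gateway = Gateway()
# Hypothetical method name, used only for illustration.
gateway.register("sessions.list", lambda params: ["demo-session"])

reply = gateway.handle_frame(
    json.dumps({"jsonrpc": "2.0", "id": 1, "method": "sessions.list", "params": {}})
)
unknown = gateway.handle_frame(
    json.dumps({"jsonrpc": "2.0", "id": 2, "method": "nope"})
)
```

Because every client, channel, and node would go through the same `handle_frame` entry point, there is exactly one place to enforce authentication, routing, and policy.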

Messaging platforms such as WhatsApp, Telegram, Discord, Slack, Signal, and others connect through channel adapters. Each adapter translates a platform-specific protocol into a normalized internal envelope. When a message arrives, the channel adapter extracts identity, thread or group context, attachments, and metadata, then forwards a structured event into the Gateway. From that moment on, all messages look the same inside the system.
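A rough sketch of what that normalization could look like is below. The `Envelope` fields and the two adapter functions are assumptions made for illustration, not OpenClaw’s real types, and the input payloads are heavily simplified versions of Telegram Bot API updates and Slack message events.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional


@dataclass(frozen=True)
class Envelope:
    """Hypothetical normalized event that every adapter emits."""
    provider: str
    sender_id: str
    chat_type: str                      # "dm" or "group"
    thread_id: Optional[str]
    text: str
    attachments: List[str] = field(default_factory=list)


def from_telegram(update: Dict[str, Any]) -> Envelope:
    """Map a simplified Telegram update onto the shared envelope."""
    message = update["message"]
    thread = message.get("message_thread_id")
    return Envelope(
        provider="telegram",
        sender_id=str(message["from"]["id"]),
        chat_type="dm" if message["chat"]["type"] == "private" else "group",
        thread_id=str(thread) if thread is not None else None,
        text=message.get("text", ""),
    )


def from_slack(event: Dict[str, Any]) -> Envelope:
    """Map a simplified Slack message event onto the shared envelope."""
    return Envelope(
        provider="slack",
        sender_id=event["user"],
        chat_type="dm" if event.get("channel_type") == "im" else "group",
        thread_id=event.get("thread_ts"),
        text=event.get("text", ""),
    )


tg = from_telegram(
    {"message": {"from": {"id": 7}, "chat": {"type": "private"}, "text": "hi"}}
)
sl = from_slack(
    {"user": "U1", "channel_type": "channel", "thread_ts": "1.2", "text": "yo"}
)
```

Everything downstream of the adapters only ever sees `Envelope`, which is why adding a new channel does not require touching routing or agent code.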

Image Credit: OpenClaw DeepWiki

Then routing happens. The Gateway resolves a session key that encodes:

which agent is responsible

which provider the message came from

whether it is a direct message or group, and

which thread it belongs to

A Slack thread, a Telegram group, and a WhatsApp DM all become different session scopes. These keys determine which transcript is loaded, which memory applies, and how isolation is enforced.
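One way to picture such a key is as a colon-separated string built from those parts. The sketch below is an assumption for illustration only: the exact format, the `SessionKey` name, and the extra `chat_id` field (added here so that two different DMs on the same provider do not collide) are not taken from OpenClaw’s source.

```python
from typing import NamedTuple, Optional


class SessionKey(NamedTuple):
    """Hypothetical session scope: who handles it and where it came from."""
    agent_id: str
    provider: str
    chat_type: str                  # "dm" or "group"
    chat_id: str                    # assumed field: identifies the specific chat
    thread_id: Optional[str] = None

    def encode(self) -> str:
        parts = ["agent", self.agent_id, self.provider, self.chat_type, self.chat_id]
        if self.thread_id is not None:
            parts += ["thread", self.thread_id]
        return ":".join(parts)


slack_thread = SessionKey("main", "slack", "group", "C123", "1700000000.0001").encode()
telegram_group = SessionKey("main", "telegram", "group", "-100200300").encode()
whatsapp_dm = SessionKey("main", "whatsapp", "dm", "15551230000").encode()
```

The three keys differ, so each conversation gets its own transcript and isolation boundary, while two messages in the same Slack thread resolve to the same key and therefore the same session.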

Sessions here are persisted JSONL transcripts stored under the agent’s directory. They track history, model overrides, tool results, and runtime flags. OpenClaw can process sessions sequentially, concurrently or in a batched “collect” mode depending on routing configuration. Sessions can reset on idle time, daily schedules, or manual triggers.
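JSONL simply means one JSON object per line, which makes a transcript cheap to append to and easy to inspect with ordinary text tools. Here is a minimal sketch of that storage pattern; the helper names, the `sessions/demo.jsonl` path, and the entry fields are illustrative assumptions, not OpenClaw’s actual schema.

```python
import json
import tempfile
from pathlib import Path
from typing import Any, Dict, List


def append_entry(transcript: Path, entry: Dict[str, Any]) -> None:
    """Append one transcript entry as a single JSON line."""
    transcript.parent.mkdir(parents=True, exist_ok=True)
    with transcript.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(entry, ensure_ascii=False) + "\n")


def load_transcript(transcript: Path) -> List[Dict[str, Any]]:
    """Read the whole transcript back; a missing file is an empty history."""
    if not transcript.exists():
        return []
    with transcript.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]


with tempfile.TemporaryDirectory() as root:
    # Hypothetical layout: one .jsonl file per session under the agent directory.
    path = Path(root) / "sessions" / "demo.jsonl"
    append_entry(path, {"role": "user", "content": "What's on my calendar?"})
    append_entry(path, {"role": "tool", "name": "calendar.list", "content": "[]"})
    history = load_transcript(path)
    missing = load_transcript(Path(root) / "sessions" / "none.jsonl")
```

Append-only writes mean a crash mid-turn loses at most the last line, and resetting a session can be as simple as starting a new file.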

Agents are execution runtimes. Each agent has its own identity, workspace directory, model configuration, and tool policy. Multiple agents can coexist within a single Gateway instance without sharing memory or context unless explicitly configured to do so.

Image Credit: OpenClaw Website

The workspace is where the agent's identity becomes concrete. Files like AGENTS.md, SOUL.md, TOOLS.md, and other Markdown documents are read during system prompt construction. Skills inside the workspace/skills/ directory are automatically discovered and injected into the prompt bootstrap process. A skill is a bundle containing a SKILL.md and metadata; its documentation becomes part of the agent’s awareness. The model learns what it can do from a prompt assembled from real files on disk.
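The "agent as files" idea can be sketched in a few lines. The function below is a simplified assumption of how such a bootstrap might work: it concatenates whichever identity files exist and then appends every `SKILL.md` found under `skills/`. The section headers, the ordering, and the `build_system_prompt` name are invented for this example.

```python
import tempfile
from pathlib import Path
from typing import List

# File names come from the article; the order is an assumption.
BOOTSTRAP_FILES = ["AGENTS.md", "SOUL.md", "TOOLS.md"]


def build_system_prompt(workspace: Path) -> str:
    """Assemble a prompt from whatever Markdown files the workspace contains."""
    sections: List[str] = []
    for name in BOOTSTRAP_FILES:
        path = workspace / name
        if path.is_file():
            body = path.read_text(encoding="utf-8").strip()
            sections.append(f"## {name}\n{body}")
    skills_dir = workspace / "skills"
    if skills_dir.is_dir():
        # Sorted so the prompt is identical across runs for the same files.
        for skill_doc in sorted(skills_dir.glob("*/SKILL.md")):
            body = skill_doc.read_text(encoding="utf-8").strip()
            sections.append(f"## skill: {skill_doc.parent.name}\n{body}")
    return "\n\n".join(sections)


with tempfile.TemporaryDirectory() as root:
    workspace = Path(root)
    (workspace / "SOUL.md").write_text("Be concise and kind.\n", encoding="utf-8")
    (workspace / "skills" / "weather").mkdir(parents=True)
    (workspace / "skills" / "weather" / "SKILL.md").write_text(
        "Fetch a forecast.\n", encoding="utf-8"
    )
    prompt = build_system_prompt(workspace)
```

In this sketch, dropping a new folder with a `SKILL.md` into `skills/` changes what the agent knows on the next prompt build, with no code change, and the whole workspace can be versioned with Git.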

In general, OpenClaw is built on the idea that agents should continuously acquire and use structured skills to expand what they can actually do.

Tool execution is filtered through a layered policy chain:

Global allow and deny rules are applied first.

Provider-specific restrictions come next.

Agent-level overrides refine them further.

Group-specific overrides can narrow them again.

And finally, sandbox restrictions determine what survives.

Tool groups expand into concrete capabilities, and the resulting tool registry is constructed deterministically before the agent runs. By default, tools run on the Gateway host. But OpenClaw can sandbox tool execution inside Docker containers with configurable isolation scopes: per session, per agent, or shared. Workspace access can be read-only, read-write, or disabled. Execution can also be routed to device nodes like companion apps running on macOS, iOS, or Android that expose capabilities like camera access, notifications, shell commands, or system queries. In that case, the Gateway moves from a local process runner role to a coordinator for distributed execution.
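The layered filtering described above can be sketched as a fold over an ordered list of policies. This is a simplified model under stated assumptions: each layer may carry an optional allow list (which narrows the set) and a deny list (which always removes), and the group names such as `group:fs` and tool names such as `exec` are placeholders rather than OpenClaw’s documented identifiers.

```python
from dataclasses import dataclass, field
from typing import Dict, FrozenSet, Iterable, List, Optional, Set

# Hypothetical tool groups that expand into concrete tools.
TOOL_GROUPS: Dict[str, FrozenSet[str]] = {
    "group:fs": frozenset({"read", "write"}),
    "group:runtime": frozenset({"exec"}),
}


@dataclass
class Policy:
    name: str
    allow: Optional[List[str]] = None          # None means "no opinion"
    deny: List[str] = field(default_factory=list)


def expand(names: Iterable[str]) -> Set[str]:
    """Replace group names with their members; plain tool names pass through."""
    tools: Set[str] = set()
    for name in names:
        tools |= TOOL_GROUPS.get(name, frozenset({name}))
    return tools


def resolve_tools(available: Iterable[str], chain: List[Policy]) -> List[str]:
    """Apply each layer in order; later layers can only narrow the set."""
    surviving = expand(available)
    for policy in chain:
        if policy.allow is not None:
            surviving &= expand(policy.allow)
        surviving -= expand(policy.deny)
    return sorted(surviving)


chain = [
    Policy("global", deny=["browser"]),
    Policy("provider", allow=["group:fs", "group:runtime", "web_search"]),
    Policy("agent", deny=["write"]),
    Policy("group"),                            # no extra restrictions here
    Policy("sandbox", deny=["group:runtime"]),
]
tools = resolve_tools(["group:fs", "group:runtime", "web_search", "browser"], chain)
```

With this chain, only `read` and `web_search` survive. Because the result depends only on the inputs and the order of the layers, the same configuration always yields the same registry, which is what makes the final tool set auditable before the agent runs.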

SOUL.md – Why It’s New
