Quick answer: What is Gemma 4?

Gemma 4 is Google DeepMind’s new open model family designed around intelligence per parameter and practical local deployment, not just raw model size. The lineup spans small edge-focused models (E2B, E4B) and larger local-reasoning models (26B A4B, 31B), combining long context, multimodality, structured outputs, function calling, and efficient attention patterns that make strong agent workflows possible on real hardware.

Key concepts in this article

Gemma 4: Google DeepMind’s new open model family for local and edge deployment.

Intelligence per parameter: getting more capability from smaller active compute and tighter memory budgets.

E2B / E4B: edge-optimized Gemma 4 variants for phones, laptops, and embedded systems.

26B A4B: a sparse Mixture-of-Experts model with about 4B active parameters at inference.

31B dense: the largest Gemma 4 variant for stronger local reasoning and coding workloads.

Local + global attention: Gemma 4 alternates cheap sliding-window attention with periodic full-context synchronization.

Grouped Query Attention (GQA): a memory-saving attention design that reduces KV-cache cost.

Multimodal pipeline: Gemma 4 supports text and images across the family, with audio support in the smaller models.

Why OpenClaw users care: local inference, Apache 2.0 licensing, long context, tool-use features, and lower dependence on paid APIs.

On April 2, 2026, Google DeepMind released Gemma 4, a family of four models that expands the open stack with sizes from 2B to 31B parameters. Gemma has always been a good model. But when it first launched, there was no use case like OpenClaw or other open-source agents, the way there is now. So we decided to cover it in the context of OpenClaw to make this guide more practical.

One thing is super interesting about Gemma 4. It is pushing a different axis of progress: intelligence per parameter and per unit of compute. Previously, the focus for many open models was to achieve maximum capability at a given scale. Now it has shifted to the hardware possibilities. The same core ideas, such as sparse activation, efficient attention, and multimodal processing, are expressed differently depending on whether the target is a phone, a laptop GPU, or a high-end accelerator. This means that DeepMind makes high-level intelligence accessible across the entire hardware spectrum, betting on adoption among ordinary users and local developers.

That’s why the Gemma 4 release quickly set off a wave of adoption in OpenClaw, becoming the new default local candidate to try first.

Today we explain how Gemma 4 models get frontier-level performance out of much smaller effective compute budgets through architectural choices, and what makes them appealing enough for users to switch to (or at least try) in OpenClaw. Here is your guide to one of the most capable models your hardware can sustain.

In today’s episode:

What is Gemma 4 notable for?

How Gemma 4 Works: Architecture Is Everything

Attention Mix: Local + Global

Five Special Optimizations for Global Attention

The vision pipeline

E4B and E2B Model Specifics

Why are many OpenClaw users now switching to it?

Why Some Users are Pushing Back

Conclusion

Sources and further reading

What is Gemma 4 notable for?

Gemma 4 is presented as a family of open models built from the same research and technology stack as Gemini 3. But the more interesting point is what Google is optimizing for: intelligence per parameter and per unit of compute. In practice, that means pushing more reasoning, coding, and multimodal capability into models that can run on smaller hardware budgets.

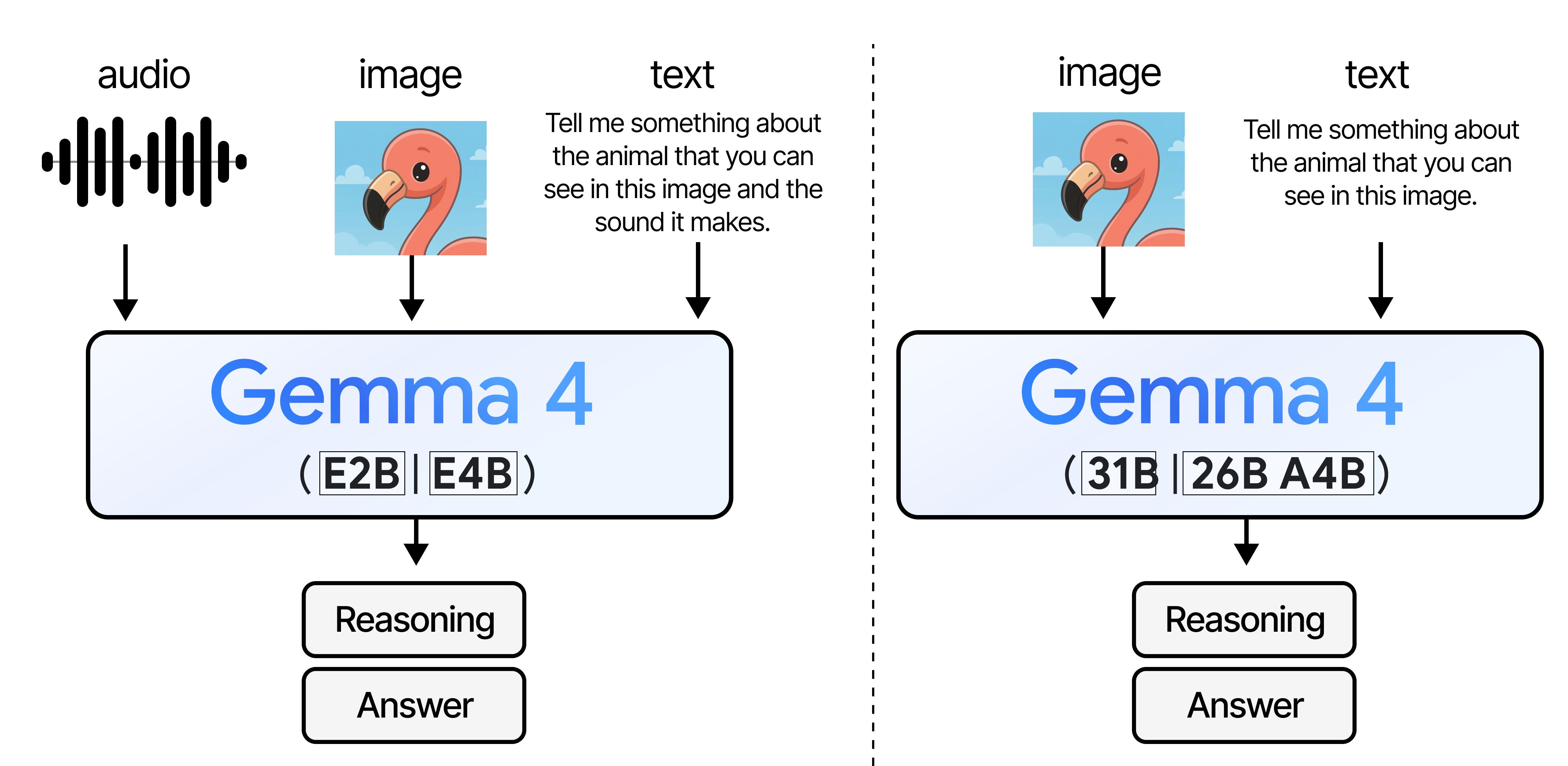

It is a deployment-aware family structured around distinct hardware targets and inference budgets and divided into two groups:

E2B (effective 2B) and E4B (effective 4B)

These models are designed for edge devices, providing near-zero latency, low memory usage, and battery efficiency. They are multimodal (vision + audio) and run fully offline on devices like phones, Raspberry Pi, Jetson boards, or small embedded systems.

26B A4B (Mixture-of-Experts) and 31B (dense)

These two variants are designed for local frontier-level reasoning performance. In BF16 precision format, both fit within a single 80GB H100’s memory budget (about 48 GB for 26B A4B and 58.3 GB for 31B), and lower-bit quantized versions can run on smaller local GPUs, turning a workstation into your local AI server. They can process images but don’t process audio data.

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst
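As a rough sanity check on the memory figures above, weight memory is approximately the parameter count times the bytes per parameter. The sketch below uses nominal counts of 26B and 31B parameters (an assumption, since exact counts differ slightly) and ignores the KV cache and activations.

```python
# Rough weight-memory estimate: parameters x bits per parameter.
# Nominal parameter counts are assumptions; KV cache and activations are ignored.

GIB = 1024 ** 3

def weight_memory_gib(num_params: float, bits_per_param: int) -> float:
    """Return the approximate size of the weights in GiB."""
    return num_params * bits_per_param / 8 / GIB

for name, params in [("26B A4B", 26e9), ("31B", 31e9)]:
    for bits in (16, 8, 4):
        print(f"{name:8s} {bits:2d}-bit: {weight_memory_gib(params, bits):5.1f} GiB")
```

With these nominal counts, the 16-bit estimates land close to the quoted figures, and 4-bit weights take roughly a quarter of that, which is why quantized versions fit on smaller local GPUs.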

Gemma 4, in line with today’s demands, is structured around agentic workflows: the models support native function calling, structured JSON outputs, and system-level instruction handling. This turns the new Gemma 4 into a general-purpose reasoning engine with multimodal capabilities by default and support for more than 140 languages.
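To make "function calling with structured JSON" concrete, here is a minimal sketch of the application side: the model emits a JSON object naming a tool, and your code parses it and runs the matching function. The tool name and the JSON shape are hypothetical and chosen for illustration; they are not Gemma 4's actual output format.

```python
import json

# Hypothetical tool registry; the tool name and JSON shape below are
# illustrative assumptions, not Gemma 4's actual function-calling format.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"

TOOLS = {"get_weather": get_weather}

def dispatch(model_output: str) -> str:
    """Parse a structured JSON tool call and run the matching function."""
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))

# A structured output the model might emit in place of free text.
print(dispatch('{"name": "get_weather", "arguments": {"city": "Zurich"}}'))
```

Rejecting unknown tool names explicitly matters in agent loops, where a malformed call should fail loudly rather than be silently ignored.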

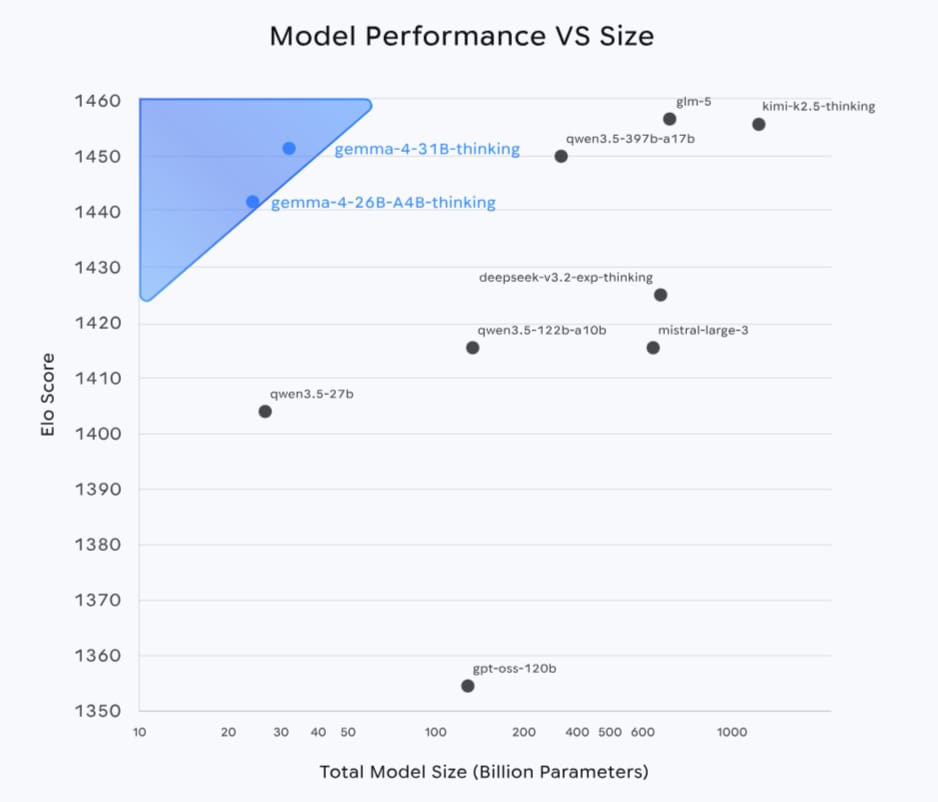

Gemma 4 is rather competitive:

31B model → #3 open model globally on Arena AI leaderboard

26B model → #6 open model

Image Credit: Gemma 4 model page

Even the smaller models remain competitive for their size: E4B reaches ~52% on coding tasks and E2B still handles basic reasoning and multimodal tasks.

This validates the core idea of this release: you can get near-frontier performance without scaling model size proportionally, and this shows up clearly in how the models are designed.

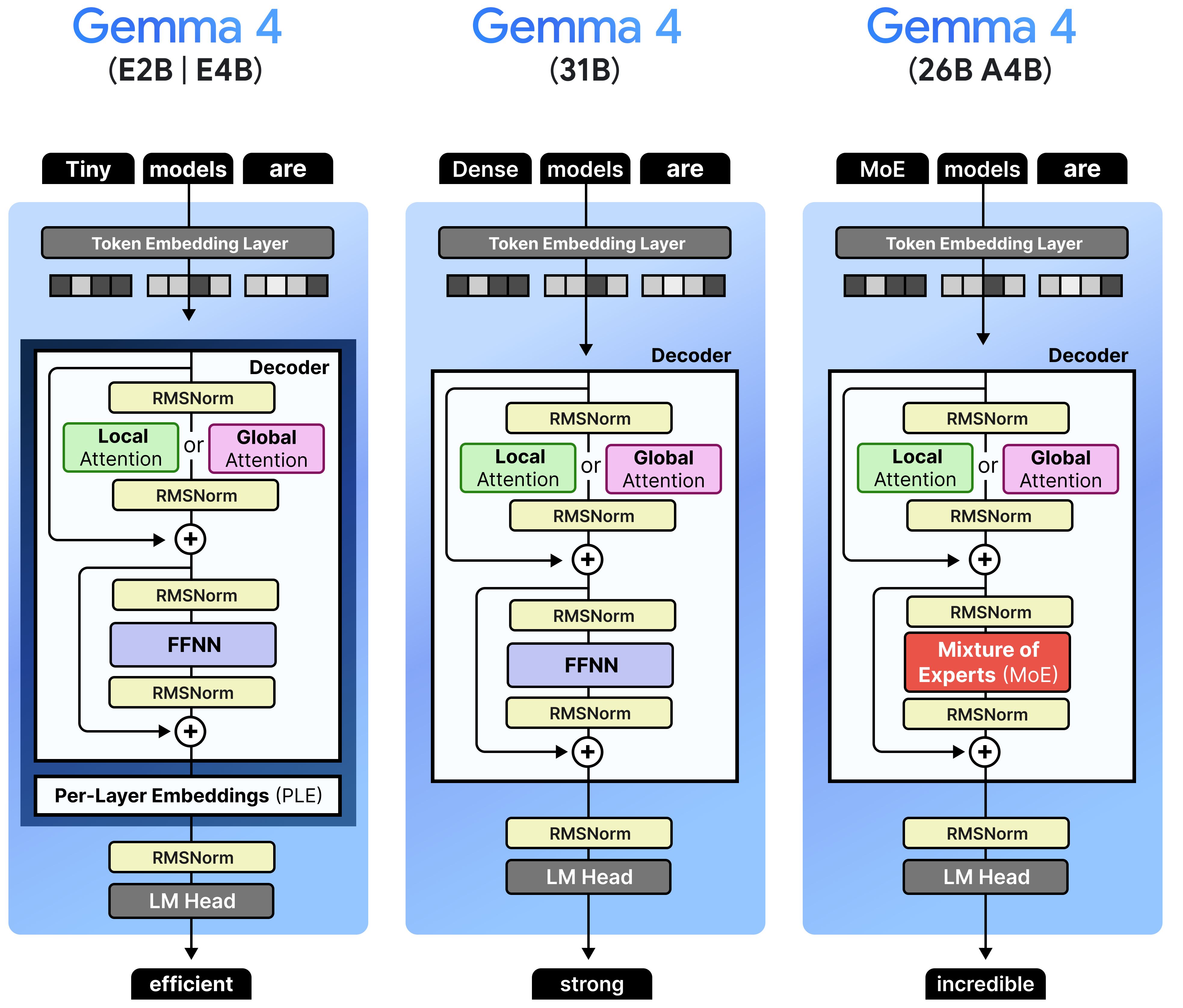

How Gemma 4 Works: Architecture Is Everything

If we go deeper into Gemma 4’s architecture, we’ll see the following structure.

The two larger family members have distinct architectures:

The 31B model is a dense Transformer, meaning that all parameters are active for every token. Compared to its predecessor, it has fewer layers, but larger hidden dimensions to improve parallelism and throughput without changing the overall structure much.

The 26B A4B model introduces sparsity through a Mixture-of-Experts (MoE) architecture with 26 billion total parameters, of which only 3.8B are active during inference (this is what A4B stands for: ~4B active parameters). In practice, this means you get something that behaves closer to a large 26B model in terms of capability, while paying a compute cost closer to that of a much smaller one. In this Gemma variant, a routing mechanism activates only 8 of the 128 experts for each token, along with a shared expert that is always used.
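The routing idea can be sketched in a few lines. The expert count (128), the top-8 selection, and the always-on shared expert come from the description above; everything else (toy vector size, scaling "experts", softmax over the selected scores) is an assumption for illustration and not the model's actual router.

```python
import math
import random

NUM_EXPERTS, TOP_K, DIM = 128, 8, 4   # 128 experts and top-8 come from the text; DIM is a toy size
rng = random.Random(0)

# Toy parameters: one router vector and one scaling "expert" per slot (illustrative only).
router = [[rng.gauss(0, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]
expert_scale = [rng.uniform(0.5, 1.5) for _ in range(NUM_EXPERTS)]
SHARED_SCALE = 1.0                    # the shared expert runs for every token

def moe_layer(x):
    """Route one token vector to its top-k experts plus the shared expert."""
    scores = [sum(w * xi for w, xi in zip(row, x)) for row in router]
    top = sorted(range(NUM_EXPERTS), key=lambda i: scores[i], reverse=True)[:TOP_K]
    # Softmax over the selected experts only.
    m = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - m) for i in top]
    total = sum(exps)
    gates = [e / total for e in exps]
    out = [SHARED_SCALE * xi for xi in x]              # shared expert output
    for g, i in zip(gates, top):
        out = [o + g * expert_scale[i] * xi for o, xi in zip(out, x)]
    return out, top

output, chosen = moe_layer([0.3, -1.2, 0.8, 0.1])
print(len(chosen), "of", NUM_EXPERTS, "experts used:", chosen)
```

Only the chosen experts do any work for a given token, which is where the gap between total and active parameters comes from.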

At the other end, there are two small, dense models with 2B and 4B effective parameters: E2B and E4B. They approach the same problem, capacity vs. active compute, from a different angle. The small Gemmas use per-layer embeddings, which compress how representations are stored across the network, so the effective footprint is smaller than naive scaling would suggest. That’s why you can use them on edge devices. (We will discuss their features in more detail a little later.)

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst

Even though all Gemma 4 variants target very different hardware setups, they share the same underlying structure and components that define a common architectural backbone of efficiency →

Attention Mix: Local + Global

Honestly, we are incredibly lucky that Maarten Grootendorst broke down all aspects of the Gemma 4 architecture in his guide. Here, we’ll briefly walk through the key points so you can understand what makes Gemma 4’s workflow distinct and especially efficient. (The link to the original guide is in the sources section at the end.)

First, all models interleave local and global attention, which allows them to balance efficiency with the ability to reason over long contexts. This mixture was already used in Gemma 3, but Gemma 4 structures it a little differently.

Most layers use sliding window attention: each token attends only to a fixed number of nearby preceding tokens, set by the window size. In Gemma 4, the window is 512 tokens for the smaller models and 1024 for the larger ones. Why use this type of attention here? If the total sequence length is n and the window size is w, it reduces the cost of attention from O(n²) to O(n·w).

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst
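The O(n²) versus O(n·w) difference is easy to see by counting how many (query, key) pairs each scheme touches. This is a counting sketch only; the sequence length is hypothetical, and whether the window includes the current token is an assumption made here.

```python
from typing import Optional

def attended_pairs(n: int, window: Optional[int] = None) -> int:
    """Count (query, key) pairs under causal attention.

    With window=None every token sees all earlier tokens (full attention);
    otherwise each token sees at most `window` most recent tokens, itself included.
    """
    if window is None:
        return n * (n + 1) // 2
    return sum(min(i + 1, window) for i in range(n))

n = 8192  # hypothetical sequence length
full = attended_pairs(n)
local = attended_pairs(n, window=512)
print(f"full: {full:,}  sliding(512): {local:,}  ratio: {full / local:.1f}x")
```

The gap widens as the sequence grows, because the full count grows quadratically while the windowed count grows linearly.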

However, local attention alone can’t reliably capture long-range dependencies. Information can still propagate forward through hidden states, but it becomes increasingly diluted along the way.

To compensate for this, Gemma 4 mixes these local layers with global attention layers that can attend to the entire context. The smallest E2B model follows a 4:1 ratio (four local layers, then one global), and all the others use a 5:1 ratio. The final layer is always global, because ending with a local layer limits the model’s ability to integrate the full context at the final step. This is a meaningful change from Gemma 3.

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst

As a result of this attention structure, a model alternates between cheap, local processing and occasional global “synchronization” steps. Most of the time, it operates efficiently on a narrow window of tokens, and periodically re-aligns itself with the full sequence.
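The interleaving pattern can be written down as a simple layer schedule. The ratios (4:1 and 5:1) and the "final layer is global" rule come from the text; the layer counts below are hypothetical and only serve to show the pattern.

```python
def layer_schedule(num_layers: int, locals_per_global: int) -> list:
    """Build a repeating pattern of N local layers followed by one global layer."""
    period = locals_per_global + 1
    schedule = [
        "global" if (i + 1) % period == 0 else "local"
        for i in range(num_layers)
    ]
    schedule[-1] = "global"  # the final layer is always global
    return schedule

# Layer counts here are hypothetical, chosen only to show the pattern.
print(layer_schedule(10, 4))   # 4:1 ratio, as described for E2B
print(layer_schedule(12, 5))   # 5:1 ratio, as described for the other models
```

Forcing the last entry to be global only changes anything when the layer count is not a multiple of the period.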

But without further design changes, global attention still can’t stay stable and computationally manageable over long contexts. So Google DeepMind introduced the following changes.

Five Special Optimizations for Global Attention

The goal is to compress how much information global attention needs to store and process. So, there are five changes that make global attention layers in Gemma 4 look quite different from a standard Transformer layer:

Using 8 query heads per KV head in Grouped Query Attention (GQA)

GQA compresses the KV cache by letting multiple query heads share the same KV (key-value) representations. In the local attention layers, this sharing is relatively small, with two query heads sharing one KV head. In global layers it is pushed further: 8 query heads share a single KV head. Since KV-cache size scales with the number of KV heads and the sequence length, this reduces memory usage by roughly a factor of 8 compared to standard multi-head attention.
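The factor of 8 follows directly from how the KV cache is sized. In the sketch below, all shapes (sequence length, layer count, head count, head dimension) are hypothetical; only the 8:1 sharing ratio comes from the text.

```python
def kv_cache_bytes(seq_len, num_layers, num_kv_heads, head_dim, bytes_per_value=2):
    """Size of the KV cache: keys and values for every layer, KV head and position."""
    return 2 * seq_len * num_layers * num_kv_heads * head_dim * bytes_per_value

# All shapes below are hypothetical and chosen only to show the scaling.
SEQ_LEN, LAYERS, QUERY_HEADS, HEAD_DIM = 131_072, 10, 32, 128

mha = kv_cache_bytes(SEQ_LEN, LAYERS, QUERY_HEADS, HEAD_DIM)        # one KV head per query head
gqa = kv_cache_bytes(SEQ_LEN, LAYERS, QUERY_HEADS // 8, HEAD_DIM)   # 8 query heads per KV head

print(f"MHA: {mha / 2**30:.1f} GiB, GQA (8:1): {gqa / 2**30:.1f} GiB, saving: {mha // gqa}x")
```

Because the cache grows linearly with sequence length, this saving matters most in exactly the long-context global layers where it is applied.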

Doubling the key dimensionality to preserve capacity

Yes, GQA is a better choice for optimization, but fewer KV heads also mean less representational capacity in the shared keys and values. DeepMind researchers have found a solution: the model compensates for this by doubling the dimensionality of the keys in global attention, packing more information into each shared representation.

Setting keys equal to values (K = V) to reduce memory

The next step is to remove redundancy between KV pairs. In global attention, the keys become equal to the values (K=V), which removes the need to store separate value vectors, simplifies the attention mechanism and reduces memory bandwidth.
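A minimal single-head sketch shows what K = V means in practice: the same cached vectors are used both to score against the query and to build the output, so no separate value cache is kept. This is an illustration with toy vectors; the real layers also involve learned projections and normalization that are not modeled here.

```python
import math

def attention_shared_kv(query, keys):
    """Single-head attention where the cached keys double as values (K = V)."""
    scale = 1.0 / math.sqrt(len(query))
    scores = [scale * sum(q * k for q, k in zip(query, key)) for key in keys]
    m = max(scores)
    weights = [math.exp(s - m) for s in scores]
    total = sum(weights)
    weights = [w / total for w in weights]
    # The output is a weighted sum of the same vectors used for scoring.
    dim = len(keys[0])
    return [sum(w * key[d] for w, key in zip(weights, keys)) for d in range(dim)]

# Toy cache with one vector per position; no separate value cache is kept.
cache = [[1.0, 0.0, 0.0, 0.0], [0.0, 1.0, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0]]
print(attention_shared_kv([4.0, 0.0, 0.0, 0.0], cache))
```

The query here points at the first cached vector, so that vector dominates the output.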

Applying p-RoPE (p ≈ 0.25) to stabilize long-context behavior

Basically, RoPE (Rotary Position Embeddings) is used in AI models to inject positional information by rotating pairs of dimensions at different frequencies, where high-frequency components encode precise positional shifts and low-frequency components remain closer to the original semantic embedding. But Gemma 4 also modifies the positional encoding through a pruned version of RoPE, called p-RoPE (partial RoPE), in global attention layers. Positional encoding is only applied to a subset, for example, 25% of the dimensions (p = 0.25). The remaining dimensions are left unchanged. This allows Gemma to retain strong positional signals where needed, while preserving clean semantic representations in the rest of the vector space.

Why is it important? By limiting how much positional rotation is applied, the model can generalize better to long distances it may not have seen during training, and so better handle very long contexts up to 256K tokens.
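Here is a small sketch of the partial-rotation idea: RoPE is applied to a fraction p of the dimension pairs, and the rest pass through untouched. Which pairs are rotated is an assumption in this sketch (the first, highest-frequency ones in standard RoPE ordering), as are the base and the toy vector size.

```python
import math

def partial_rope(vec, position, p=0.25, base=10000.0):
    """Rotate only the first fraction `p` of dimension pairs; leave the rest untouched.

    Assumption: pairs are ordered from highest to lowest frequency, as in
    standard RoPE, so the rotated subset is the high-frequency one.
    """
    num_pairs = len(vec) // 2
    rotated_pairs = int(num_pairs * p)
    out = list(vec)
    for i in range(rotated_pairs):
        theta = position * base ** (-2 * i / len(vec))
        x, y = vec[2 * i], vec[2 * i + 1]
        out[2 * i] = x * math.cos(theta) - y * math.sin(theta)
        out[2 * i + 1] = x * math.sin(theta) + y * math.cos(theta)
    return out

v = [1.0] * 16                      # toy 16-dim vector -> 8 pairs, 2 of them rotated
r = partial_rope(v, position=1000)
print(r[:4], r[4:] == v[4:])
```

The untouched dimensions are identical at every position, which is what keeps them purely semantic no matter how long the context gets.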

Add the condition that the final layer is always global attention, and the result is a significantly reduced cost of global attention, which makes it more practical for Gemma 4 models to combine long context windows with efficient inference. The core idea is not to remove expensive components entirely, but to reshape them so they fit within a tighter compute budget.

The vision pipeline

All of this sits on top of a multimodal foundation, because every model in the Gemma 4 family can process images. Images are turned into structured tokens, just like text, but with more care: the pipeline adapts to different image shapes, scales resolution based on compute, and integrates visual information directly into the same pipeline as text.

Here is the entire process:

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst

Splitting the image into patches with a Vision Transformer (ViT) as the image encoder. Typically, patches are 16×16 pixels, and the Transformer processes the image as a flat sequence of patches, ignoring their 2D structure. In this setup, a single position index is no longer meaningful. But the layout still matters a lot, so →

Encoding spatial position using 2D RoPE

To address this, Gemma 4 divides each patch embedding into two parts:

one part encodes horizontal position (width)

the other encodes vertical position (height)

RoPE is then applied separately along these two axes. This helps the model to understand where a patch is located in the image, and this information is consistent across different shapes and layouts.
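The two-axis idea can be sketched by reusing ordinary 1D RoPE on each half of the embedding. Which half carries which axis, the pairing of adjacent dimensions, and the toy embedding size are assumptions made for illustration.

```python
import math

def rope_1d(vec, position, base=10000.0):
    """Standard RoPE on adjacent dimension pairs for a single position index."""
    out = list(vec)
    for i in range(len(vec) // 2):
        theta = position * base ** (-2 * i / len(vec))
        x, y = vec[2 * i], vec[2 * i + 1]
        out[2 * i] = x * math.cos(theta) - y * math.sin(theta)
        out[2 * i + 1] = x * math.sin(theta) + y * math.cos(theta)
    return out

def rope_2d(patch_embedding, col, row):
    """Rotate the first half by the horizontal index and the second half by the vertical one."""
    half = len(patch_embedding) // 2
    return rope_1d(patch_embedding[:half], col) + rope_1d(patch_embedding[half:], row)

patch = [1.0, 0.0] * 4                 # toy 8-dim patch embedding
same_row = rope_2d(patch, col=3, row=2)
moved_right = rope_2d(patch, col=7, row=2)
print(same_row[4:] == moved_right[4:])  # vertical half unchanged when only the column moves
```

Because each axis is encoded independently, a patch's row encoding does not depend on how wide the image is, which is what keeps positions consistent across shapes.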

Instead of forcing images into a fixed square, which would distort or crop them, Gemma 4 uses adaptive resizing with padding (filling the remaining space) to preserve aspect ratio.

Then it pools nearby patches together (like averaging groups of 3×3 patches into a single embedding) to control token count.

Applying a token budget to manage resolution:

Gemma 4 introduces a soft token budget with options like 70, 140, 280, 560, or 1120 tokens. This budget defines how many visual tokens are passed to the language model: higher budget → higher resolution → more detail, while lower budget → lower resolution → faster processing.
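To see how a token budget translates into resolution, the sketch below picks an aspect-preserving image size whose pooled token count stays within the budget. The patch size (16) and 3×3 pooling come from the text; the resizing rule itself is a hypothetical stand-in, not the actual Gemma 4 preprocessing, and padding is not modeled.

```python
import math

PATCH = 16   # patch side in pixels, as in the text
POOL = 3     # 3x3 patches pooled into one token, as in the text's example

def fit_to_budget(width, height, budget):
    """Pick an aspect-preserving size whose pooled token count stays within `budget`.

    Hypothetical resizing rule for illustration; the real procedure may differ.
    """
    cell = PATCH * POOL                               # pixels covered by one token per side
    scale = math.sqrt(budget * cell * cell / (width * height))
    new_w = max(cell, int(width * scale) // cell * cell)
    new_h = max(cell, int(height * scale) // cell * cell)
    tokens = (new_w // cell) * (new_h // cell)
    return new_w, new_h, tokens

for budget in (70, 280, 1120):
    print(budget, fit_to_budget(1920, 1080, budget))
```

Under this rule, quadrupling the budget roughly doubles the resolution along each side, which is the detail-versus-speed trade-off the budget options expose.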

Finally, a linear projection maps image embeddings into the same space as text embeddings. After this projection, RMSNorm is applied to match the scale expected by the Transformer.
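The last step, projection followed by RMSNorm, looks like this in miniature. The weights and sizes are toy values, and the learned gain that RMSNorm usually carries is omitted for brevity.

```python
import math

def linear(x, weight):
    """Project a vector with a weight matrix given as a list of output rows."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in weight]

def rms_norm(x, eps=1e-6):
    """Scale a vector to unit root-mean-square (learned gain omitted)."""
    rms = math.sqrt(sum(v * v for v in x) / len(x) + eps)
    return [v / rms for v in x]

# Toy sizes: a 3-dim image embedding mapped into a 4-dim text space (hypothetical weights).
W = [[0.5, -1.0, 2.0], [1.5, 0.0, -0.5], [0.0, 3.0, 1.0], [-2.0, 1.0, 0.5]]
image_embedding = [10.0, -4.0, 7.0]

token = rms_norm(linear(image_embedding, W))
print(token, math.sqrt(sum(v * v for v in token) / len(token)))
```

Whatever the magnitude of the raw image embedding, the normalized token ends up on the same scale, so the language model sees image and text tokens at comparable magnitudes.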

Together, these steps make image processing adapt to each image’s shape, the chosen resolution, and the available compute budget.

The smaller versions of Gemma extend multimodality further with native audio input support. But they also have one more interesting architectural twist. →

E4B and E2B Model Specifics

As we’ve mentioned, the dense, edge-optimized E2B and E4B variants rely on per-layer embeddings for efficiency. Why do they need this special change?

For on-device use, memory is often a stricter constraint than compute, so we need to adapt models to it more cautiously.

In a standard Transformer, token embeddings are created once at the input and then transformed through the network. In E2B and E4B, an additional set of smaller embeddings is introduced for each layer. For every token, the model retrieves a set of embeddings, one per layer.

These per-layer embeddings are much smaller than the main embeddings and are stored separately in cheaper memory. They are looked up once during inference and then injected into the model at each layer.

Before use, they pass through a small gating mechanism that controls how much signal is applied, then they are projected to match the model’s internal dimensionality and combined with the representation.

This has two important effects that the smaller Gemma 4 models need:

It reduces the amount of information that needs to be carried through the network, since some of it can be reintroduced at each layer.

It allows a large portion of the parameters to be stored outside of active memory, which is particularly useful on devices with limited RAM and other constraints like latency and battery.
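The per-layer embedding mechanism described above can be sketched as a lookup, a gate, a projection, and an addition. All sizes and weights are toy values, and the scalar sigmoid gate is an assumption; the text only says that a small gating mechanism controls how much signal is applied.

```python
import math
import random

rng = random.Random(0)
VOCAB, LAYERS, SMALL_DIM, MODEL_DIM = 50, 3, 2, 4   # toy sizes, all hypothetical

# One small embedding table per layer; on device these could live in slower, cheaper storage.
per_layer_tables = [[[rng.gauss(0, 1) for _ in range(SMALL_DIM)] for _ in range(VOCAB)]
                    for _ in range(LAYERS)]
up_proj = [[[rng.gauss(0, 0.1) for _ in range(SMALL_DIM)] for _ in range(MODEL_DIM)]
           for _ in range(LAYERS)]
gate_w = [[rng.gauss(0, 0.1) for _ in range(MODEL_DIM)] for _ in range(LAYERS)]

def inject(hidden, token_id, layer):
    """Gate a layer-specific token embedding, project it up, and add it to the hidden state."""
    small = per_layer_tables[layer][token_id]                       # lookup, one per layer
    gate = 1 / (1 + math.exp(-sum(g * h for g, h in zip(gate_w[layer], hidden))))
    projected = [sum(w * s for w, s in zip(row, small)) for row in up_proj[layer]]
    return [h + gate * p for h, p in zip(hidden, projected)]

hidden = [0.1, -0.2, 0.3, 0.0]
for layer in range(LAYERS):
    hidden = inject(hidden, token_id=7, layer=layer)   # a real layer's attention/MLP would run here too
print(hidden)
```

The tables are only ever read by token ID, never multiplied as dense matrices, which is why they can sit outside fast memory without slowing down each layer's main computation.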

As a result, E2B and E4B support multimodal inputs like text, images, and even audio easily and can run fully offline on phones, laptops, other devices and embedded systems.

The smaller models also include an audio encoder to process speech alongside text and images. The audio pipeline converts raw audio into embeddings using a combination of spectrogram features, convolutional layers, and a Transformer-based encoder (a conformer). These embeddings are then projected into the same space as text and image embeddings, allowing the model to process all modalities together.

Image Credit: A Visual Guide to Gemma 4 by Maarten Grootendorst

Interestingly, only smaller variants of Gemma 4 handle audio data. Again, this shows that different variants are meant to focus on different targets: 2B and 4B are designed for real-time use with continuous input processing, where audio fits in very naturally, while 26B and 31B are heavier reasoning engines for complex generation, reasoning and planning, and audio would make them even heavier. Why overlap them with what smaller models can already do better?

Specialization for particular use cases, hardware and tasks is the main priority of this Gemma release.

And now we’re getting to probably the most important question →

Why are many OpenClaw users now switching to it?

…and why you should take a closer look at this model to power your workflow.

The most telling sign of a model’s adoption right now is which models OpenClaw users choose to use. And they are actively testing, routing to, or switching parts of their workflow to Gemma 4 models, because it suddenly made the local/OpenClaw stack look much more practical: free or near-free inference, better privacy, strong size-to-quality ratio, permissive Apache 2.0 licensing, multimodality, long context, and day-one availability across Ollama/NVIDIA/local runtimes. This is not so much a story of benchmarks as a story of usability, economy, and convenience.

Some people are framing Gemma 4 as something you can use right away in OpenClaw and other agent frameworks. And there are many reasons why people at least try to move to Gemma 4 in OpenClaw:

A potential replacement for expensive closed models

In the Reddit/OpenClaw discussion, users describe Gemma 4 as useful for multi-routing and as a local triage layer so only harder tasks go to Claude. A popular idea is that Gemma 4 + OpenClaw can replace Claude Code, because people don’t want to pay twice for Anthropic subscriptions plus API credits. Many posts repeatedly push the “free forever,” “zero API costs,” and “no usage limits” story because it resonates with a real pain point of many users.

Image Credit: Reddit

Gemma 4 hit the sweet spot on size-to-capability

Google’s idea of “intelligence-per-parameter” matters in the market, because for OpenClaw users, that means that the workflow might finally fit their Mac Mini / workstation / consumer GPU and even phones.

Apache 2.0 licensing removed a lot of hesitation, and this is another solid reason why adoption accelerated.

Gemma 4 supports the features that matter most for current agentic workflows: function calling, reasoning modes, system-role support, structured JSON, native system instructions, multimodal input, long context, and improved coding/agentic capability. This is now the bare minimum for builders.

The models became available everywhere fast. NVIDIA, Ollama, Google AI Studio, and the OpenClaw docs, which include a local inferrs example using Gemma 4, all made Gemma 4 feel “live” quickly.

Gemma 4 models are also designed to be fine-tunable to adapt them to specific domains or tasks using standard frameworks.

Putting all this together with the technical side, the shift toward Gemma 4 is about collapsing multiple constraints at once:

lower active compute

strong reasoning performance

native support for agentic workflows

deployment across phones, laptops and GPUs

offline operation for smaller versions, including code generation

permissive Apache 2.0 license for full commercial use

Beyond OpenClaw specifically, Gemma 4’s wider ecosystem momentum is real: it is now the #1 trending model on Hugging Face, and the Gemma family as a whole had already reached 400M+ downloads and 100k community variants.

But is everything really so perfect with adoption?

Why Some Users are Pushing Back

Some OpenClaw and LocalLLaMA users say Gemma 4 is still worse than, for example, Qwen3.5 for OpenClaw, especially for tool use and maintaining agent context. In their words, it simply forgets what is happening.

Image Credit: Reddit

The OpenClaw docs also warn that local models need large context and strong prompt-injection defenses, and that aggressively quantized or small checkpoints can be risky. The inferrs docs note that some Gemma combinations fail on full OpenClaw agent turns and may need supportsTools: false.

A LocalLLaMA troubleshooting post on Reddit describes how OpenClaw’s huge system prompt, hardcoded timeout behavior, and local backend quirks can make Gemma 4 appear broken until heavily tuned. So while some users are moving to Gemma 4 because it is attractive, others are bouncing off because the integration is still immature.

The general pattern is already clear: users are switching to Gemma 4 where it is good enough and cheap enough, while many still keep Qwen/MiniMax as fallback for harder agentic jobs. But it is just the first week of adoption.

Conclusion

Gemma 4 is not trying to win by being the largest model. Instead, it is trying to make high-level capability accessible under realistic constraints:

strong reasoning, but with fewer active parameters

multimodality, but optimized for real devices

agentic behavior, but runnable locally

Everything is about reality, and everything is built for real user needs. Google DeepMind sees that people are excited about agents like OpenClaw and gives them a model that becomes the new default local candidate to try first there. We get a frontier lab’s development straight into our hands – and that’s a real nod to open source.

Gemma 4 is also the first recent open model that combines enough of the right things at once: strong local performance, practical model sizes, native agent-friendly features, multimodality, Apache 2.0 licensing, wide hardware support, and a compelling “no API bills + more privacy” story. That combination matches the OpenClaw user base, and the broader AI user base, unusually well.

And from the tech side, the Gemma 4 family’s design is less about scaling a single architecture up and down, and more about adapting the architecture itself to different constraints. So if each Gemma 4 model is structured around adaptability, hardware efficiency, and user-friendly convenience, then maybe the adoption issues are just a matter of time, and soon we’ll see a more mature version of Google DeepMind’s open model?

Sources and further reading

Gemma 4 | Model Page | Model Card | Hugging Face | Ollama

Gemma Docs

From RTX to Spark: NVIDIA Accelerates Gemma 4 for Local Agentic AI | Blog post

Reddit/OpenClaw discussion

Reddit/LocalLLaMA Fix OpenClaw + Ollama local models Tutorial/Guide
