If Turing Post is part of your weekly routine, please share it with one smart friend. It’s the simplest way to keep the Monday digests free.

This Week in Turing Post:

Wednesday / AI 101 series: Transformers are starting to treat depth the way they already treat sequence: as an addressable dimension – deep dive

Friday / Series: Interview and deep dive with Ali Kani on Alpamayo, AlpaDream, Cosmos, and everything in between that powers NVIDIA’s self-driving systems (plus my honest opinion after the test-drive)

From our partners: Still building document ingestion pipelines from scratch?

With LlamaParse (from LlamaIndex), you can skip the complexity and leverage proprietary document understanding agents built for agentic OCR and enterprise-scale AI workflows:

Extract reliable data from PDFs, spreadsheets, images, and scanned or handwritten files

Transform unstructured documents into AI-ready knowledge for RAG and agentic applications

Deploy ingestion pipelines that scale without constant maintenance

Trusted by Fortune 500 enterprises like BP, EY, KPMG, the world's fastest startups like Lovable and Tabs, and over 300,000 developers, LlamaParse helps teams move from raw documents to production-ready AI faster.

Main topic: 100,000 subscribers! (actually, already more than that)

A few pieces of news to share.

Even though I have been writing about ML and AI for the past seven years, Turing Post itself is not even three years old yet, and here we are with more than 100,000 readers on the mailing list alone. Our YouTube channel, a much more recent addition, will turn one in just a couple of months and is about to cross 7,000 followers. Strangely enough, YouTube has become my favorite spot for thoughtful discussion. Our X account has become known for its research coverage, and we are about to cross 90,000 followers there as well.

We are still a very small team: two full-time people, me and Alyona Vert, plus Will Schenk as a contributor. And I do not take any of this success for granted.

Every day, I see names among our free and premium subscribers whose work shows such rigor, seriousness, and depth that I feel genuinely humbled. To each and every one of you, thank you. Thank you for reading, subscribing, sharing, commenting, and also for reading silently but steadily. All of it matters.

I also wanted to share a few changes in direction.

I had been working on a series about open-source AI, but this space is still too young, too fluid, and too early for conclusions that would feel truly solid. Rather than pretend otherwise, I prefer to wait and do it properly. So instead, we will publish a State of Open-Source AI later this year.

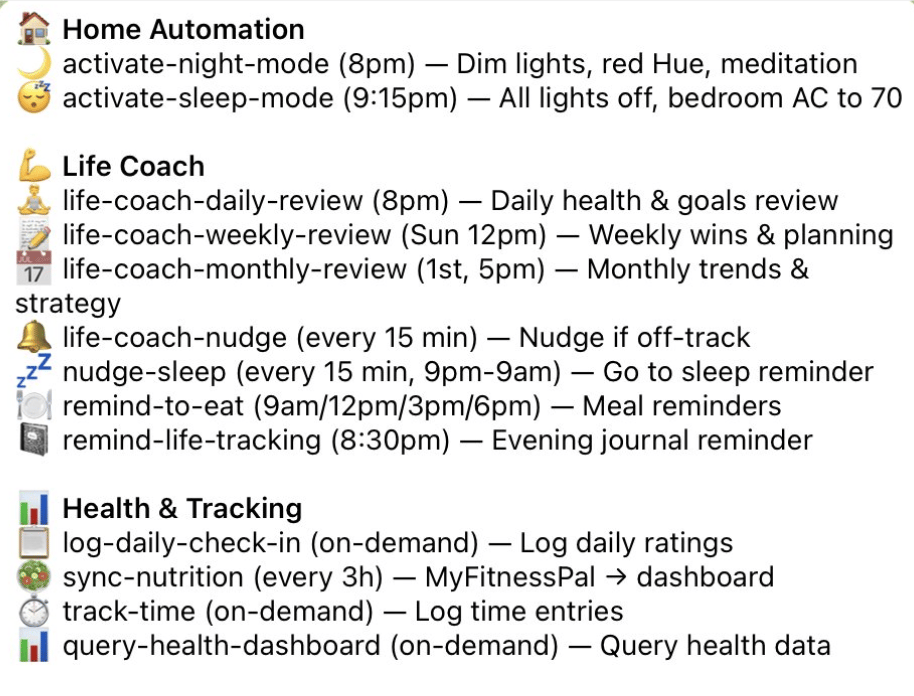

At the same time, your feedback has made a few things very clear. Right now, the strongest interest is around agentic coding and engineering, and around what began almost accidentally as The Organizational Age of AI series. The response to both has been so strong that, for the next quarter or two, they will likely become two of our main Friday series.

And one more addition: going forward, one of the main new pillars of our AI 101 series will be security, including the emerging best practices for building and deploying these systems responsibly.

That also means that in the coming weeks, you should expect some phenomenally interesting speakers from these worlds.

And I mean that sincerely. There were a couple of strange synchronicities for me at NVIDIA GTC, and they left me with a deeper sense of connection to the part of AI that is actually creating standards, building infrastructure, and opening entirely new worlds.

That, in the end, is also what I want Turing Post to keep doing: helping make sense of a field that is moving fast, speaking clearly about what matters, and bringing a bit more structure to places where there is still mostly noise.

Thank you for helping Turing Post become what it is today.

And thank you for making it worth building further.

To celebrate, we are offering a 20% discount on the annual Premium subscription. The offer ends on March 31.

You will immediately get access to our most popular:

We are also seeing growing interest in consulting sessions with us. At the moment, you can choose whom you would like to speak with: Ksenia Se or Will Schenk. Get a consultation here.

PS: We’re working on a few things beyond the content we create. Stay tuned.

No Attention Span episode today. I needed some rest after a wildly intense NVIDIA GTC. But I still have something for you: the second part of my interview with Michael Bolin, on the crucial skills developers need today and how to shift your mindset from coding to managing agentic systems.

Our news digest on Mondays is always free. Click on the partner’s link above to support us or upgrade to receive our deep dives in full, directly in your inbox. Join Premium members from top companies like Nvidia, Hugging Face, Microsoft, Google, a16z, etc., plus AI labs and institutions such as Ai2, MIT, Berkeley, .gov, and thousands of others to really understand what’s going on with AI →

Follow us on 🎥 YouTube Twitter Hugging Face 🤗

Twitter Library

We are reading/watching:

What comes next with open models by Nathan Lambert

The meaning of open (2009)

News from the usual suspects (or should we say, agents…)

A huge release from OpenClaw (Hi Vincent!)

Plus a useful open-source prebuilt package, “ClawFlows,” from Nikil Viswanathan →check their GitHub

this list is much longer

Hermes Agent – an open-source agent that refuses to stay in its lane

Nous Research has unveiled Hermes Agent, an MIT-licensed autonomous agent designed to live on your own server, accumulate memory, and improve over time. It is not masquerading as yet another IDE sidekick or thin chatbot shell: Hermes spans CLI, Telegram, Discord, Slack, and WhatsApp, with sandboxing, automations, subagents, and browser control. Worth checking out.

HyperAgents – Explores recursive self-improvement by allowing agents to modify not only their behavior but also the mechanism that generates improvements, pushing toward open-ended learning systems →read the paper

MiroThinker-1.7 & H1: Towards Heavy-Duty Research Agents via Verification – Introduces verification directly into the reasoning loop, making agents audit and refine their own steps instead of trusting a single forward pass →read the paper

OpenSeeker: Democratizing Frontier Search Agents by Fully Open-Sourcing Training Data – Shows that strong search agents are as much a data problem as a modeling one by releasing the full training pipeline and demonstrating competitive performance with limited data →read the paper

Memento-Skills: Let Agents Design Agents – Turns agents into systems that build and refine other agents through evolving skill libraries, without updating base model weights →read the paper

🔦 Models Highlight

MiniMax M2.7: Early echoes of self-evolution

Researchers from MiniMax describe M2.7 as a model that helps improve its own agent harness, handling 30%-50% of RL workflows and autonomously iterating over 100 rounds to boost internal programming performance by 30%. It scored 56.22% on SWE-Pro, 55.6% on VIBE-Pro, 57.0% on Terminal Bench 2, 1495 Elo on GDPval-AA, 46.3% on Toolathon, and 62.7% on MM Claw, and achieved a 66.6% average medal rate across 22 ML competitions.

Nemotron-Cascade 2: Post-Training LLMs with Cascade RL and Multi-Domain On-Policy Distillation – Pushes post-training into a structured pipeline where RL and distillation co-evolve across domains, showing how smaller models can reach very high reasoning density →read the paper

Research this week

(as always, 🌟 indicates papers we recommend paying attention to)

What we see this week:

Online learning is becoming real

Agents are turning into self-improving systems

Long-horizon coherence is still the real pain point

Researchers are attacking information decay inside models

Efficiency is now a first-class goal

Multimodal systems still fake understanding too often

AI is moving toward judgment, not just execution

Multilingual coverage is expanding fast

Self-improving agents, online learning, and agent optimization

🌟 Efficient Exploration at Scale (Google DeepMind) – Shows how RLHF can become far more label-efficient by updating reward and language models online instead of treating preference data as a static pile →read the paper

🌟 Online Experiential Learning for Language Models (Microsoft Research) – Turns deployment itself into a training signal and asks a simple but important question: why let real-world experience evaporate after inference? →read the paper

MetaClaw: Just Talk – An Agent That Meta-Learns and Evolves in the Wild – Builds a practical picture of agents that keep serving users while still improving, combining fast skill updates with opportunistic policy tuning →read the paper

🌟 Complementary Reinforcement (Alibaba, HKUST) – Reframes memory and experience not as a static add-on for agents, but as something that should co-evolve with the policy during training →read the paper

POLCA: Stochastic Generative Optimization with LLM – Treats the model as an optimizer for prompts, agents, and systems, which makes it especially interesting for people thinking about automated iteration loops rather than single outputs →read the paper

🌟 A Subgoal-driven Framework for Improving Long-Horizon LLM Agents (Google DeepMind) – Tackles one of the ugliest agent problems directly: staying coherent across long tasks by combining explicit subgoals at inference time with milestone-based rewards during training →read the paper

Cooperation and Exploitation in LLM Policy Synthesis for Sequential Social Dilemmas – Explores whether LLMs can iteratively write better agent policies for multi-agent settings and shows how feedback design shapes cooperation rather than just raw reward chasing →read the paper

Reasoning, judgment, and AI as a cognitive system

AI Can Learn Scientific Taste – Pushes beyond execution and asks whether models can learn to recognize which research ideas are worth pursuing in the first place →read the paper

Understanding Reasoning in LLMs through Strategic Information Allocation under Uncertainty – Explains why apparent reasoning breakthroughs may depend less on magic words and more on whether the model externalizes uncertainty in a usable way →read the paper

Motivation in Large Language Models – Tests whether “motivation” is a useful lens for understanding model behavior and ends up probing a surprisingly awkward border between metaphor and mechanism →read the paper

🌟 When AI Navigates the Fog of War (MBZUAI) – Uses an unfolding geopolitical crisis as a rare low-leakage setting for testing real-time model reasoning instead of the usual hindsight theater →read the paper

Semi-Autonomous Formalization of the Vlasov-Maxwell-Landau Equilibrium – Offers a concrete case study of AI-assisted mathematical research where proving, coding, and verification get split across tools in a surprisingly disciplined workflow →read the paper

Model architectures, compression, and efficient inference

🌟 Attention Residuals (Kimi Team) – Reworks residual connections themselves, which is interesting because it targets a basic Transformer assumption that most people treat as settled furniture →read the paper

🌟 Mixture-of-Depths Attention – Lets models attend not only across sequence positions but across layers, directly attacking the feature dilution problem that shows up as models grow deeper →read the paper

Effective Distillation to Hybrid xLSTM Architectures – Makes the case that sub-quadratic architectures still have life in them if distillation is handled with more care than the usual “good luck, little student” recipe →read the paper

🌟 Efficient Reasoning on the Edge (Qualcomm) – Brings reasoning to constrained devices by treating verbosity, adapter routing, and KV-cache management as first-class engineering problems rather than unfortunate details →read the paper

BEAVER: A Training-Free Hierarchical Prompt Compression Method via Structure-Aware Page Selection – Compresses long contexts by preserving document structure instead of blindly chopping tokens, which is exactly where many compression methods go feral →read the paper

LoopRPT: Reinforcement Pre-Training for Looped Language Models – Shows how latent-loop architectures may need their own training logic rather than recycled token-level RL borrowed from standard LLMs →read the paper

🌟 Beyond Single Tokens: Distilling Discrete Diffusion Models via Discrete MMD (Google DeepMind) – Matters because discrete diffusion has looked promising but awkward to distill, and this paper tries to make step-reduced generators actually viable →read the paper

Multimodal understanding, reliability, and video reasoning

Anatomy of a Lie: A Multi-Stage Diagnostic Framework for Tracing Hallucinations in Vision-Language Models – Diagnoses hallucinations as a process failure rather than a bad final answer, which makes it more useful for debugging than another benchmark scorecard →read the paper

Cognitive Mismatch in Multimodal Large Language Models for Discrete Symbol Understanding – Exposes a nasty weakness in multimodal systems: they can sometimes “reason” around symbols they never actually perceived correctly →read the paper

🌟 Unified Spatio-Temporal Token Scoring for Efficient Video VLMs (Ai2) – Prunes video tokens across the full stack rather than in one isolated module, which makes it interesting for anyone who cares about end-to-end efficiency instead of local cleverness →read the paper

🌟 HopChain: Multi-Hop Data Synthesis for Generalizable Vision-Language Reasoning (Qwen) – Synthesizes the kind of chained multimodal training data that actually stresses perception, reasoning, and hallucination together instead of pretending those failures live in separate boxes →read the paper

Representation, multilinguality, and information access

F2LLM-v2: Inclusive, Performant, and Efficient Embeddings for a Multilingual World – Expands the embedding conversation beyond English-heavy leaderboards and focuses on what useful multilingual coverage should look like in practice →read the paper

Omnilingual MT: Machine Translation for 1,600 Languages – Pushes translation toward real linguistic breadth and highlights a key asymmetry: many models can sort of understand underrepresented languages long before they can generate them well →read the paper

How Well Does Generative Recommendation Generalize? – Separates memorization from genuine generalization in recommender systems and shows that the answer is messier, and more complementary, than the hype usually suggests →read the paper

That’s all for today. Thank you for reading! Please send this newsletter to colleagues if it can help them enhance their understanding of AI and stay ahead of the curve.