Humans’ brains don’t process every tiny detail of the world. Instead, we rely on abstract representations formed from past experiences – mental models – to guide our decisions. Even before events occur, our brains continuously predict outcomes based on these models and prior actions.

This is precisely the concept behind world models in AI.

Rather than learning directly through trial and error in the real world, an AI agent uses a "world model" – a learned simulation of its environment – to imagine and explore possible sequences of actions. By simulating these actions internally, the AI finds paths leading toward desired outcomes.

This approach has significant advantages. Firstly, world models drastically reduce the resources required by avoiding the physical execution of every possible action. More importantly, they align AI more closely with how the human brain actually functions – predicting, imagining scenarios, and calculating outcomes. Yann LeCun once stated that world models are crucial to achieving human-level AI, though it may take approximately a decade to fully realize their potential.

Today, we're working with early-stage world models. Properly understanding their mechanisms, recognizing the capabilities of the models we currently have, and dissecting their inner workings will be essential for future breakthroughs. Let's begin our grand journey into the fascinating world of world models.

What’s in today’s episode?

Historical background of first world models

What’s necessary today to build a world model?

Notable world models

Google DeepMind’s DreamerV3

Google DeepMind’s Genie 2

NVIDIA Cosmos World Foundation Models

Meta and Navigation World Model (NWM)

Conclusion: Why are world models important?

Sources and further reading

Historical background of first world models

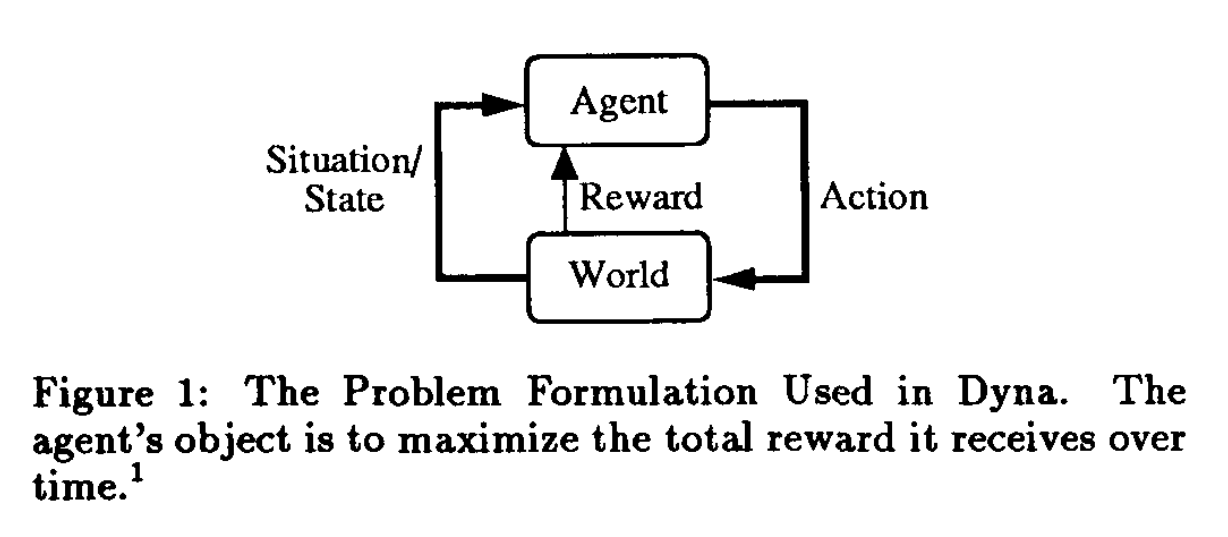

While the term "world model" gained popularity in the last few years, the underlying concept has antecedents in earlier AI research. This idea is dating back to 1990 with the Richard S. Sutton’s Dyna algorithm. It’s a fundamental approach to model-based reinforcement learning (MBRL) that integrates learning a model with planning and reacting, so agents using Dyna can:

Try actions and sees what works (trial and error though RL).

Over time, learn the model of the world and build it to predict what might happen next (learning).

Uses this mental model to try things out in its “head” without having to actually do them in the real world (planning).

If something happens, react immediately based on what it has already learned – no pause to plan every time (quick reaction).

Image Credit: Dyna original paper

A later study from 2018, called “The Effect of Planning Shape on Dyna-style Planning in High-dimensional State Spaces”, tested Dyna in the Arcade Learning Environment, which is a collection of Atari 2600 games used to train AI agents from raw pixel images. It showed, for the first time, that a learned model can help improve learning efficiency in environments with high-dimensional inputs, like Atari games, and suggested that Dyna is viable planning method.

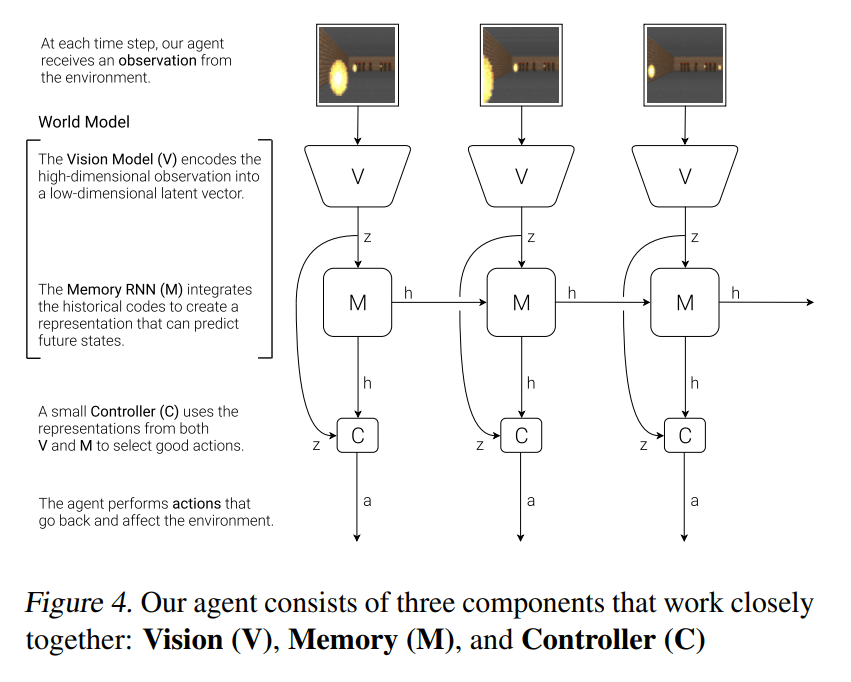

A key milestone was the 2018 paper “World Models” by David Ha and Jürgen Schmidhuber. They built a system that actually works on simple environments. They trained a generative recurrent neural network (RNN) to model popular RL environments, like a car racing game and a 2D first-person shooter-like game, in an unsupervised manner. Their world model learned a compressed spatial representation of the game screen and temporal dynamics of how the game evolves. More precisely, this system consists of three parts:

Vision: Variational Autoencoder (VAE) compresses high-dimensional observations (pixel images) into a lower-dimensional latent representation.

Memory: Mixture-Density Recurrent Network (MDN-RNN) predicts the next latent state given the current latent and the agent’s action.

Controller: Takes the latent state and RNN hidden state and outputs actions. In the original implementation, it was a simple linear policy trained with an evolutionary strategy to maximize reward.

Image Credit: World Models original paper

Ha and Schmidhuber showed that a policy (controller) could be trained entirely within the learned model’s “dream” and then successfully transferred to the real game environment. It was a stepping stone to building smarter agents that can dream, plan, and act just like humans and sparkled an interest in model-based approaches.

A lot has changes since then. What do we have today? How do latest world models work? Do they understand the physical world? Let’s explore.

What’s necessary today to build a world model?

We open this article for everyone to read. If you want to be the first to receive the full articles with detailed explanations and curated resources directly in your inbox →

To sum up, world models are generative AI systems that learn internal representations of real-world environments, including their physics, spatial dynamics, and causal relationships (at least, the basic ones), from diverse input data. They use these learned representations to predict future states, simulate sequences of actions internally, and support sophisticated planning and decision-making without needing continuous real-world experimentation.

NVIDIA has highlighted the following components for building world models:

Data curation: It is essential for smooth training of world models, especially with large, multimodal datasets. It includes filtering, annotating, classifying, and removing duplicate images or videos to ensure data quality. In video processing, this begins with splitting and transcoding clips, then applying quality filters. Vision-language models annotate key elements, and video embeddings help identify and remove redundant content.

Tokenization: Breaks down high-dimensional visual data into smaller, manageable units to accelerate learning. It reduces pixel-level redundancies and creates compact, semantic tokens for efficient training and inference.

Discrete tokenization represents visuals as integers.

Continuous tokenization uses continuous vectors.

Fine-tuning: Foundation models, trained on large datasets, can be adapted for specific physical AI tasks. Developers either build models from scratch or fine-tune pre-trained ones using additional data. Fine-tuning makes models more effective for robotics, automation, and other real-world use cases.

Unsupervised fine-tuning uses unlabeled data for broader generalization.

Supervised fine-tuning leverages labeled data to focus on specific tasks, enhancing reasoning and pattern recognition.

Reinforcement Learning (RL): It trains reasoning models by letting them learn through interaction, receiving rewards or penalties for actions. This approach helps AI adapt, plan, and improve decision-making over time. RL is particularly useful for robotics and autonomous systems that require complex reasoning and response capabilities in dynamic environments.

A recent comprehensive survey “Advances and Challenges in Foundation Agents” (an amazing paper!) summarizes 4 general ways to build AI world models:

Implicit models: These use one big neural network to predict future outcomes without separating how the world changes and how it's observed. These frameworks lets agents “dream” up future actions using compressed images and predictions.

Explicit models: These clearly separate how the world changes (state transitions) from what the agent sees (observations). This makes systems more interpretable and better for debugging.

Simulator-based models: Instead of learning from scratch, these models use a simulator or real-world environment to test actions and outcomes. This is very accurate but can be slow and costly.

Hybrid and instruction-driven models: These combine learned models with external rules, manuals, or language models. This mix of neural predictions and rule-based guidance makes models more flexible in new situations.

For now, let’s put this into practice and look at recent examples of world models that we have today.

Was this email forwarded to you? Forward it also to a friend or a colleague! Sign up

Notable world models

Google DeepMind’s Dreamer

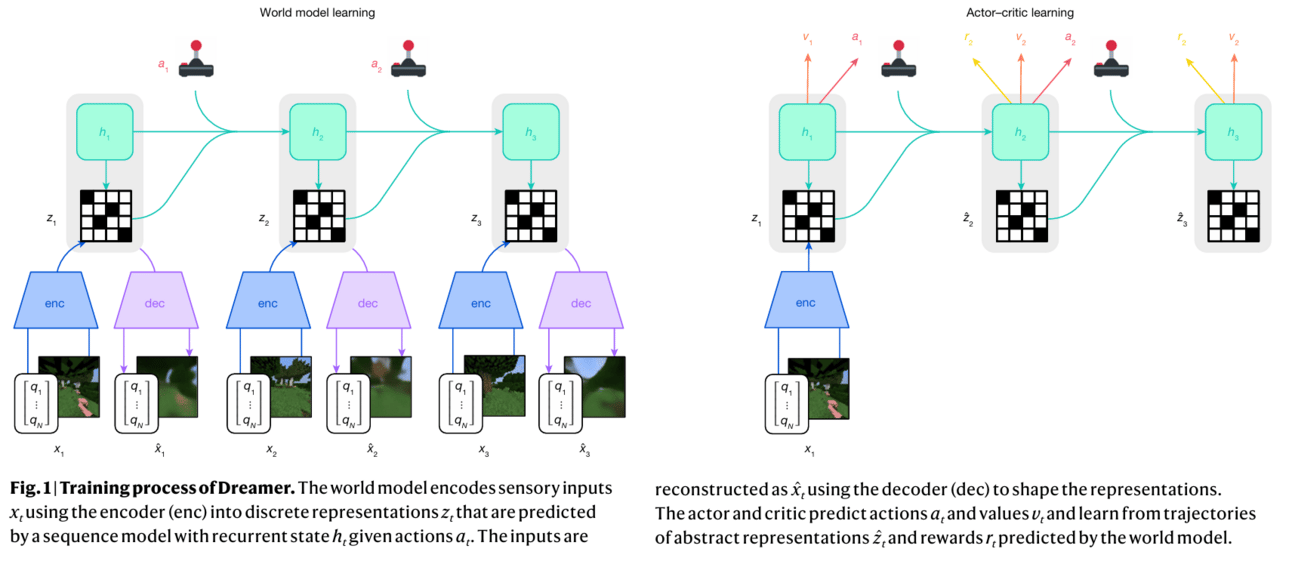

Perhaps one of the most influential series of works came from Danijar Hafner and colleagues from Google DeepMind, who created the Dreamer family of agents. The latest version (April 2025) of this general-purpose RL algorithm, DreamerV3, can handle over 150 different tasks using the same settings, without needing to be adjusted for each one. However, the biggest thing is that it’s the first algorithm to collect diamonds in Minecraft from scratch, without any help from human examples, using only its own “imagination” and default settings. And it’s not only the achievement of RL – it’s the achievement of a world model, as well. DreamerV3 learns model of the world and uses it to imagine what might happen next to figure out how to act better. Here is how this system exactly works →

DreamerV3 consists of 3 parts:

Image Credit: DreamerV3 original paper

World Model – takes what the agent sees, like images or numerical inputs, and compresses them into simpler latent representations using a recurrent neural network (RNN), specifically a recurrent state-space model (RSSM). This helps the model maintain memory of past events and better predict future states. Given an action, the model predicts the next state, the expected reward, and whether the episode continues. (Note: Unlike many recent AI architectures, DreamerV3 does not use Transformers, focusing instead entirely on recurrent models.)

DreamerV3 introduces several smart enhancements here:

KL divergence measures how predictions differ from reality – acting like a "reality check." If the predictions are off, the model adjusts itself accordingly.

Free bits help prevent the model from overcorrecting minor inaccuracies. Think of it as saying: "If it's already good enough, don't waste effort making it perfect."

Symlog encoding compresses large positive and negative real-world signals (such as rewards and pixel values) into manageable numeric ranges, helping the system learn steadily.

Two-hot encoding spreads learning targets across two adjacent categories, smoothing out predictions and making the learning process easier and more stable.

Critic – evaluates how good or bad the outcomes imagined by the world model are. Since rewards might vary dramatically, DreamerV3 uses careful normalization and distribution-based scoring, ensuring stable performance even with sparse or unpredictable rewards. It also employs a moving average of parameters to further stabilize learning.

Actor – decides the best action based on insights provided by the world model and the critic, balancing immediate rewards and exploration of new strategies to avoid getting stuck. DreamerV3 carefully normalizes predicted returns, maintaining balanced exploration even when rewards are rare.

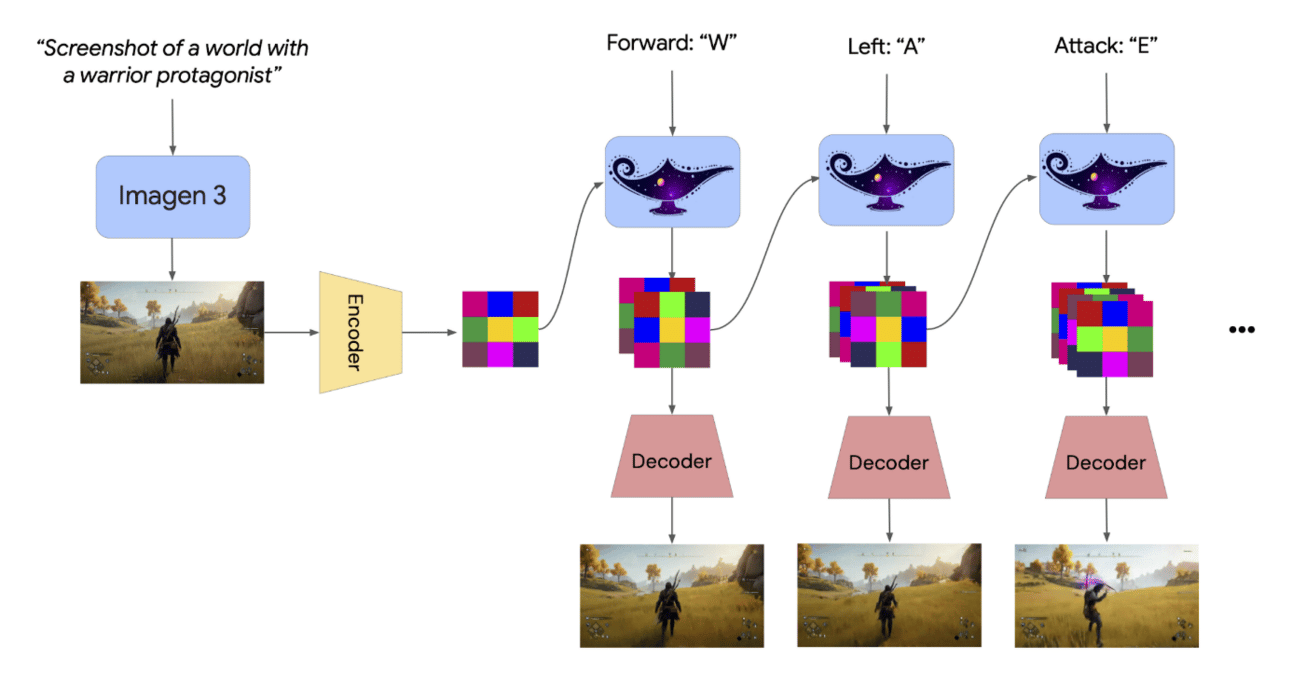

Google DeepMind’s Genie 2

Another interesting example of Google DeepMind’s developments in the world models field is Genie 2 that generates diverse training environments for embodied agents. From a single image prompt, Genie 2 creates playable virtual worlds controllable via keyboard and mouse usable by both humans and AI systems. It supports long-horizon memory, consistent world generation, and counterfactual simulation from a shared starting point. The model demonstrates emergent capabilities, such as:

Handling character movement

Simulating physical dynamics (gravity, lighting, reflections)

Modeling interactions with objects and non-player characters (NPCs)

Paired with agents like SIMA, Genie 2 generates new 3D scenarios to test instruction-following, enabling agents to navigate and act in novel environments using natural language commands.

Image Credit: Genie 2 blog

What is inside Genie 2 that helps it to achieve this?

Genie 2 is an autoregressive latent diffusion model trained on a large video dataset and generating video frame by frame. The process looks like this:

Genie 2 uses an autoencoder to compress video frames into latent space.

Transformer-based autoregressive model predicts the next latent frame based on previous ones and the agent’s actions.

A latent diffusion process is applied to refine and generate realistic video frames from the latent predictions.

Decoding the latents into visual frames.

Image credit: Genie 2 blog

This architecture allows Genie 2 operate in lower-dimensional latent space, respond to user or agent inputs over time and generate realistic and consistent video output. So it offers the potential for building general-purpose systems capable of adapting to a wide range of tasks in complex virtual worlds.

NVIDIA’s Сosmos World Foundation Models

Its hard to underestimate NVIDIA’s contribution to the world models. Their commitment to Physical AI moves their focus to making entire modular ecosystem, called Cosmos World Foundation Model (WFM) Platform designed to train, simulate, and apply video-based world models for Physical AI. We covered how the entire Cosmos platform works in one of our previous episodes in detail, but since that time, more information about NVIDIA Сosmos World Foundation Models has emerged. So let’s also look at them more precisely.

This platform includes three main model families, each playing a distinct but complementary role in enabling rich visual world understanding, simulation, and reasoning.

Cosmos-Predict1:

It simulates how the visual world evolves over time. It learns general physical world dynamics from 100M+ video clips and can be fine-tuned on specific tasks with smaller datasets for controllability via text, actions, or camera input. There are two types of models:

Diffusion models (like Cosmos-Predict1-7B-Text2World): Generate videos from text by denoising noise in latent space.

Autoregressive models (for example, Cosmos-Predict1-13B-Video2World): Generate videos token-by-token from prior context, similar to GPT.

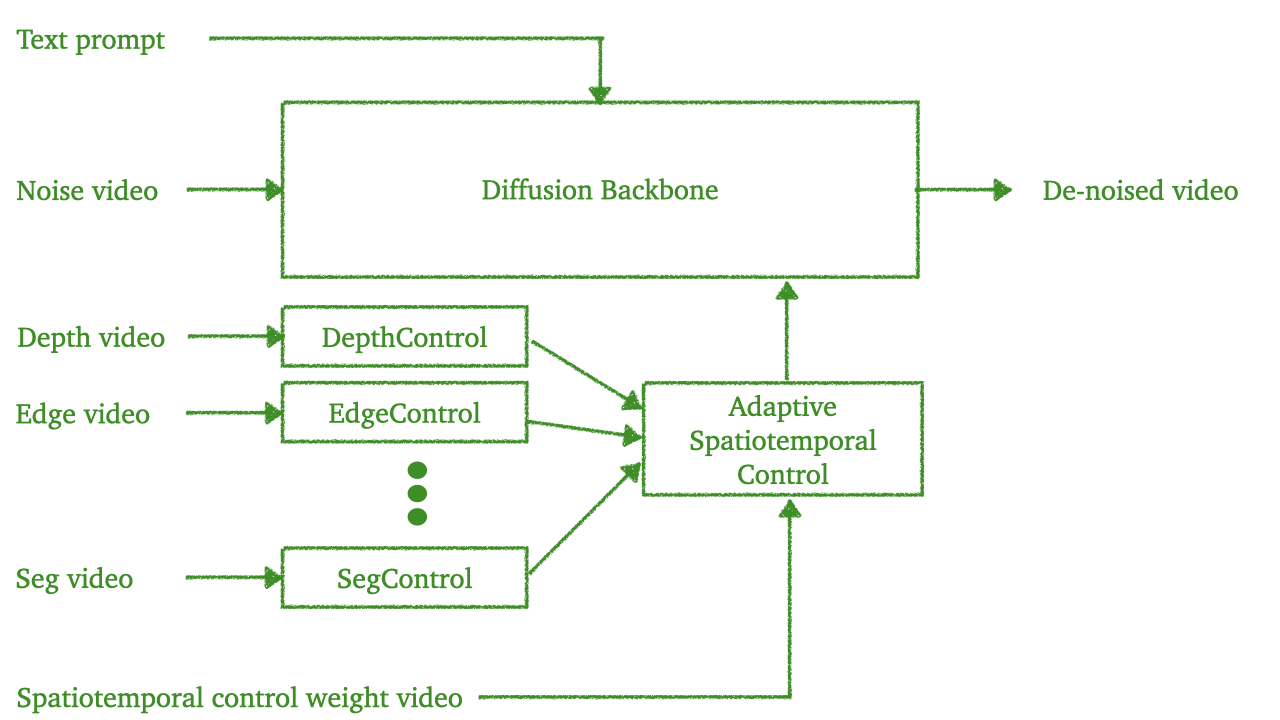

Cosmos-Transfer1:

Built directly on top of Cosmos-Predict1, it extends it with powerful adaptive multimodal control. Cosmos-Transfer1 allows users to guide world generation using multiple spatial control signals, such as segmentation maps, depth maps, edge maps, blurred visual inputs, HD Maps and LiDAR data.

To effectively handle different inputs NVIDIA adds separate ControlNet branches per modality, like one for depth, one for edges, etc. These control branches are trained independently for memory efficiency and flexibility. It also allows fine-grained control – for example, emphasize edge in foreground for object detail or depth in background for geometry.

Cosmos-Transfer1 uses spatiotemporal control maps to assign weights dynamically to different inputs across space and time.

As a result, Cosmos-Transfer1 can generate 5-second 720p videos in under 5 seconds, achieving real-time inference.

Image Credit: Cosmos-Transfer1 GitHub

Cosmos-Reason1:

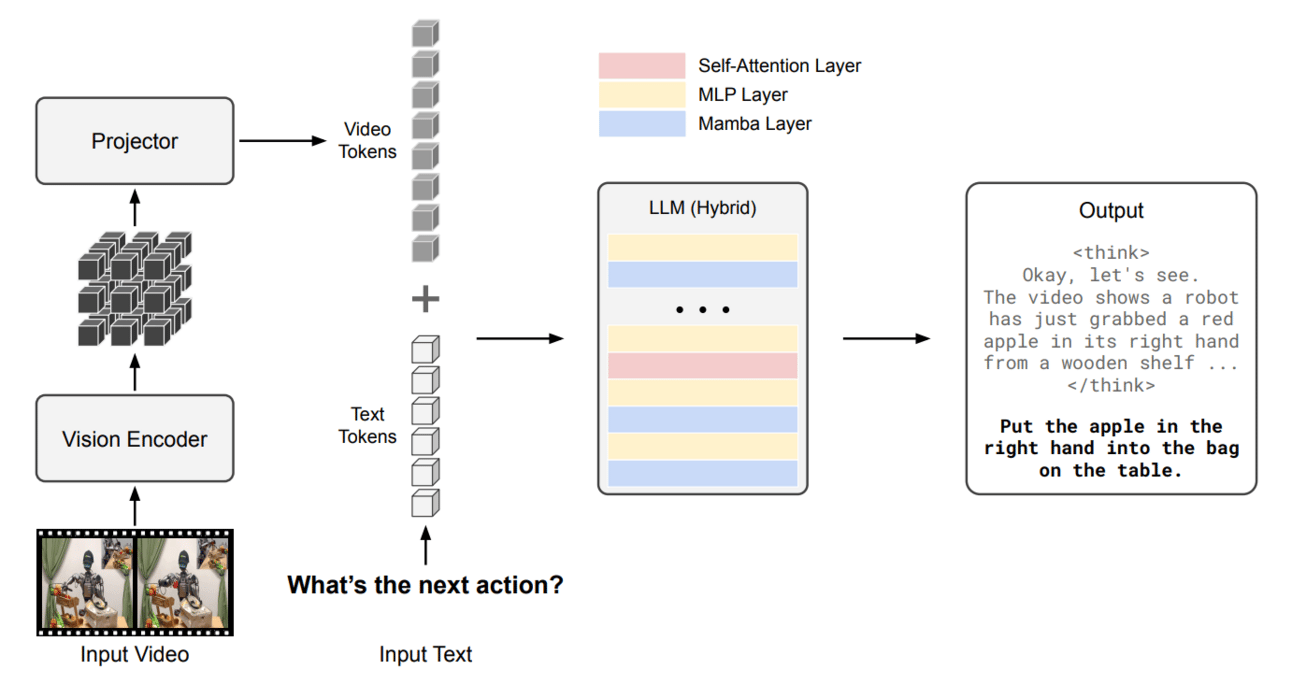

This family of models (available in 8B and 56B parameter sizes) reason about what is happening, what will happen next, and what actions are feasible, grounded in real-world physics and environmental dynamics. Cosmos-Reason1 uses simulated worlds from Predict1 and refined visuals from Transfer1 to make informed decisions, completing the loop for NVIDIA’s Physical AI systems. It centers around 2 reasoning pillars:

Physical common sense: General knowledge about space, time, object permanence, physics, etc.

Embodied reasoning: Agent-based decision-making under physical constraints (robot, human, autonomous vehicle).

Interestingly, Cosmos-Reason1 uses a hybrid Mamba-MLP-Transformer optimized for long-sequence reasoning.

Why did they put the different architectures together?

Well, they are used here because: 1) Mamba excels at capturing long-range dependencies – this improves efficiency; 2) Transformer blocks provide full self-attention, which is crucial for short-range dependencies and high-level abstraction, adding precision; 3) And finally the MLP (Multi-Layer Perceptron) layers provide strong non-linear transformations between Mamba and Transformer layers. They help stabilize learning and serve as bottlenecks for information integration, especially across modalities (video + text) – it’s made for flexibility. Brilliant.

Image Credit: Cosmos-Reason1 original paper

As output, Cosmos-Reason1 generates natural language with CoT explanations and final actions like one that you can see in the image above.

Overall, Cosmos-Predict1, Cosmos-Transfer1, and Cosmos-Reason1 form an integrated foundation for Physical AI: Predict1 simulates realistic world dynamics, Transfer1 enables fine-grained controllable video generation across modalities, and Reason1 interprets and reasons over the physical world to make embodied decisions. Together, they create a unified pipeline that powers intelligent agents to see, generate, and reason about complex real-world environments.

The last but not the least on our list is a world model from another AI giant – Meta.

Meta and Navigation World Model (NWM)

What is firstly notable about Meta and world models is that its Chief AI Scientist, Yann LeCun, is championing world models. He argues that the path to human-level AI in the next decade will rely on developing world models that enable reasoning and planning.

So FAIR at Meta also shifts to developing world models to faster unlock their full perspectives. One of them is Navigation World Model (NWM) created together with New York University and Berkeley AI Research.

Navigation is a key skill for intelligent agents – especially those that can see and move, like robots or virtual assistants in a game. Here, NWM is like a smart video generator that can imagine what the agent will see next, based on where it has been and where it wants to go. It can simulate possible movement paths and check if they reach a goal. Fixed rules are in the past for NWM – it can adjust its plans based on new instructions or constraints.

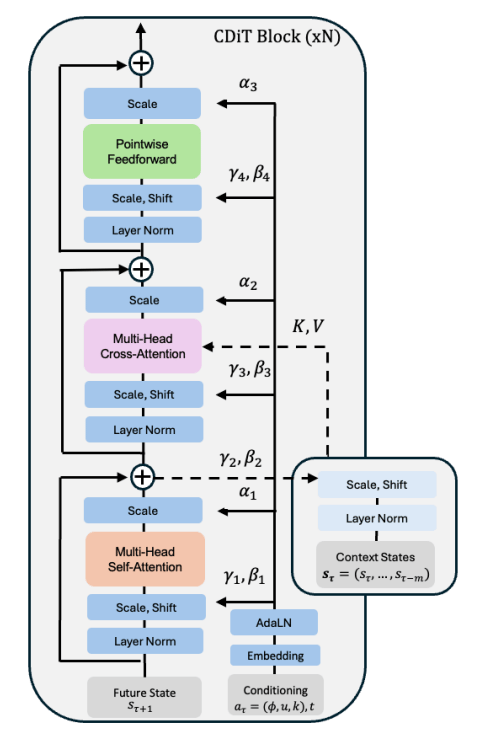

At its core, NWM uses a powerful Conditional Diffusion Transformer (CDiT). CDiT follows a diffusion-based learning process, but it improves on standard diffusion transformers (like DiT) by significantly reducing attention complexity. CDiT uses cross-attention, not self-attention across all tokens, which allows it to scale to longer context windows and larger models (up to 1 billion parameters), with 4× fewer FLOPs compared to DiT.

Image Credit: Navigation World Model original paper

What are other key benefits of NWM for navigation?

It’s trained on a huge set of first-person videos from both humans and robots.

Once trained, it can plan new routes by simulating and checking which ones reach the goal.

The model is quite large (about 1 billion parameters) which gives it the ability to understand complex scenes.

NWM can even handle new environments — by using just one image as a reference, it can imagine what a full navigation route might look like.

All these aspects makes NWM a flexible and forward-looking tool for building smart navigation systems.

Conclusion: Why are world models important?

We’ve covered many advanced world models, such as Google DeepMind’s DreamerV3 and Genie 2, three NVIDIA Cosmos WFMs, and Meta’s Navigation World Model, each with different backbones and working principles. There is even more to discuss in this field. While much has already been accomplished, it’s only the beginning for world models. For example, we’re eagerly anticipating what else these giants and also Fei-Fei Li’s World Labs will invent to unlock the full potential of such models and spatial intelligence. However, this will definitely take time. We would even say that the development stage of world models is somewhat similar to that of agents. This is also because, for physical AI, they can’t exist without each other.

The main summary question that we can answer now is: Why are world models important?

They unlock several key capabilities in AI:

Planning and decision making: With a world model, an agent can plan by “imagining” future state sequences for different action strategies and selecting the best plan. This is the essence of model-based reinforcement learning, enabling far-sighted decision making and planning many steps ahead.

Efficiency: Learning by trial-and-error in the real world (or a simulator) can be expensive or slow. A world model allows the agent to learn from simulated experience (a kind of “mental practice”), which can dramatically reduce the needed real-world interactions.

Generalization and flexibility: A good world model captures general properties of the environment, which can help an agent adapt to novel situations. By understanding the underlying dynamics, the agent can handle conditions it never explicitly saw in training, by reasoning in its model.

As world models can ingest far more raw information, such as video streams, than language models can, they can potentially provide a richer grounding in reality.

Towards general intelligence: World models are viewed by many researchers as a stepping stone to more general AI cognition. They give AI systems a form of “imagination” and intuitive understanding of the world’s workings – prerequisites for human-like common sense, reasoning, and problem-solving.

“We need machines that understand the world; machines that can remember things, that have intuition, have common sense, things that can reason and plan to the same level as humans.”

What world models might still be missing is the integration of Causal AI. We'll cover this fascinating topic – still largely academic or niche-industry focused, but nonetheless crucial for achieving AGI – in the next episode of AI 101.

Sources and further reading

Resources for further reading:

Dyna, an Integrated Architecture for Learning, Planning, and Reacting by Richard S. Sutton (paper)

World Models by David Ha and Jürgen Schmidhuber (paper)

Mastering Diverse Control Tasks through World Models (project page)

World Foundation Models (NVIDIA blog)

Navigation World Models (paper)

NVIDIA Isaac GR00T (NVIDIA blog)

Turing Post resources