Froth on the Daydream (FOD) – our weekly summary of over 150 AI newsletters. We connect the dots and cut through the froth, bringing you a comprehensive picture of the ever-evolving AI landscape. Stay tuned for clarity amidst the surrealism and experimentation.

Today, we discuss the fascinating and unexpected developments brought to us by Reinforcement Learning (RL), a balanced approach to AI and new ethics from the Vatican, the real dangers of Large Language Models (LLMs), and celebrate a phenomenal week of fundraising and acquisitions. Dive in!

Reinforcement learning is back, and we find ourselves with zero understanding of what to expect

Two interviews published last week have left me with the feeling that a new era of unpredictable moves by AI is on the horizon. From a problem-solving perspective, it's truly exciting. I still remember listening to a podcast a few years ago about the match between Lee Sedol and AlphaGo. The shock that ensued at the 19th stone (the famous move 37) affected not only the commentators and DeepMind researchers but also Lee Sedol himself. No one had anticipated that a machine could make such a creative and truly unique move.

From TheSequence’s interview with Daniel J. Mankowitz, a research scientist at DeepMind, we learn how AlphaDev made an unexpected discovery: a new sorting algorithm. The miracle behind this lies with AlphaZero, a reinforcement learning (RL) model that defeated world champions in games like Go, chess, and shogi.

"When we first began the AlphaDev project, we decided to try and learn a sort3 algorithm (i.e., sorting 3 elements) from scratch. This meant that AlphaDev started with an empty buffer and had to iteratively build an algorithm that could sort three elements from the ground up. This is an incredibly challenging task, as AlphaDev had to efficiently explore a vast space of algorithms. In fact, there are more possible algorithms that can be built from scratch, even for sorting three elements, than there are atoms in the universe!

"We visually analyzed the state-of-the-art assembly code for sort3 and couldn't find any way to improve it. We were convinced that AlphaDev could not further enhance this algorithm and would likely reach the human benchmark. However, to our surprise, it discovered a sort3 algorithm from scratch that had one less assembly instruction."

So, AlphaDev delved into the realm where most humans don't venture: the computer's assembly instructions. This unexpected and creative approach yielded remarkable results.
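To make "a sort3 algorithm" concrete, here is a minimal Python sketch of a fixed-length sort built from a fixed sequence of compare-and-swap steps. This is only an illustration and not AlphaDev's discovered routine, which works at the level of assembly instructions; the function names `compare_exchange`, `sort3`, and `is_correct_sort3` are our own hypothetical choices.

```python
from itertools import product


def compare_exchange(values, i, j):
    """Swap values[i] and values[j] if they are out of order."""
    if values[i] > values[j]:
        values[i], values[j] = values[j], values[i]


def sort3(a, b, c):
    """Sort three elements with a fixed sequence of three compare-exchanges."""
    values = [a, b, c]
    compare_exchange(values, 0, 1)
    compare_exchange(values, 1, 2)
    compare_exchange(values, 0, 1)
    return tuple(values)


def is_correct_sort3(fn):
    """Exhaustively check fn on every triple drawn from {0, 1, 2}, duplicates included."""
    return all(
        fn(*triple) == tuple(sorted(triple))
        for triple in product(range(3), repeat=3)
    )
```

Because the input length is fixed, correctness can be checked exhaustively, as `is_correct_sort3` does. Any shorter candidate sequence of steps would have to pass that same kind of check, which hints at why shaving even one instruction off a well-studied routine is notable.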

Now, let's see what Demis Hassabis said about Gemini, a new AI system that combines typical LLMs like GPT with techniques used in AlphaGo, “aiming to give the system new capabilities such as planning or the ability to solve problems.” The resurgence of RL is undoubtedly a fascinating development. What other unpredictable results are in store for us?

Weaving in some calming notes

Before panicking and making any strong moves, let’s apply an engineer’s mindset, as suggested by Marc Andreessen in this interview with Stratechery:

“Okay, look, this is technology, we implement this the same way we implement every other form of technology. We do it step by step”.

As you might understand, he is against drama around any potential apocalypse. Surprisingly, support for this stance comes from people whose narrative is often built on apocalypse. But this time, they are urging people to be present and suggesting actionable steps. The Vatican (yup, the Vatican), in partnership with Santa Clara University’s Markkula Center for Applied Ethics, has released a handbook on the ethics of AI. The handbook, titled "Ethics in the Age of Disruptive Technologies: An Operational Roadmap", is intended to guide the tech industry through ethical considerations in AI, machine learning, encryption, tracking, and more. The initiative is led by a new organization called the Institute for Technology, Ethics, and Culture (ITEC). The handbook advocates building values, organized around a set of principles, into technology and the companies that develop it from the start. It breaks down one anchor principle for companies, ensuring that "Our actions are for the Common Good of Humanity and the Environment", into seven guidelines and 46 specific actionable steps:

You shall have no other gods before me.

Thou shalt not make unto thee any graven images.

Just kidding. They actually are: 1. Respect for Human Dignity and Rights 2. Promote Human Well-Being 3. Invest in Humanity 4. Promote Justice, Access, Diversity, Equity, and Inclusion 5. Recognize that Earth is for All Life 6. Maintain Accountability 7. Promote Transparency and Explainability.

As mentioned in the roadmap, all these guiding principles serve the common good.

Well, once we define the common good, we might be able to address common sense.

All these advancements in AI and the accelerating speed of development keep us thinking: What exactly do we want AI for?

Maybe when we understand that, it will be easier to set the rules to control it. Naive, I know.

On another calming note, a guest article in VentureBeat argues that even without trust in AI, there is no need for fear: “Enterprises need to recognize the need to approach generative AI with caution, just as they’ve had to do with other emerging technologies.”

Other five cents to provoke your thinking

Musk, Twitter, and AI

The news about Elon Musk implementing paywalls for reading tweets to combat data scraping by AI startups has another side: he recently launched X.AI Corp, an AI company, and has been recruiting researchers with the aim of creating a rival effort to OpenAI. This new company will require extensive data. Musk already has one of the largest driving datasets through Tesla, and Twitter provides an additional advantage. If there is or should be regulation, it should focus on data access and on establishing a barrier between social networks and LLMs.

Detection and detection biases

In an article by Nathan Lambert in Interconnects, the growing concern of generating and spreading disinformation through large language models (LLMs) is discussed. Lambert highlights the ease of generating harmful content and the challenges in moderating and distributing it. He suggests that LLMs can be fine-tuned to bypass moderation filters and exploit vulnerabilities like predictable passwords. The article also emphasizes the need for improved tools to detect AI-generated text and underscores the crucial role of cybersecurity researchers in addressing these issues.

This leads us to a peculiar article from AI Weirdness, which demonstrates that current AI detectors are biased against non-native speakers, often resulting in false positives. People are lame too: a new study reveals that people are more likely to believe disinformation generated by GPT-3 than disinformation written by humans. In the study, participants were 3% less likely to identify false tweets generated by AI compared to false tweets written by humans.

Misinformation as a real danger

One of the major challenges associated with AI is the problem of misinformation. Eric Schmidt, former CEO of Google, identifies misinformation surrounding the 2024 elections as a significant short-term danger of AI. Despite efforts by social media companies to address false information generated by AI, Schmidt believes that a viable solution has yet to be found.

The Verge adds to the discussion around information, "The changes AI is currently causing are just the latest in a long struggle in the web's history. This battle revolves around information – its creators, accessibility, and monetization. However, familiarity with the fight does not diminish its importance, nor does it guarantee that the resulting system will be better than the current one. The new web is struggling to emerge, and the decisions we make now will shape its growth."

In response to that article, The Platformer expresses concern, "The abundance of AI-generated text will make it increasingly difficult to find meaningful content amidst the noise. Early results suggest that these fears are justified, and soon everyone on the internet, regardless of their profession, may find themselves expending greater effort in search of signs of intelligent life."

As they say, "garbage in, garbage out," and as we've mentioned before, "What we truly need are trusted sources and good old-fashioned fact-checking. Journalism, especially in an era where creating information, photos, and videos has become so effortless, should play a critical role."

Money approval for open-source ML and other financial stories

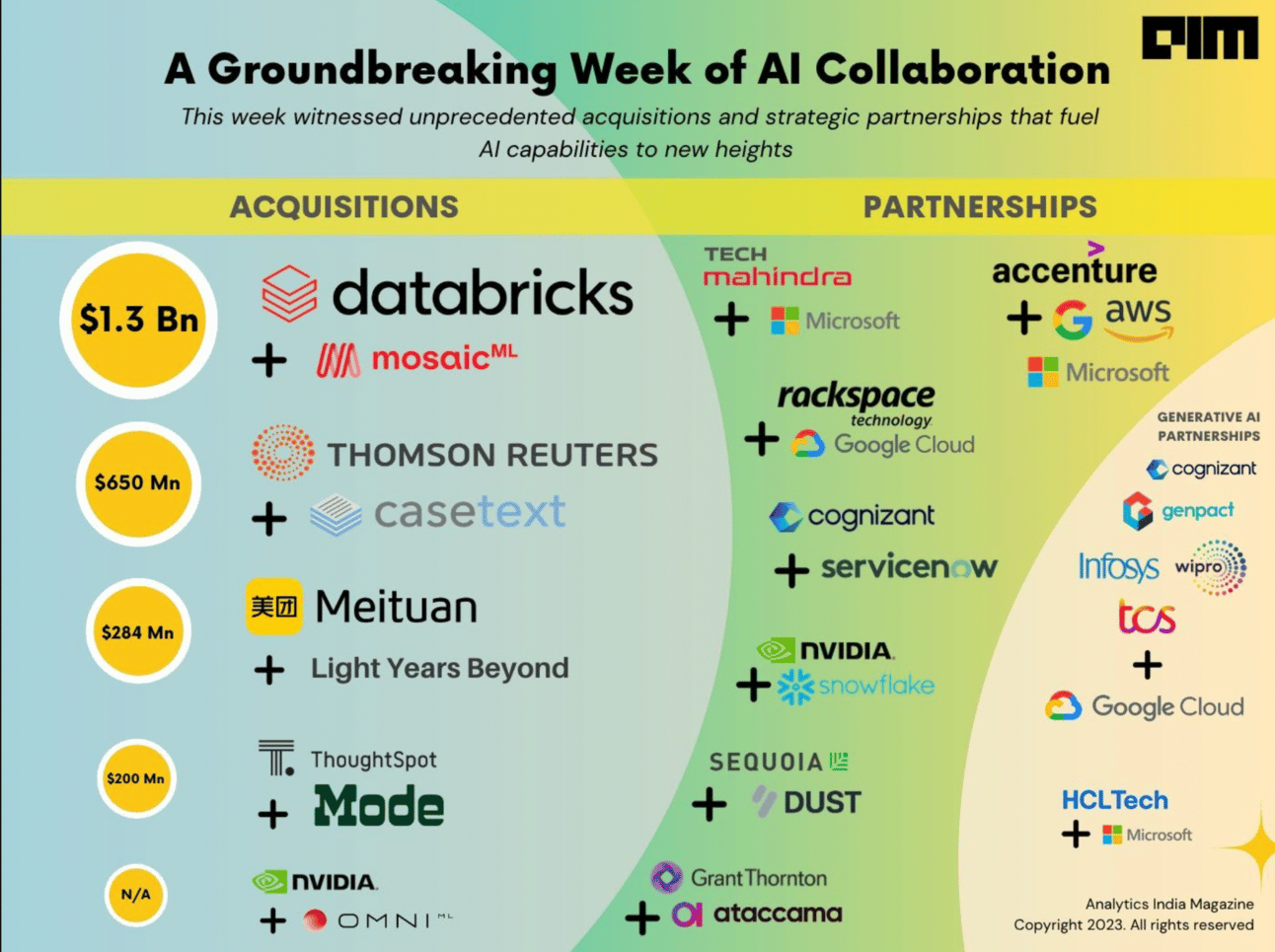

The groundbreaking surge in AI mergers, acquisitions, and funding rounds has set a blistering pace for the second half of 2023, catapulting generative AI to the forefront of the tech sector. Tech giants like Databricks, Snowflake, and Nvidia have been making strategic moves to establish their dominance in the generative AI landscape.

Data and AI firm Databricks recently announced: “We’re over the moon to announce we’ve agreed to acquire MosaicML”, and the $1.3B acquisition followed. Together, the companies aim to democratize AI by enabling businesses to build their own language models using proprietary data. The move was praised by AlphaSignal and TheSequence as a significant milestone for the open-source community.

Simultaneously, Snowflake has been riding high with its recent acquisitions of Neeva and Streamlit. As part of its strategic plan to enhance data searchability and app development, Snowflake has also partnered with Nvidia. This partnership simplifies the deployment of generative AI applications, bridging the gap between data infrastructure and AI processing.

The pace is rapid not just for acquisitions and collaborations. Unprecedented funding rounds for generative AI startups indicate a robust market. Chatbot startup Inflection secured the same staggering amount, $1.3B in funding, marking the fourth-largest fundraising round in AI. Other startups like Runway, Typeface, Celestial AI, and Light Years Beyond also raised significant funding, propelling the total to over $14 billion in the first five months of 2023, compared to $4.8 billion for the entirety of 2022.

However, these sky-high valuations also raise concerns about a potential bubble in the AI sector. Despite investor skepticism, the $650M cash purchase of Casetext by Thomson Reuters serves as credible evidence that established companies see real value in generative AI.

As The Newcomer puts it, “For anyone who was arguing that building on top of ChatGPT can’t create great businesses — Casetext is a sign to think again. For Thomson Reuters — a competitor to my old employer Bloomberg and to legal data companies like LexisNexis — Casetext’s technology and experience working with ChatGPT could give it a real edge over the incumbents in legal research technology.”

AI spending trends are aligned with these developments. A recent CNBC survey revealed that nearly half of the companies regard AI as their top priority for tech spending, with AI budgets more than double the second-largest spending area: cloud computing.

However, the question remains: Can the AI sector sustain this gold rush without hitting a bubble? And how will the increasing reliance on AI impact job creation and cybersecurity? As the second half of 2023 unfolds, these are questions the tech sector must grapple with.

What are we reading

Not only human brains can be an inspiration for AI research: How honey bees make fast and accurate decisions

What 5 years at Reddit taught us about building for a highly opinionated user base – nothing to do with AI (at least until this scheme becomes an algorithm) but intensely interesting as internet communities are such an important part of data creation.

The Pentagon is facing challenges in rapidly adopting artificial intelligence (AI) and other high-tech capabilities, which is surprising given the rich history of AI support from the Department of Defense.

Baidu's Ernie Bot: surpassing ChatGPT (3.5) in comprehensive ability scores and outperforming GPT-4 in several Chinese language capabilities, as reported by China Science Daily.

Truly fascinating

DreamDiffusion generates high-quality images directly from brain electroencephalogram (EEG) signals, without the need to translate thoughts into text.

Human motion, which is often interpreted as a form of body language, exhibits a semantic coupling similar to human language. This implies that language modeling can be applied to both motion and text in a unified manner, treating human motion as a distinct form of language.

Other research

LMFlow: An open-source toolkit for fine-tuning large foundation models.

LeanDojo: LLMs Generating Mathematical Proofs using proof assistants

vLLM: A Game-Changer for LLM Inference and Serving.

Preference Ranking Optimization (PRO) for aligning LLMs with human values.

In simulated driving tests, the Unified Autonomous Driving (UniAD) algorithm outperformed other autonomous systems by 20% to 30% across various parameters.

Towards Language Models That Can See: Computer Vision Through the LENS of Natural Language.

SoundStorm – a model for efficient, non-autoregressive audio generation.

Salesforce introduces XGen-7B: a new 7B 8k context window LLM achieving comparable or better results with SOTA open-source LLMs.

Merlyn Mind provides a generative AI experience that retrieves content from a user-selected curriculum, resulting in an engagement that is curriculum-aligned, hallucination-resistant, and age-appropriate.

Our concern

Atmospheric Carbon Dioxide Tagged by Source – take a look at NASA’s website for the full visualization.

Thank you for reading. Please feel free to share with your friends and colleagues. Every referral will eventually lead to some great gifts 🤍