If Turing Post is part of your weekly routine, please share it with one smart friend. It’s the simplest way to keep the Monday digests free.

This Week in Turing Post:

Wednesday / AI 101 series: Nemotron 3 and the surprising coalition building new AI in the open

Friday / Series: Wrapping up the open-source series (the one that didn’t quite happen…)

From our partners: How to Cut the Trust Tax of Evaluating AI Agents at Scale [Free Guide]

Evaluating agents with external LLMs looks affordable. Until your agent traffic grows. Each scored trace means another external API call, another bill. The hidden costs add up fast. And to manage them, AI teams turn to sampling, even when the missed traces are the ones that matter most: jailbreak attempts, hallucinations, policy violations.

This guide breaks down the drawbacks of external LLM evaluations. See how batteries-included Fiddler Trust Models deliver in-environment evaluation without external API costs, so enterprise teams can evaluate every trace without sampling while cutting total cost of ownership (TCO).

Main topic: NVIDIA and its transformational power

What you are about to see is a fight between a professional reporter, a trend-watching analyst, and an excited tech nerd. But let’s let the reporter win for a second.

The San Jose Museum of Art is a cozy little place you can get through in about an hour. At the entrance, two days before GTC, the cashier tells me, “If you come tomorrow, it will be free. For the whole week.”

“Why?” I ask.

NVIDIA rented out the whole downtown and opened the museum to everyone.

Nice metaphor right there, right?

Walking through downtown San Jose, you can see: NVIDIA is everywhere. Green balloons at an Irish pub welcoming GTC. Buildings across downtown reserved, to one degree or another, for conference events. Hotel rooms promising to hold themselves to NVIDIA standards. It’s almost intimidating how thoroughly NVIDIA’s presence permeates everything. But at the same time, as with the museum, they also make a lot of things more accessible.

This year, more than 30,000 attendees from over 190 countries are gathering in San Jose for NVIDIA’s annual conference, anchored by Jensen Huang’s keynote and more than 1,000 sessions across the event. The obvious story is that NVIDIA has become enormous. The more interesting story is that, as NVIDIA representatives repeated many times, it is also the only company in the world working with every other AI company. It is, quite literally, everywhere.

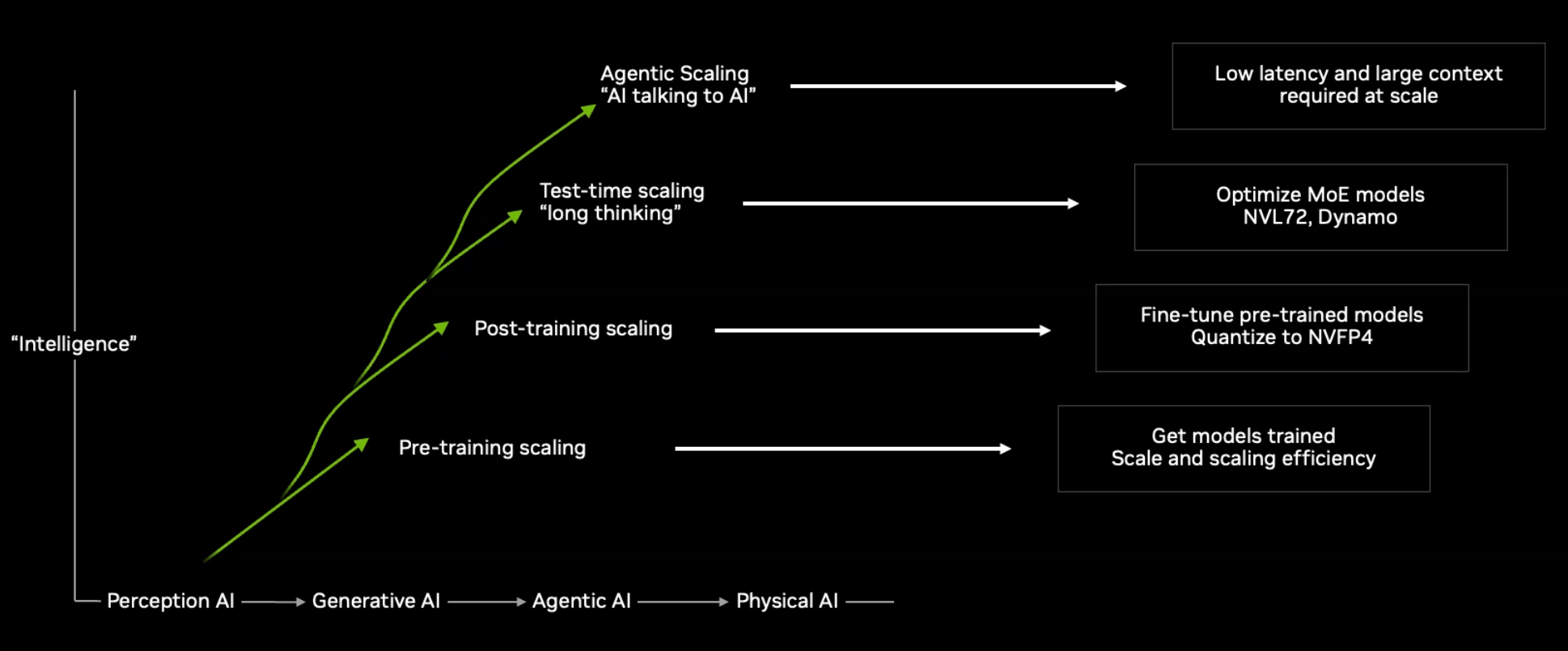

And now NVIDIA wants to change the scaling laws.

For the last two years, the industry has obsessed over pretraining, post-training, and more recently test-time scaling. Now NVIDIA wants to add a fourth law: agentic scaling. In plain English, that means AI systems that do not simply answer and stop. They call tools, write code, search, check files, spawn sub-agents, hold long context, and interact with other AIs.

That kind of workload creates very different infrastructure pressure. It cares about latency, memory movement, storage paths, and coordination with a kind of intensity that classic chatbot inference never demanded.

Image Credit: NVIDIA

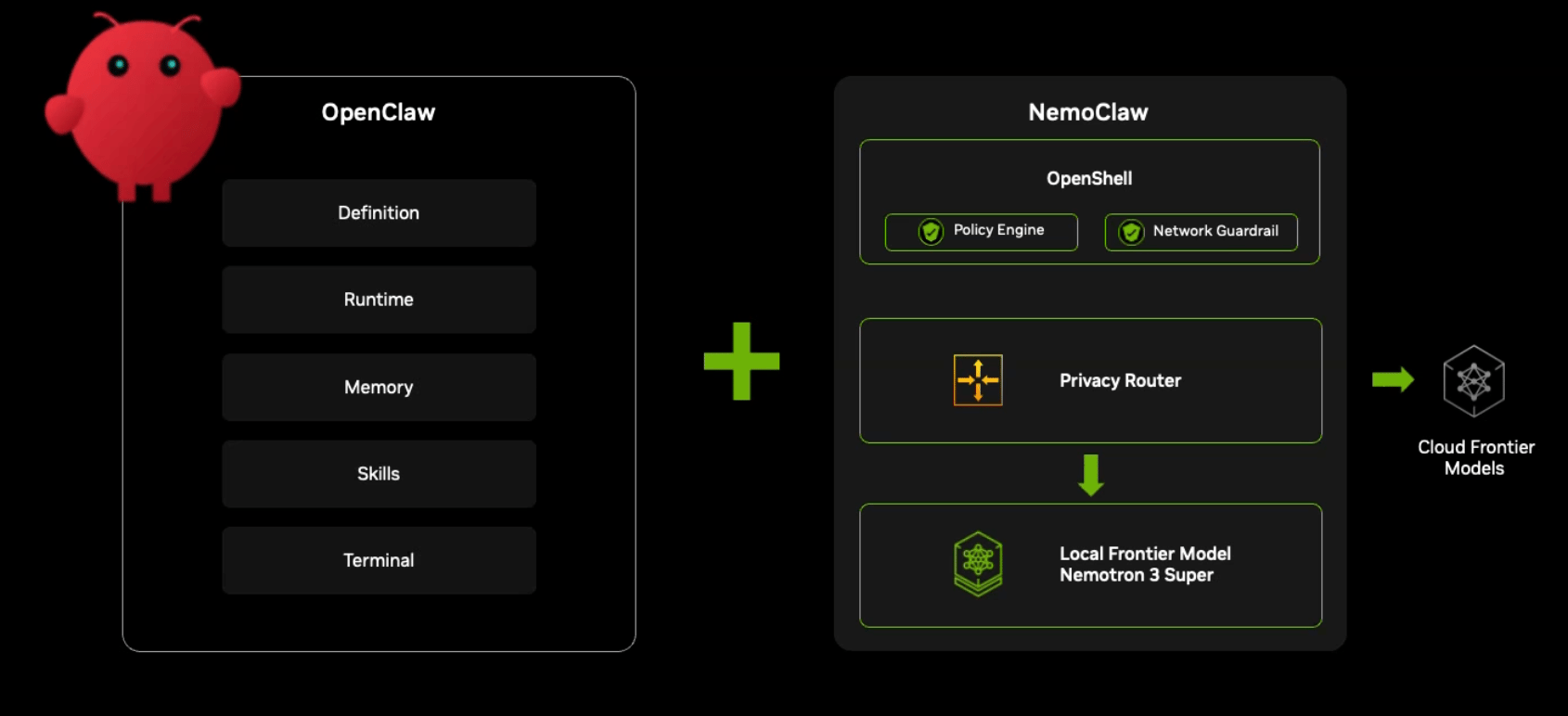

And with this new law come new players. One of the biggest announcements hiding inside all this infrastructure talk is NemoClaw. That may sound odd at a conference famous for giant racks and giant token-per-second numbers. But strategically, it may matter as much as any new piece of hardware. Plus, Jensen just really loves OpenClaw: “It exceeded what Linux did in 30 years!” “Everyone needs OpenClaw strategy!” “Enterprise IT Renaissance from SaaS to Agent-as-a-Service!”

Image Credit: NVIDIA

NemoClaw is NVIDIA’s contribution to the emerging OpenClaw ecosystem – a framework for long-running autonomous agents. The idea is simple: install OpenClaw together with Nemotron models and OpenShell, NVIDIA’s new security runtime, in a single command. In other words, NVIDIA is no longer aiming only to power the model. It wants to sit under the agent itself.

This is where everything from the keynote connects.

Agentic systems are messy. They run for hours. They call tools, execute code, search databases, access files, and coordinate with other models. That workload stresses hardware very differently than a simple chatbot response. It requires fast inference, low latency, persistent memory, security guardrails, and orchestration layers that keep the system coherent.

That is exactly why NVIDIA spent so much time talking about agentic scaling.

The new hardware stack – including the Vera Rubin platform and the GPU + LPU rack – is designed to push token generation and reasoning throughput to the level where agents can operate continuously. In other words, the data center is being redesigned for agents (Vera Rubin, according to Jensen Huang, will likely ship in the second half of the year). I recorded a video explaining how they integrated the LPU and what results they were able to achieve (it’s quite phenomenal):

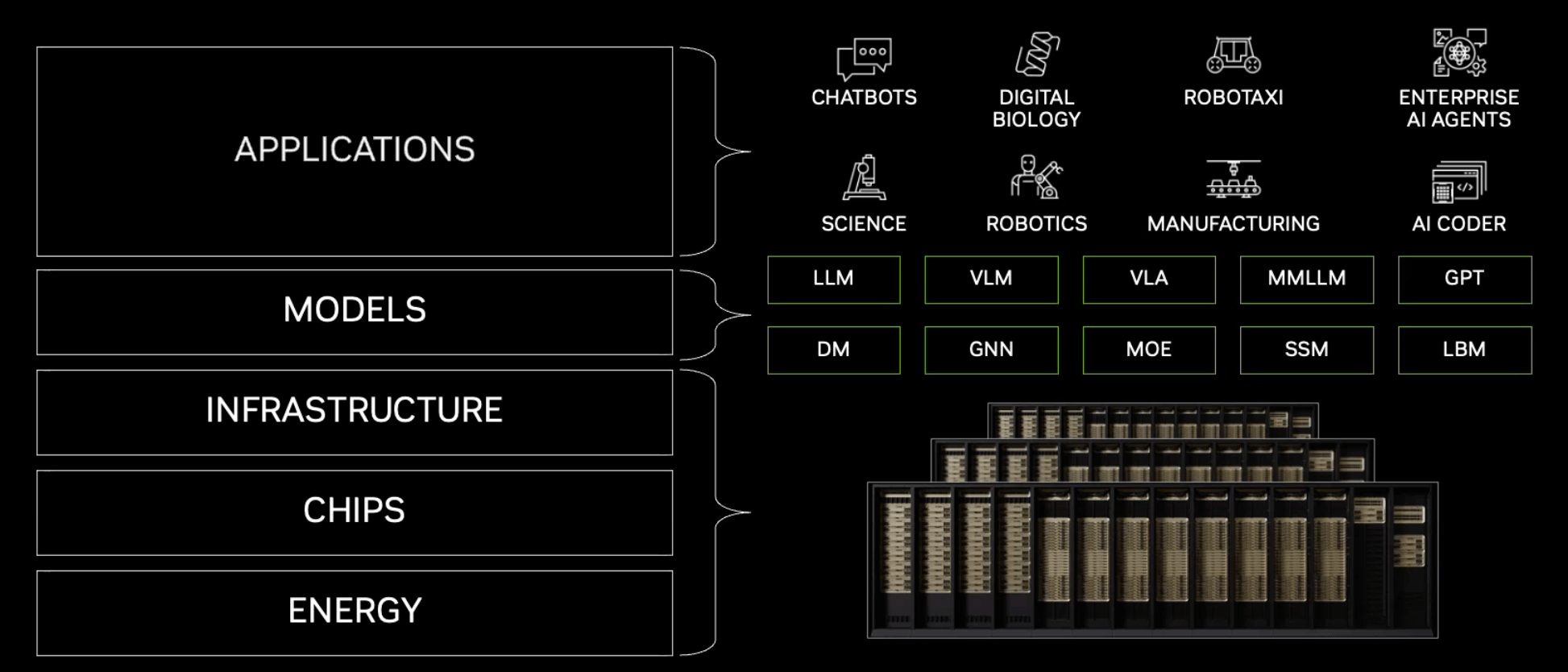

For a while now, NVIDIA has been widening its role far beyond semiconductors. It has been assembling the infrastructure foundation of the AI ecosystem. At GTC, that picture becomes much clearer.

The company is now present at almost every layer of the AI stack: chips, networking, storage, runtime software, models, simulation systems, robotics platforms, and developer tooling. NVIDIA is no longer presenting a sequence of clever models or faster GPUs. It is presenting a theory of AI infrastructure – energy, silicon, networking, storage, models, software, robots, telecom, and data centers stitched into one production system. Jensen called NVIDIA “a vertically integrated computing company with open horizontal integration with the world.”

Image Credit: NVIDIA

Just as interesting is the move toward an open ecosystem. So sneaky.

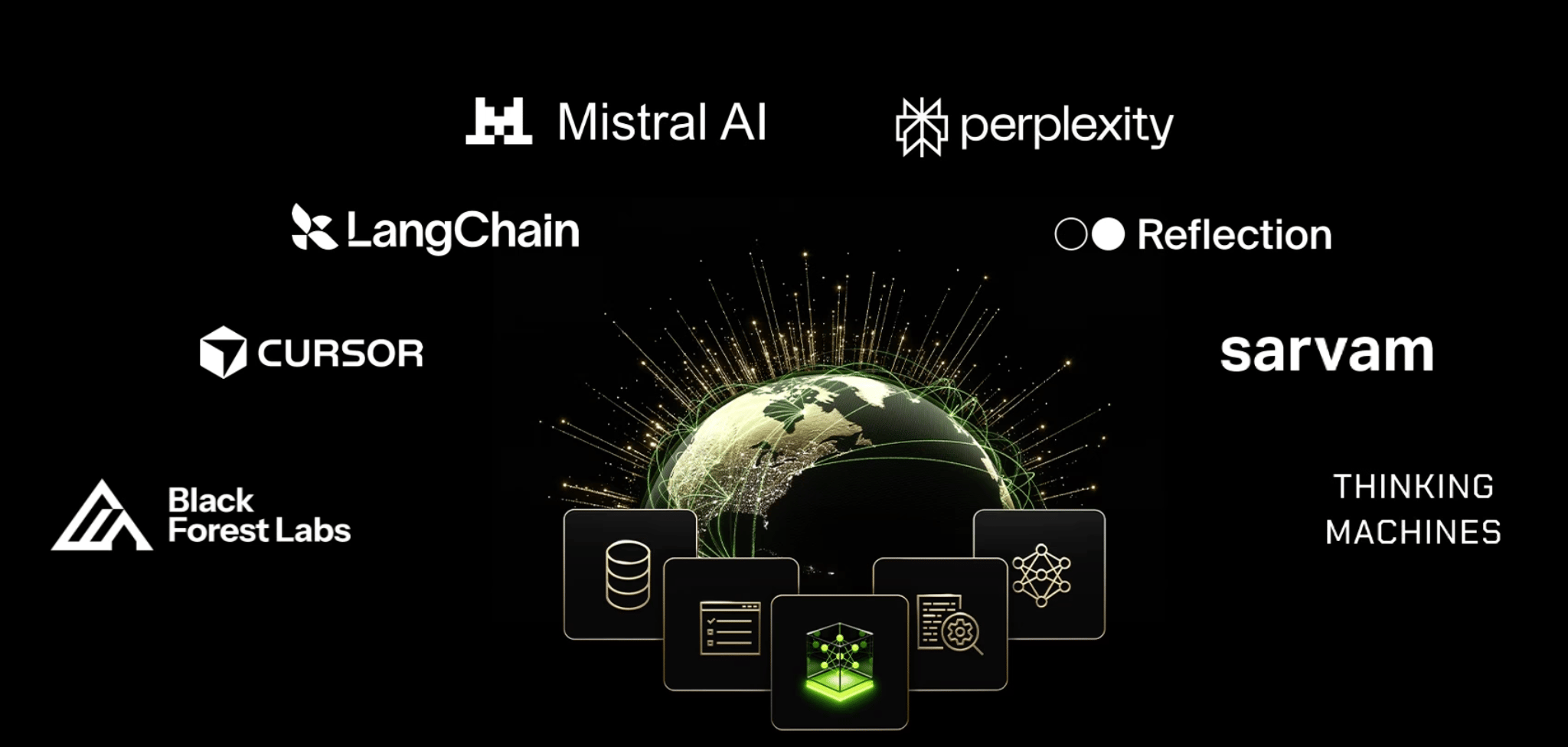

Alongside Nemotron 3, NVIDIA announced a coalition of AI companies – including players like Cursor, LangChain, Perplexity, Reflection AI, Thinking Machines and others – contributing expertise in data, evaluation, and development while NVIDIA provides the training infrastructure. Instead of every lab trying to build frontier models alone, the idea is to build open foundations collaboratively and then specialize them.

It is a very different model from the closed-lab approach that defined the last two years. This topic itself is super interesting and worth closer examination. You will receive our deep dive about the Nemotron coalition this Wednesday. (Upgrade to receive it in full.)

And then there is the physical world and Physical AI, with robotics promising to become the largest and most valuable industry transformation of them all.

NVIDIA is already the biggest robotics company without building its own robots. Soon it may become the biggest autonomous vehicle platform without building a single car. From robotics simulation to edge AI networks and autonomous driving models like Alpamayo, the same infrastructure logic now extends beyond the data center into factories, vehicles, and cities. Alpamayo is also worth discussing in more detail, and we will do that through an actual test-drive lens in my next Attention Span episode. Stay tuned.

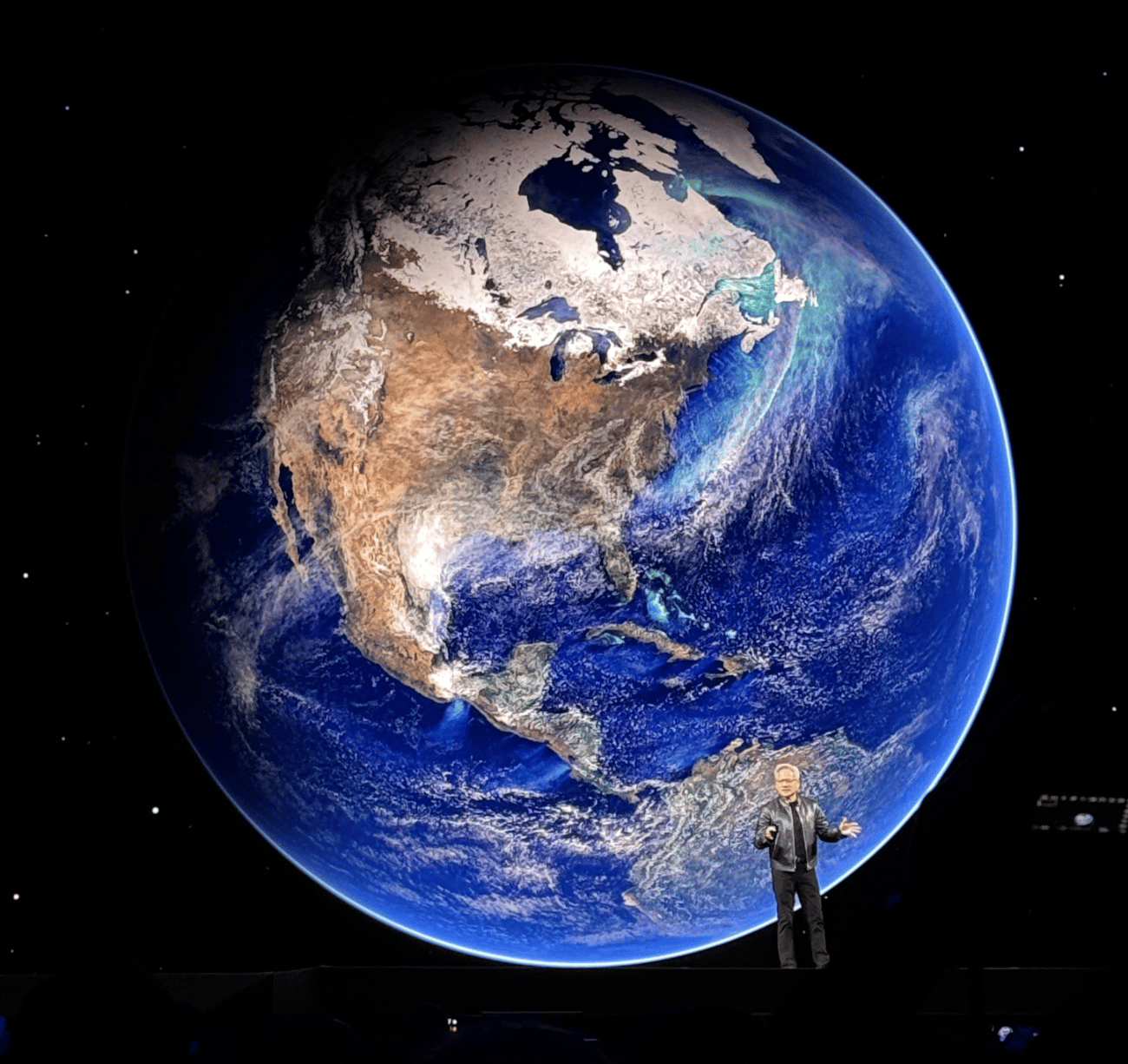

And one more thing caught my attention at GTC. NVIDIA, as if to prove just how omnipresent it has become, is going beyond Earth too.

One of the more surreal announcements was NVIDIA’s push toward space-based AI infrastructure. The company talked about space-optimized Vera Rubin modules and about turning orbital systems into real-time computing platforms for sensing, autonomy, and decision-making. Even if that takes years to matter commercially, the symbolism is hard to miss: NVIDIA is rooting its infrastructure story in every possible direction – cloud, edge, desktop, robotics, telecom, automotive, and now space.

As Jensen said at the pre-game show: AI is making us much busier.

I have the same feeling.

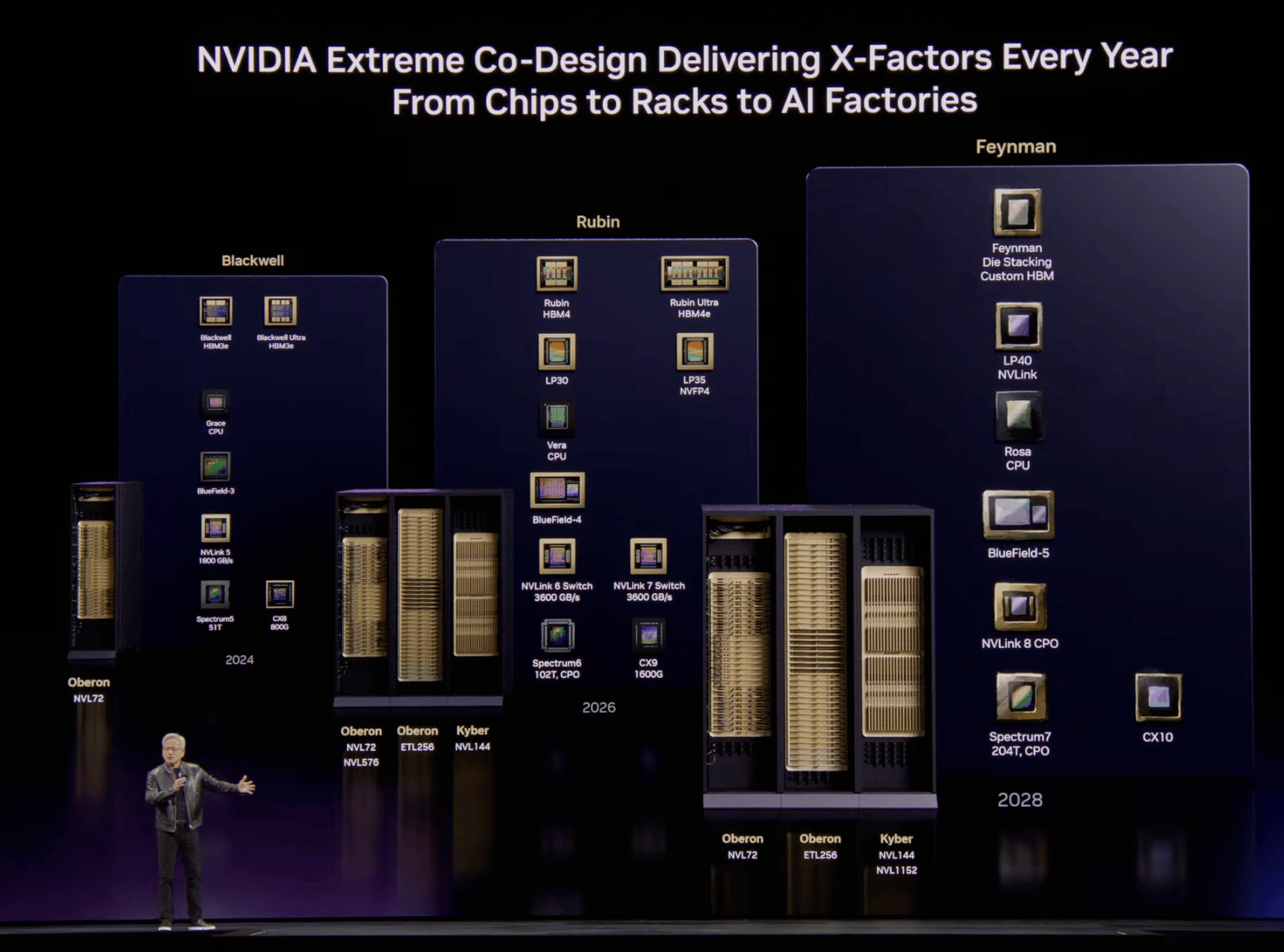

NVIDIA’s strategy in one picture

And their plan for 2026-2028 (all the press releases are available here):

Our news digest is always free. Click on the partner’s link above to support us or upgrade to receive our deep dives in full, directly into your inbox. Join Premium members from top companies like NVIDIA, Hugging Face, Microsoft, Google, a16z, etc., plus AI labs such as Ai2, MIT, Berkeley, .gov, and thousands of others to really understand what’s going on with AI →

Disclosure: I attended NVIDIA GTC as part of the “Creators” group. NVIDIA covered hotel accommodations.

We are reading/watching:

LLM Architecture Gallery – an incredible collection of models’ architectures created by Sebastian Raschka

Five categories of world models by Zhuokai Zhao

World Models: The old, the new and the wishful by Subbarao Kambhampati

News from the usual suspects

👀👀👀

CoreWeave – Bigger Brains, Colder Servers

CoreWeave unveiled support for NVIDIA’s HGX B300 (Blackwell Ultra) at GTC, packing 2.1 TB of HBM3e memory and faster InfiniBand to feed the growing appetite of agentic AI. The pitch: move from training models to running fleets of self-improving agents in production.

Nebius & Meta – A $27B Vote of Confidence

Nebius just secured a hefty endorsement from Meta: a five-year AI infrastructure deal worth up to $27 billion. The partnership centers on early deployments of NVIDIA’s Vera Rubin platform, with Nebius providing dedicated capacity starting in 2027.

Microsoft – The Foundry of the AI Industrial Age

Microsoft doubles down on its AI ambitions, unveiling expanded Foundry capabilities, tighter NVIDIA integration, and infrastructure built for reasoning-heavy workloads. With next-gen Vera Rubin chips, agentic AI tooling, and a push into “Physical AI,” Redmond is positioning itself as the factory floor of the AI economy.

Runway – The Avatar Leaves the Chatbox

Runway has launched Characters, a real-time video agent API that turns a single image into a fully conversational avatar, powered by its GWM-1 world model.

Anthropic – Context Goes Supersized

Anthropic has stretched Claude Sonnet 4’s memory to a hefty 1 million tokens – enough to ingest entire codebases, dense research libraries, or sprawling agent workflows in a single pass.

Google Maps – The Map Starts Talking Back

Google is giving Maps its biggest glow-up in years with two Gemini-powered additions: Ask Maps, a conversational layer for messy real-world queries, and Immersive Navigation, a richer 3D driving interface with more natural guidance.

Google – Embeddings Learn to See, Hear, and Read

Google has introduced Gemini Embedding 2, its first natively multimodal embedding model, mapping text, images, video, audio, and documents into one shared semantic space. That means cleaner multimodal search, retrieval, and classification without the usual patchwork pipeline.

Replit – Vibe Coding Gets Project Management

Replit introduced Agent 4, which is less a coding assistant than a small, tireless studio crew: it designs, builds, parallelizes tasks, and even spins up decks, animations, and mobile apps inside the same project.

🔦 Models Highlight

Gemini Embedding 2: Google’s first natively multimodal embedding model

Researchers from Google DeepMind introduced Gemini Embedding 2, a multimodal embedding model mapping text, images, video, audio, and documents into one vector space for retrieval and classification across 100+ languages. It accepts up to 8,192 text tokens, up to 6 images (PNG/JPEG), 120-second videos (MP4/MOV), audio without transcription, and PDFs of up to 6 pages. Using Matryoshka Representation Learning, embeddings scale down from 3072 dimensions; developers can reduce them to 1536 or 768. Paramount reported →read their blog
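For readers who have not used Matryoshka-style embeddings before, here is a minimal sketch of what "reducing dimensions" usually means in practice: keep the first N components of the full vector and re-normalize. This is the generic pattern, not Google's API. The helper name `truncate_embedding` is hypothetical, the vector is random stand-in data rather than a real Gemini embedding, and whether you need to re-normalize yourself when a hosted service returns a shortened vector is an assumption to check against the provider's documentation.

```python
import math
import random


def truncate_embedding(vec, dim):
    """Keep the first `dim` components and L2-normalize the result.

    Hypothetical helper for illustration; not part of any Gemini SDK.
    """
    if not 0 < dim <= len(vec):
        raise ValueError("dim must be between 1 and len(vec)")
    head = vec[:dim]
    norm = math.sqrt(sum(x * x for x in head))
    if norm == 0.0:
        # An all-zero prefix cannot be normalized; return it unchanged.
        return list(head)
    return [x / norm for x in head]


# Stand-in for a real 3072-dimensional embedding (random values, illustration only).
rng = random.Random(0)
full = [rng.gauss(0.0, 1.0) for _ in range(3072)]

for dim in (3072, 1536, 768):
    small = truncate_embedding(full, dim)
    length = math.sqrt(sum(x * x for x in small))
    print(dim, len(small), round(length, 6))
```

The trade-off is the usual one: shorter vectors cut storage and speed up similarity search, at some cost in retrieval quality that is worth measuring on your own data.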

Research this week

(as always, 🌟 indicates papers we recommend paying attention to)

What we see this week:

Agents that learn from use

New training signals beyond labels

Reasoning reliability and calibration

Multimodal agents and perception

Coding, debugging, and automated research

Better adaptation and representations

Faster, cheaper model computation

More controllable generation

Agents that learn by acting, using tools, and improving from interaction

🌟 OpenClaw-RL: Shows how a single policy can learn online from next-state signals across conversations, tools, terminals, GUIs, and SWE traces, which makes it interesting as a genuinely unified recipe for agents that improve simply by being used. → read the paper

🌟 Meta-Reinforcement Learning with Self-Reflection for Agentic Search: Trains search agents to adapt across episodes rather than inside a single attempt, which makes it interesting as a step toward agents that actually get smarter while searching instead of just trying harder. →read the paper

🌟 Agentic Critical Training: Trains agents to judge which action is better rather than merely imitate reflections, which makes it interesting because it treats self-reflection as a learned capability instead of a pasted-on script. →read the paper

🌟 Video-Based Reward Modeling for Computer-Use Agents: Evaluates agent success directly from execution video rather than internal traces, which makes it interesting as a model-agnostic reward signal for computer-use agents in the wild. →read the paper

In-Context Reinforcement Learning for Tool Use in Large Language Models: Replaces the usual supervised warm-start with an RL-only path to tool use, which makes it interesting as a cheaper and more scalable way to teach models to call tools correctly. →read the paper

XSKILL: Continual Learning from Experience and Skills in Multimodal Agents: Splits reusable agent knowledge into short experience-level guidance and higher-level skills, which makes it interesting as a practical memory design for agents that need to improve without weight updates. →read the paper

🌟 AutoResearch-RL: Perpetual Self-Evaluating Reinforcement Learning Agents for Autonomous Neural Architecture Discovery: Turns architecture search into an open-ended RL loop that keeps proposing, testing, and updating, which makes it interesting as a concrete version of automated research rather than the usual hand-wavy sci-fi trailer. →read the paper

Code-Space Response Oracles: Generating Interpretable Multi-Agent Policies with Large Language Models: Replaces black-box RL oracles with LLM-generated code policies inside a PSRO loop, which makes it interesting because it turns multi-agent strategy learning into interpretable program synthesis instead of opaque policy optimization. →read the paper

Automatic Generation of High-Performance RL Environments: Demonstrates that coding agents can translate and optimize RL environments with strong verification at very low cost, which makes it interesting because it moves agentic coding from toy demos into systems work that actually matters. →read the paper

Towards a Neural Debugger for Python: Turns execution-trace modeling into debugger-like control over stepping, breakpoints, and reverse reasoning, which makes it interesting as a possible world model for future coding agents. →read the paper

Understanding by Reconstruction: Reversing the Software Development Process for LLM Pretraining: Reconstructs the hidden planning and debugging trajectories behind repositories, which makes it interesting because it argues that code alone is the corpse and the real learning signal is the life that led to it. →read the paper

Better representations, adaptation, and training mechanics

🌟 Neural Thickets: Diverse Task Experts Are Dense Around Pretrained Weights: Argues that large pretrained models already sit inside neighborhoods full of useful task specialists, which makes it interesting because it hints that post-training may be as much about finding nearby experts as building them. →read the paper

🌟 Training Language Models via Neural Cellular Automata: Uses synthetic non-linguistic data as a pre-pretraining stage, which makes it interesting because it asks the deliciously annoying question of whether language is actually necessary for building useful representations. →read the paper

🌟 The Curse and Blessing of Mean Bias in FP4-Quantized LLM Training: Shows that a rank-one mean bias drives much of low-bit instability and can be removed cheaply, which makes it interesting because the fix is surprisingly simple for such an ugly hardware problem. →read the paper

🌟 Lost in Backpropagation: The LM Head is a Gradient Bottleneck: Argues that the language modeling head is an optimization bottleneck rather than only an expressivity bottleneck, which makes it interesting because it points to a very basic part of the stack that may be wasting enormous training signal. →read the paper

🌟 IndexCache: Accelerating Sparse Attention via Cross-Layer Index Reuse: Reuses sparse attention indices across layers to cut indexer cost, which makes it interesting because long-context agent workloads live or die on these serving optimizations. →read the paper

🌟 Attention Residuals: Replaces fixed residual connections with attention over previous layers so each layer can selectively retrieve earlier representations, which makes it interesting because it turns depth into an attention problem rather than a blind accumulation problem. →read the paper

🌟 REMIX: Reinforcement Routing for Mixtures of LoRAs in LLM Finetuning: Fixes router collapse in mixture-of-LoRAs by replacing learnable weights with reinforcement-based routing, which makes it interesting because it tries to make multiple adapters genuinely matter instead of pretending they do. →read the paper

LLM2VEC-GEN: Generative Embeddings from Large Language Models: Learns embeddings by representing the model’s likely response rather than just the input text, which makes it interesting because it closes the gap between semantic encoding and task-oriented retrieval. →read the paper

Reasoning, search, and reliability under uncertainty

🌟 Strategic Navigation or Stochastic Search? How Agents and Humans Reason Over Document Collections: Measures whether document agents are planning strategically or just brute-forcing their way through PDFs, which makes it interesting as a sharp benchmark for agentic document work. →read the paper

🌟 Thinking to Recall: How Reasoning Unlocks Parametric Knowledge in LLMs: Explains why reasoning can improve even single-hop factual recall, which makes it interesting because it reframes reasoning as a retrieval aid rather than only a logic tool. →read the paper

🌟 How Far Can Unsupervised RLVR Scale LLM Training?: Maps the limits of intrinsic reward signals and shows where they collapse, which makes it interesting as a reality check for anyone hoping unsupervised RLVR will scale forever on vibes alone. →read the paper

Decoupling Reasoning and Confidence: Resurrecting Calibration in Reinforcement Learning from Verifiable Rewards: Separates accuracy optimization from calibration optimization, which makes it interesting because it tackles the very real problem of models becoming confidently wrong after RLVR. →read the paper

🌟 Examining Reasoning LLMs-as-Judges in Non-Verifiable LLM Post-Training: Tests reasoning judges inside real policy training loops rather than static evals, which makes it interesting because it shows that stronger judges can also train better adversarial liars. Lovely. →read the paper

CREATE: Testing LLMs for Associative Creativity: Evaluates whether models can generate multiple specific and diverse conceptual bridges, which makes it interesting because it treats creativity as structured search rather than mystical smoke. →read the paper

Lost in Stories: Consistency Bugs in Long Story Generation by LLMs: Diagnoses where and how long-form narratives contradict themselves, which makes it interesting as a focused look at coherence failures that fluency benchmarks mostly ignore. →read the paper

RBTACT: Rebuttal as Supervision for Actionable Review Feedback Generation: Uses rebuttals as implicit supervision for generating more useful review comments, which makes it interesting because it learns from what feedback actually triggered change rather than what merely sounded smart. →read the paper

Multimodal systems for seeing, generating, remembering, and staying consistent

🌟 Reading, Not Thinking: Understanding and Bridging the Modality Gap When Text Becomes Pixels in Multimodal LLMs: Shows that many failures on visual text are really reading failures rather than reasoning failures, which makes it interesting because it pinpoints a concrete bottleneck behind a lot of multimodal underperformance. →read the paper

MM-Zero: Self-Evolving Multi-Model Vision Language Models From Zero Data: Builds a self-evolving multimodal loop with proposer, coder, and solver roles and no seed data, which makes it interesting as a serious attempt to push zero-data self-improvement beyond text-only models. →read the paper

InternVL-U: Democratizing Unified Multimodal Models for Understanding, Reasoning, Generation and Editing: Combines understanding, reasoning, generation, and editing in a lightweight unified model, which makes it interesting because it aims for a real multimodal all-rounder instead of a benchmark specialist. →read the paper

CoCo: Code as CoT for Text-to-Image Preview and Rare Concept Generation: Uses executable code to plan image structure before refinement, which makes it interesting because it replaces fuzzy verbal planning with something inspectable and actually testable. →read the paper

EndoCoT: Scaling Endogenous Chain-of-Thought Reasoning in Diffusion Models: Injects iterative reasoning directly into diffusion guidance, which makes it interesting because it treats visual generation as a process that can think progressively rather than just decode from a frozen prompt. →read the paper

ID-LoRA: Identity-Driven Audio-Video Personalization with In-Context LoRA: Personalizes voice and appearance jointly in one generative pass, which makes it interesting because it handles identity as a cross-modal problem instead of awkwardly gluing separate systems together. →read the paper

MA-EgoQA: Question Answering over Egocentric Videos from Multiple Embodied Agents: Introduces a benchmark for reasoning over several egocentric video streams at once, which makes it interesting because future embodied systems will need shared memory across agents, not just single-agent perception. →read the paper

PIRA-Bench: A Transition from Reactive GUI Agents to GUI-based Proactive Intent Recommendation Agents: Pushes GUI agents from following commands to anticipating intent from screen trajectories, which makes it interesting because proactivity is one of the big missing pieces in personal agent design. →read the paper

CAST: Modeling Visual State Transitions for Consistent Video Retrieval: Models state transitions rather than only local clip similarity, which makes it interesting because long-form video systems need continuity, not just semantically relevant fragments. →read the paper

Diffusion and generative model efficiency

🌟 Scale Space Diffusion: Replaces full-resolution denoising at highly noisy steps with a scale-space view, which makes it interesting because it questions one of diffusion’s most expensive habits and finds a cleaner way to spend compute. →read the paper

Geometric Autoencoder for Diffusion Models: Rebuilds latent diffusion around a more principled semantic latent space, which makes it interesting because better latents often matter more than one more clever sampler with a heroic name. →read the paper

That’s all for today. Thank you for reading! Please send this newsletter to colleagues if it can help them enhance their understanding of AI and stay ahead of the curve.