If Turing Post is part of your weekly routine, please share it with one smart friend. It’s the simplest way to keep the Monday digests free.

This Week in Turing Post:

Wednesday / AI 101 series: Beyond RL: The New Fine-Tuning Stack for LLMs

Friday / Interview: Michael Bolin, Codex and Open-Source

From our partners: AI Agents Introduce Risk. Modern Infrastructure Reduces It.

AI agents operate autonomously across infrastructure, but legacy identity systems were built for humans. Static credentials and excessive privilege create unnecessary risk. Teleport provides identity-based access, short-lived credentials, and policy enforcement designed to securely deploy and run AI agents in production.

Topic 1: Betting on World Models (updated with news about the AMI Labs raise)

In 2022, LeCun published A Path Towards Autonomous Machine Intelligence (AMI), describing a research program for building autonomous agents grounded in world models, planning, and interaction with environments. In a 2024 talk at Columbia, he said he disliked the term AGI and preferred “Advanced Machine Intelligence”, another AMI, adding that Meta had adopted the framing. Through 2025, Meta’s research messaging continued using the term, including presenting V-JEPA 2 as a step in that direction, and Reuters later described LeCun’s 2025 startup using the same language. In early 2026, a new paper co-authored by LeCun coined another acronym: SAI, Superhuman Adaptable Intelligence. Shortly afterward, AMI Labs announced a $1.03 billion raise to build world models, making it clear that AMI remains an active concept in his work.

So why does he need both?

What is SAI?

Official Definition: Superhuman Adaptable Intelligence (SAI) is capable of adapting to exceed humans at any task humans can do, while also being able to adapt to tasks outside the human domain that have utility.

Image Credit: The original paper

What is AMI?

AMI – Autonomous Machine Intelligence – is a research program for building AI systems that can understand the world, plan actions, and operate autonomously, rather than merely generating text or images from a prompt.

Image Credit: The original paper

AMI and SAI

AMI and SAI are not identical, but there is a clear continuity between them. AMI describes the path: autonomous agents built around world models, memory, planning, and interaction with environments. SAI describes a capability property such systems might eventually exhibit: adaptability across tasks, including those beyond human domains. In that sense, SAI reads less like a replacement for AMI and more like a broader framing layered on top of the same research agenda.

Seen this way, the shift looks more rhetorical than technical. The core program remains familiar, while the emphasis moves from autonomy, to advancement, to adaptability.

RL is still important, SSL is still foundational, and world models are still a serious bet, but none of them looks sufficient on its own. The direction now seems more practical: learn broad structure through self-supervision, sharpen behavior through reinforcement, use world models for planning, bring in memory for long-horizon adaptation, causal learning for interventions rather than mere correlations, and symbolic methods wherever correctness and exactness still matter. (This is not in the paper; it is how I see it.) The result is not one universal recipe but a stack of methods that specialize, transfer, and recombine. Which is probably for the best, because, as the paper notes, “the AI that folds our proteins should not be the AI that folds our laundry.”

And then there’s topic number two: Autoresearch by Andrej Karpathy (who totally bets on Transformers)

Our news digest is always free. Click on the partner’s link above to support us or upgrade to receive our deep dives in full, directly into your inbox. Join Premium members from top companies like Nvidia, Hugging Face, Microsoft, Google, a16z etc plus AI labs such as Ai2, MIT, Berkeley, .gov, and thousands of others to really understand what’s going on with AI →

We are reading/watching:

Chinese Open Source: A Definitive History by Interconnected

News from the usual suspects

Jetson gets a butler

NVIDIA’s Jetson AI Lab shows how to turn an AGX Thor or AGX Orin into a fully local OpenClaw assistant that chats through WhatsApp and runs without cloud APIs. The recipe is simple but ambitious: serve a tool-calling model with vLLM, install OpenClaw, link WhatsApp, and let the agent handle files, apps, and web tasks on-device. Handy and private.

OpenAI – The Workhorse Gets an Upgrade

OpenAI has unveiled GPT-5.4, positioning it as its most capable model for professional work. It merges strong reasoning, coding (thanks to GPT-5.3-Codex), and agent-style computer use into one system that can operate software, handle documents, and execute complex workflows. With up to 1M tokens of context and improved tool use, the model aims to spend less time chatting – and more time actually getting the job done.

Apple – A Design Reset in Cupertino

Apple has quietly reshuffled its leadership, elevating designers Molly Anderson (industrial design) and Steve Lemay (human interface) to the executive ranks. After years of criticism – from Vision Pro doubts to software missteps – the move signals a renewed emphasis on design. With John Ternus widely seen as Tim Cook’s eventual successor, a trio of hardware, software, and design leadership may redefine Apple’s identity. The $599 MacBook Neo already feels like the opening act.

Microsoft – Claude Enters the Cubicle

Microsoft is folding Anthropic’s Claude Cowork into Microsoft 365 Copilot under the name Copilot Cowork, giving enterprise users an agent that can build decks, wrangle spreadsheets, and send the meeting email nobody wanted to write. It’s a shrewd move: Cowork rattled the SaaS establishment when it launched, and Microsoft has decided that if a wave is big enough, better to surf it than watch from shore.

Alibaba – A Sudden Exit in the Qwen Lab

Alibaba’s Qwen AI project just lost a key architect. Junyang Lin, one of the most visible technical leaders behind the model family, stepped down days after the company launched its new Qwen 3.5 small multimodal models. The timing raised eyebrows across the AI community. With China racing to rival OpenAI, Google, and Anthropic, losing a central figure mid-momentum is… less than ideal.

💡 Benchmark Highlight

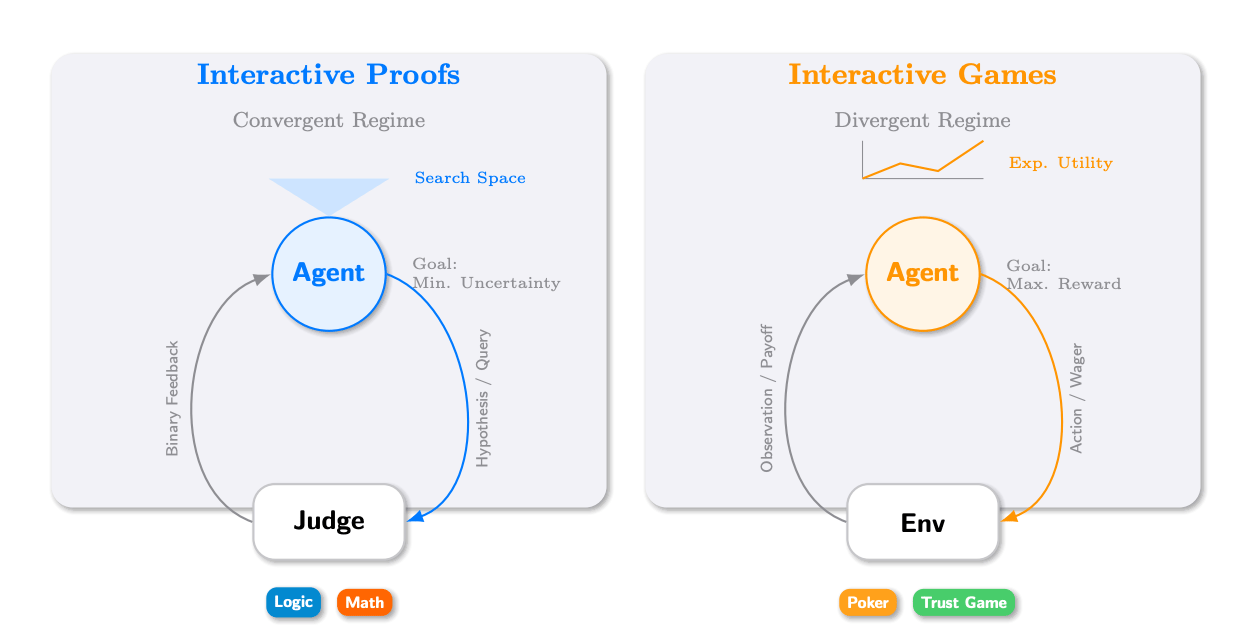

Interactive Benchmarks

Image Credit: The original paper

Researchers from Princeton University introduce Interactive Benchmarks, which evaluate LLM reasoning via budgeted multi-turn interaction instead of static datasets. The framework models agent–environment exchanges with query costs or discounted rewards. It covers two domains: Interactive Proofs, using a 46-instance Situation Puzzle set, and Interactive Games, including Texas Hold’em and Trust Game. On a 52-problem HLE math subset, interactive accuracy reaches 76.9% versus pass@k drops of 20–50%. Gemini-3-flash leads with 30.4% accuracy; GPT-5-mini follows. →read the paper
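To make the "query costs or discounted rewards" idea a bit more concrete, here is a minimal Python sketch of two ways a multi-turn episode could be scored. It is an illustration only, not the paper's formulation: the function names, the discount factor `gamma`, the 0-based turn index, and the flat per-query cost are all assumptions.

```python
def discounted_reward(solved_at_turn, gamma=0.9):
    """Reward shrinks with every extra turn (hypothetical scoring rule).

    solved_at_turn is a 0-based turn index, or None if never solved.
    """
    if solved_at_turn is None:
        return 0.0
    return gamma ** solved_at_turn


def budgeted_score(solved, queries_used, budget, cost_per_query=1.0):
    """Success only counts if the total query cost fits the budget."""
    spent = queries_used * cost_per_query
    return 1.0 if solved and spent <= budget else 0.0
```

Either rule rewards a model for asking fewer, better questions, which is the behavior a static dataset cannot measure.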

🔦 Models Highlight

Phi-4-reasoning-vision-15B technical report

Researchers from Microsoft Research developed Phi-4-reasoning-vision-15B, a compact open-weight multimodal model optimized for vision-language tasks, scientific reasoning, mathematics, and user-interface understanding. Careful architecture design and rigorous data curation allow competitive performance with substantially less training and inference compute. Systematic filtering, error correction, and synthetic augmentation improve data quality. High-resolution dynamic-resolution vision encoders boost perception, while mixed reasoning and non-reasoning datasets with explicit mode tokens enable fast answers or chain-of-thought reasoning →read the paper

Olmo hybrid: Combining transformers and linear RNNs for superior scaling

Researchers from Ai2 introduced Olmo Hybrid, a 7B hybrid LLM combining transformer attention with Gated DeltaNet linear RNN layers in a 3:1 pattern (75% DeltaNet). Pretrained on 6T tokens using 512 GPUs (H100 → B200), it achieves ~2× token efficiency: matching Olmo-3 accuracy on MMLU with 49% fewer tokens and parity on Common Crawl with 35% fewer. With DRoPE at 64k context, it scores 85.0 RULER vs 70.9 for Olmo-3 →read the paper

Dynamic chunking diffusion transformer

Researchers from AMD developed DC-DiT, a Diffusion Transformer that adaptively compresses image tokens using a learned encoder–router–decoder chunking system. Uniform regions receive fewer tokens while detail-rich areas receive more, with compression varying across diffusion timesteps. On class-conditional ImageNet 256×256, the model improves FID and Inception Score versus parameter- and FLOP-matched DiT baselines at 4× and 16× compression, enabling checkpoint upcycling and fewer training steps with lower compute cost →read the paper

Helios: Real real-time long video generation model

Researchers from Peking University and ByteDance introduced Helios, a 14B autoregressive diffusion video generator producing 19.5 FPS on a single NVIDIA H100 while supporting minute-scale videos. A unified representation enables T2V, I2V, and V2V tasks. Training simulates drifting failures to prevent long-video degradation and repetitive motion. Heavy compression of historical and noisy context plus fewer sampling steps yields compute comparable to 1.3B models while outperforming prior short- and long-video systems →read the paper
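For readers wondering what the "3:1 pattern (75% DeltaNet)" in the Olmo Hybrid item above looks like as a layer stack, here is a small Python sketch. It is a guess at the layout, not Ai2's code: the function name, the layer labels, the choice to put attention last in each group of four, and the 8-layer depth are all assumptions made for illustration.

```python
def hybrid_layer_pattern(num_layers, ratio=3):
    """Label layers so that every (ratio + 1)-th one is attention.

    With ratio=3 each group is three DeltaNet layers followed by one
    attention layer (placement within the group is an assumption).
    """
    return [
        "attention" if (i + 1) % (ratio + 1) == 0 else "deltanet"
        for i in range(num_layers)
    ]


# Hypothetical 8-layer stack, just to show the repeating pattern.
layers = hybrid_layer_pattern(8)
deltanet_share = layers.count("deltanet") / len(layers)
```

Whenever the depth is a multiple of four, three quarters of the layers are DeltaNet, which matches the 75% figure quoted above.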

Research this week

(as always, 🌟 indicates papers that we recommend paying attention to)

This week is about building the scaffolding that makes models usable, reliable, and durable in the wild:

Verification is becoming central

Agents are getting longer-horizon

Reinforcement learning is spreading everywhere

Synthetic data is becoming core infrastructure

Memory is returning as a major research frontier

World models and embodied prediction keep expanding

Multimodality is becoming more unified

Efficiency remains a primary battleground

Benchmarks are getting more realistic

Safety is shifting toward agentic settings

Agents, memory, tool use, and grounded action

CoVe: Training Interactive Tool-Use Agents via Constraint-Guided Verification

Builds agent-training data around explicit constraints that also double as verifiers, which is interesting because interactive agents usually fail where ambiguity meets deterministic action. →read the paper

🌟 Memex(RL): Scaling Long-Horizon LLM Agents via Indexed Experience Memory

Replaces lossy summarization with indexed external memory that can be dereferenced later, which is interesting because long-horizon agency probably needs retrieval over experience, not just compressed chat history. →read the paper

🌟 KARL: Knowledge Agents via Reinforcement Learning

Combines synthetic data generation, multi-task search training, and off-policy RL for enterprise search agents, which is interesting because it treats grounded knowledge work as an agent training problem rather than a pure retrieval problem. →read the paper

🌟 Learning When to Act or Refuse: Guarding Agentic Reasoning Models for Safe Multi-Step Tool Use

Makes refusal and safety checks explicit inside the action loop, which is interesting because agent safety breaks when it is bolted on after planning instead of embedded into planning. →read the paper
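The Memex(RL) item above contrasts lossy summaries with indexed memory that can be dereferenced later. A toy Python sketch of that contrast follows. It is not the paper's design: the `IndexedMemory` class, its method names, and the `mem:N` key format are hypothetical and only show the general idea of keeping a short handle in context while the full content lives outside it.

```python
class IndexedMemory:
    """Toy external store: full content outside, short handle in context."""

    def __init__(self):
        self._store = {}
        self._next_id = 0

    def write(self, content, label):
        """Save content verbatim; return its key and a one-line handle."""
        key = f"mem:{self._next_id}"
        self._next_id += 1
        self._store[key] = content
        return key, f"[{key}] {label}"

    def read(self, key):
        """Dereference a key to recover the exact original content."""
        return self._store[key]


memory = IndexedMemory()
original = "line 1 of a long tool output\nline 2\nline 3"
key, handle = memory.write(original, "tool output from step 3")
context = [handle]           # only the short handle stays in the prompt
recovered = memory.read(key) # the full text is still available later
```

A summary would have thrown away most of `original`; here nothing is lost, and the agent pays the context cost only when it chooses to read the entry back.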

Code agents and software reasoning

BeyondSWE: Can Current Code Agent Survive Beyond Single-Repo Bug Fixing?

Expands code-agent evaluation beyond neat repo-local bug fixes, which is interesting because real software work is messy, cross-repository, dependency-heavy, and rarely benchmark-friendly. →read the paper

🌟 Agentic Code Reasoning

Introduces semi-formal reasoning as a certificate for code understanding without execution, which is interesting because it points toward static semantic verification that could plug directly into agent training and review loops. →read the paper

Truthfulness, interpretability, and behavioral control

🌟 Reasoning Models Struggle to Control their Chains of Thought

Tests whether reasoning models can deliberately shape what appears in their chain of thought, which is interesting because it bears directly on whether CoT monitoring is about to become useless or remains informative. →read the paper

🌟 Censored LLMs as a Natural Testbed for Secret Knowledge Elicitation

Uses politically censored models as a naturally occurring dishonesty benchmark, which is interesting because most lie-detection setups are artificial and therefore too clean. →read the paper

🌟 Spilled Energy in Large Language Models

Recasts decoding through an energy-based lens and uses energy inconsistencies to detect hallucinations, which is interesting because it offers a training-free route to error detection straight from logits. →read the paper

Spectral Attention Steering for Prompt Highlighting

Steers attention by editing key embeddings before attention is computed, which is interesting because it makes prompt highlighting compatible with efficient attention implementations instead of fighting them. →read the paper

World models, embodied dynamics, and scientific simulation

Planning in 8 Tokens: A Compact Discrete Tokenizer for Latent World Model

Compresses world-model observations down to a tiny token budget, which matters because planning is often bottlenecked by representation overhead rather than the planner itself. →read the paper

Chain of World: World Model Thinking in Latent Motion

Connects world modeling with visuomotor learning by separating motion from scene structure, which is interesting for robotics because it pushes prediction toward what actually changes. →read the paper

🌟 Operator Learning Using Weak Supervision from Walk-on-Spheres

Reframes neural PDE learning around cheap stochastic supervision instead of expensive datasets or fragile PINN objectives, which makes it a practical bridge between scientific computing and learned operators. →read the paper

SciDER: Scientific Data-centric End-to-end Researcher

Extends research agents from literature-and-code loops into raw scientific data handling, which is interesting because it shifts autonomous science closer to actual experimental workflows. →read the paper

Reasoning improvement through data, RL, and verification

CHIMERA: Compact Synthetic Data for Generalizable LLM Reasoning

Shows that a relatively compact synthetic dataset can still move reasoning performance meaningfully when it is broad, structured, and automatically validated. →read the paper

Learn Hard Problems During RL with Reference Guided Fine-tuning

Uses partial reference solutions to help models enter the reward-yielding region on hard problems, which is interesting because it tackles reward sparsity without forcing the model to imitate alien human trajectories. →read the paper

Tool Verification for Test-Time Reinforcement Learning

Replaces shaky majority-vote pseudo-labels with tool-grounded verification, which is interesting because online self-improvement usually falls apart when the reward signal starts hallucinating confidence. →read the paper

🌟 V1: Unifying Generation and Self-Verification for Parallel Reasoners

Treats verification as pairwise comparison instead of isolated scoring, which is interesting because models often judge relative correctness better than absolute correctness. →read the paper

Heterogeneous Agent Collaborative Reinforcement Learning

Lets different agents share useful rollouts while still acting independently at inference time, which is interesting because it turns heterogeneity into training signal instead of treating it as noise. →read the paper

Surgical Post-Training: Cutting Errors, Keeping Knowledge

Focuses post-training on minimally corrected trajectories, which is interesting because it aims to improve reasoning without paying the usual catastrophic-forgetting tax. →read the paper

Production tuning and real-world model behavior

🌟 CharacterFlywheel: Scaling Iterative Improvement of Engaging and Steerable LLMs in Production

Documents a full production flywheel for improving social-chat models with live traffic, which is interesting because it exposes how model behavior is actually tuned when the benchmark is user interaction rather than lab elegance. →read the paper

Training efficiency, optimization, and model compression

Progressive Residual Warmup for Language Model Pretraining

Stabilizes pretraining by letting earlier layers settle before deeper ones fully engage, which is interesting because it treats depth as an optimization schedule rather than a fixed stack. →read the paper

SAGEBWD: A Trainable Low-Bit Attention

Pushes low-bit attention from fine-tuning territory into pretraining territory, which matters because efficient attention only really changes the game when it survives full training. →read the paper

On-Policy Self-Distillation for Reasoning Compression

Shrinks reasoning traces without relying on external labels or fixed token budgets, which is interesting because it treats verbose reasoning as something to optimize away rather than simply tolerate. →read the paper

That’s all for today. Thank you for reading! Please send this newsletter to colleagues if it can help them enhance their understanding of AI and stay ahead of the curve.