Token 1.2: Use Cases for Foundation Models and Key Benefits

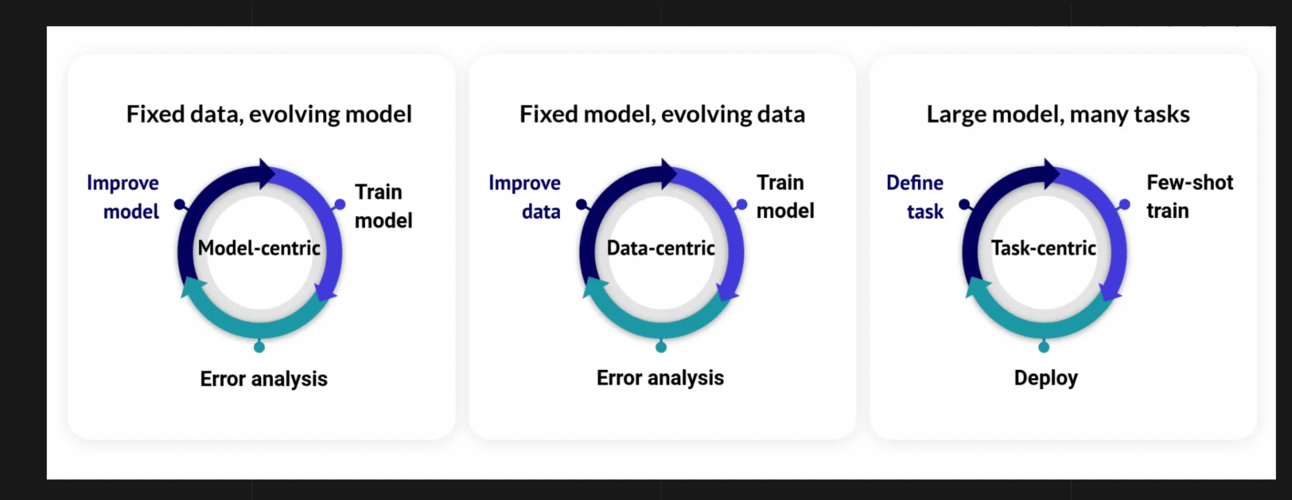

In episode 1.1, on the paradigm shift from data-centric to task-centric machine learning (ML), we mentioned that task-centric ML focuses on using foundation models to perform a wide range of tasks efficiently and with fewer training examples. In the comment section, Vu Ha, startup advisor at AI2 Incubator and Semantic Scholar’s first engineer, pointed out that he first used the term task-centric in October 2021, way ahead of its time!

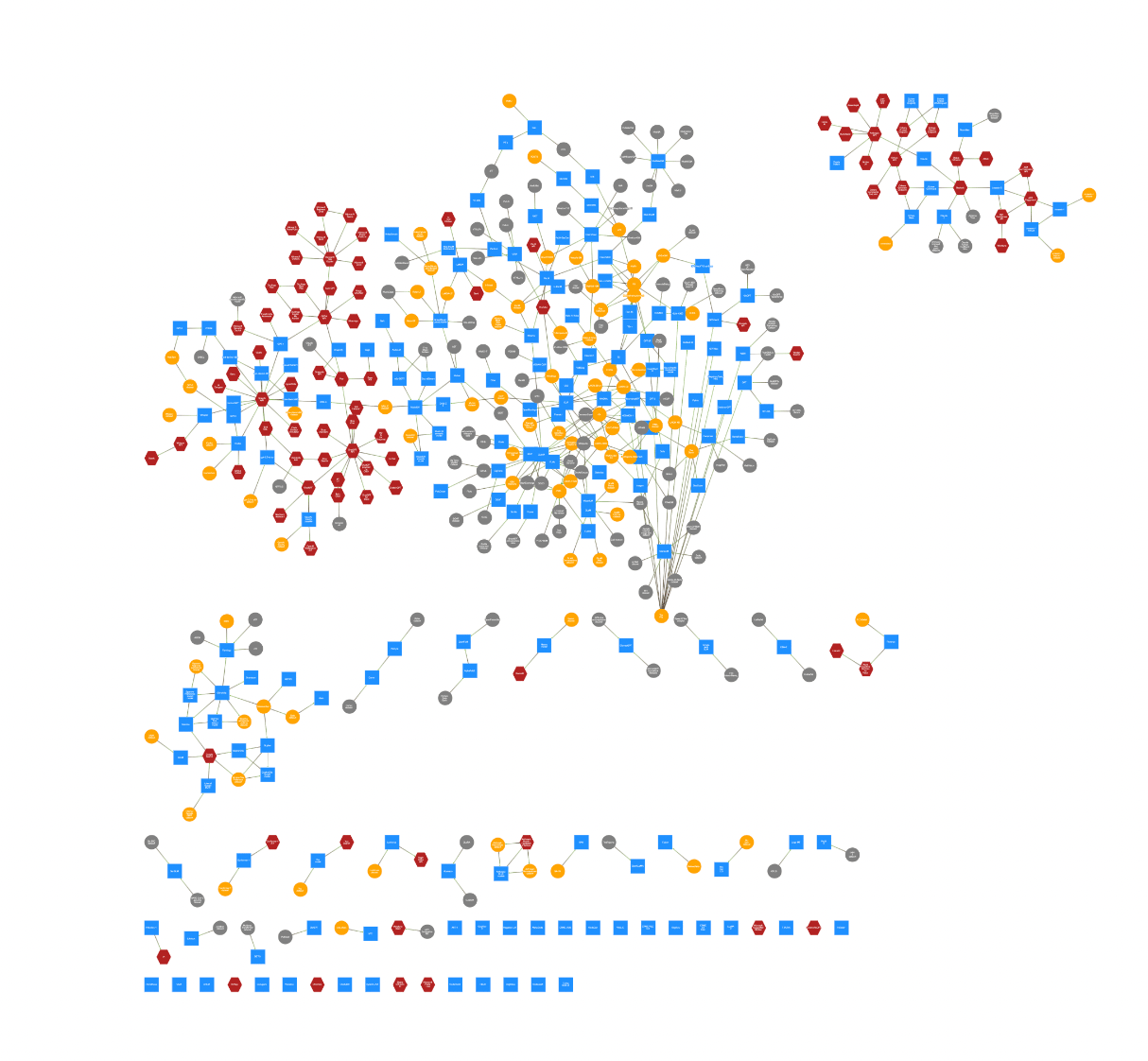

In that article, he proposed the following visualization of task-centric ML:

Image Credit: AI2 Incubator, Insights 4

Now, let's dig deeper into the world of foundation models and find out what makes them such game-changers. We'll also look at the cases where traditional ML is still king.

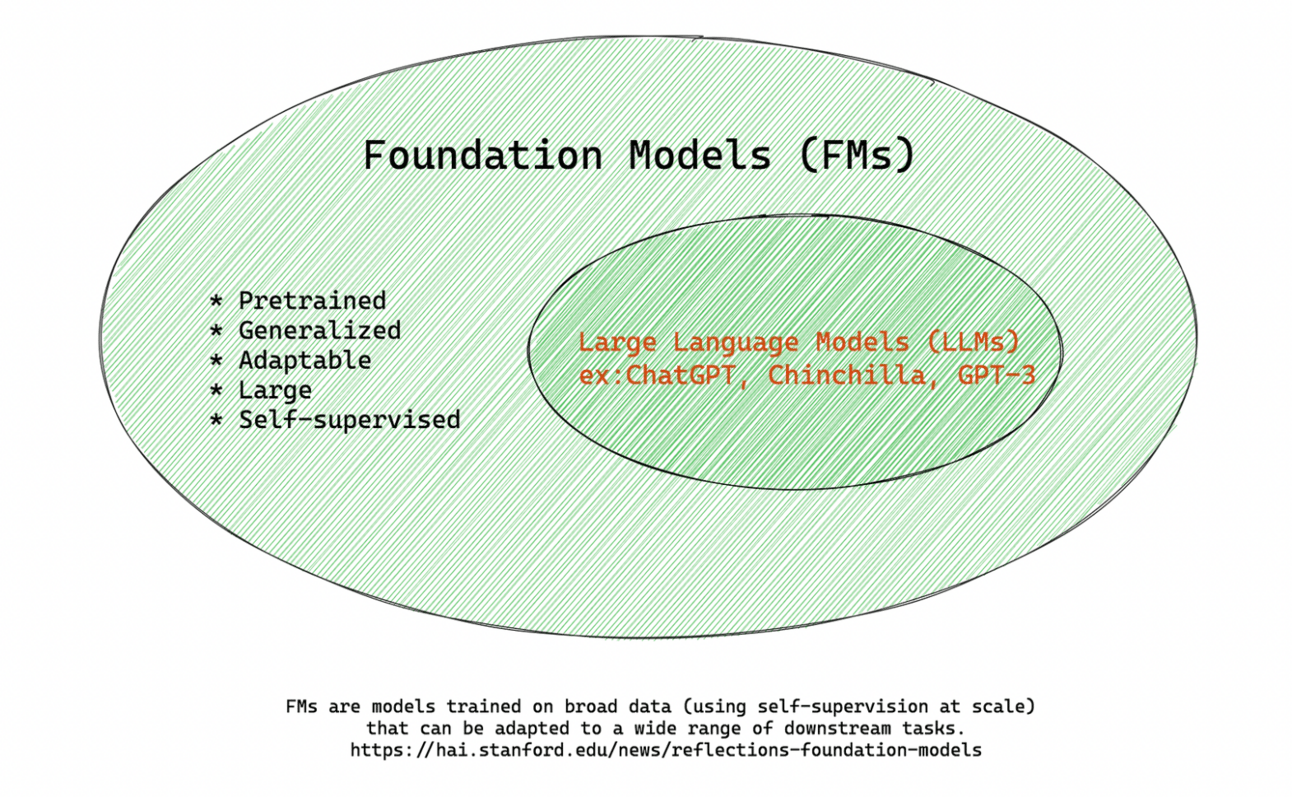

Basics first: what are foundation models?

Foundation models (FMs) are large-scale neural networks trained on broad data. They can perform multiple tasks without task-specific training, often through zero-shot or few-shot learning. They may handle multiple types of data (text, image, audio), making them a superset of Large Language Models (LLMs).

Let’s dive a little deeper

The term foundation models comes to us from Stanford University, where it was chosen “to underscore their critically central yet incomplete character”. These models have altered the trajectory of ML by lowering the barrier to entry for building complex AI systems. They've proven especially strong in one respect: versatility. Unlike the narrowly specialized models that came before, these computational behemoths are surprisingly flexible across an array of tasks.

These models employ "few-shot" and "zero-shot" learning methods. This means they can adapt to tasks they haven't been specifically trained for by using little (few-shot) or no (zero-shot) task-specific data. FMs can also be multimodal, working across data types such as text, image, or audio. Reinforcement Learning from Human Feedback (RLHF) plays an important role in fine-tuning and adapting these models.
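To make the zero-shot versus few-shot distinction concrete, here is a minimal Python sketch of one common way it shows up in practice: in-context prompting. The function name `build_prompt`, the sentiment task, and the example reviews are all hypothetical, chosen for illustration. No model is called; the sketch only shows how the text sent to a model differs between the two settings.

```python
from typing import Sequence, Tuple


def build_prompt(
    instruction: str,
    query: str,
    examples: Sequence[Tuple[str, str]] = (),
) -> str:
    """Assemble a prompt: zero-shot when `examples` is empty, few-shot otherwise."""
    lines = [instruction, ""]
    # Each labeled example becomes a solved instance the model can imitate.
    for text, label in examples:
        lines.append(f"Review: {text}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The unsolved query goes last, with the answer slot left open.
    lines.append(f"Review: {query}")
    lines.append("Sentiment:")
    return "\n".join(lines)


INSTRUCTION = "Classify the sentiment of the review as positive or negative."
QUERY = "The battery died after a day."

# Zero-shot: only the instruction and the query, no task-specific data.
zero_shot = build_prompt(INSTRUCTION, QUERY)

# Few-shot: the same instruction plus a handful of made-up labeled examples.
few_shot = build_prompt(
    INSTRUCTION,
    QUERY,
    examples=[
        ("Loved it, works perfectly.", "positive"),
        ("Broke within a week.", "negative"),
    ],
)

print(zero_shot)
print("-" * 40)
print(few_shot)
```

Either string would then be passed to whichever foundation model you use. The task is specified entirely in the prompt, so the model's weights do not change between the two cases.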

Image Credit: Stanford University

FMs can be closed-source, open-source, or available with limited access. The ecosystem of FMs is vast and growing daily. The Center for Research on Foundation Models (CRFM) offers an interactive map of it.

Now that we've got a handle on foundation models, let's check out all the cool stuff they bring to the table in the AI world.

The task-centric nature of foundation models

LLMs have democratized the use of machine learning, not just for data scientists and ML engineers, but also for software developers, business analysts, and – most shockingly – the casual user.

Why? Because they're fabulously task-centric and can be used without special data science or engineering training. Instead of training new models for each task, you can now tweak an existing model with a few words to do multiple tasks decently well. Vu Ha sums it up neatly: the new paradigm allows for "1,000 models with 10 labels each" as opposed to "10 models with 1,000 labels each." This shifts the focus from data collection to task specificity and model adaptability.

The user-friendly UX packaging created by OpenAI, coupled with their launch of ChatGPT without any guardrails, has catapulted us into a new reality. Now, no one can stop. So let's figure out how you can and should use FMs (and when it's still better to prefer traditional ML).

The utility of foundation models varies depending on the target audience

B2B: Focused on specialized, high-stakes tasks (e.g., fraud detection).

B2C: Aims for broad adaptability and user engagement (e.g., personalized shopping).

Apps: Often serve as the interface for foundation model capabilities.

End Users: Benefit from user-friendly applications that leverage these models for specific tasks.

Choosing between traditional ML and FM hinges on common sense and the ROI factor

Cost-benefit analysis reigns supreme when it comes to choosing between traditional ML and foundation models. Making that choice strategically, rather than by default, is the crux of advancing in the AI domain.
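As a rough illustration of what such a cost-benefit check might look like, here is a minimal Python sketch. Every number and name in it (hours saved, hourly cost, request volume, per-request price, fixed monthly cost) is a made-up assumption, not a figure from this article or from any provider's pricing. Replace them with your own estimates.

```python
def monthly_benefit(hours_saved: float, hourly_cost: float) -> float:
    """Value of the staff time an FM-based workflow frees up each month."""
    return hours_saved * hourly_cost


def monthly_cost(requests: int, cost_per_request: float, fixed_cost: float) -> float:
    """Usage-based spend plus fixed overhead (integration, review, monitoring)."""
    return requests * cost_per_request + fixed_cost


def roi(benefit: float, cost: float) -> float:
    """Return on investment: net gain divided by what was spent."""
    return (benefit - cost) / cost


# Hypothetical inputs for a single team.
benefit = monthly_benefit(hours_saved=40, hourly_cost=50.0)
cost = monthly_cost(requests=10_000, cost_per_request=0.01, fixed_cost=400.0)

print(f"benefit: {benefit:.2f}")
print(f"cost:    {cost:.2f}")
print(f"ROI:     {roi(benefit, cost):.2f}")
```

A positive ROI under conservative estimates is a reasonable signal to pilot an FM. A negative or marginal one suggests staying with what already works.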

What should startups do?

Startups, often working with tight budgets and smaller datasets, may find traditional ML models a more feasible choice. These models are generally easier to customize and quicker to deploy, aligning well with the nimble nature of startups and their pursuit of a faster time to market. Using FMs is possible via APIs, though it raises questions about data safety.

What should enterprises do?

On the flip side, enterprises, with abundant resources and vast datasets, might find foundation models more fitting. These models are engineered for scalability, capable of handling complex tasks, and provide a pathway for long-term strategic advantage in the competitive AI landscape. The expertise available within large enterprises may also better complement the demands of foundation models, paving the way for superior performance and innovation.

In conclusion, the choice between traditional ML and foundation models should be a strategic alignment with the entity's scale, resources, and long-term vision.

Also, as a general rule of thumb: if something is working well with a traditional model, it's better to continue using it. Not every task is suitable for, or benefits from, the complexity and adaptability of foundation models, and it's wise to assess the suitability of FMs on a case-by-case basis.

And, yep, traditional ML isn't packing up and leaving just yet. There are times when it's still the MVP:

When Traditional ML Still Makes Sense

Despite the rise of foundation models, traditional ML algorithms like Support Vector Machines (SVMs) and decision trees still have their niches:

Speed and Efficiency: In tabular data cases (e.g., spreadsheets), classical algorithms often perform exceptionally well and require fewer computational resources compared to neural networks.

Interpretability: For sectors like healthcare, finance, and legal, the need to interpret and understand the decision-making process is critical. Traditional ML models are far more transparent than deep learning models, fulfilling this requirement.

Embedded Systems: Traditional ML models can operate within tight memory and computational constraints, making them ideal for embedded systems where computational resources are limited.

Feature Engineering: Traditional ML models often perform better when you handcraft the features, utilizing domain expertise. In industries like finance, this is often the norm.
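The interpretability and efficiency points above can be illustrated with a minimal sketch: a one-level decision tree (a "decision stump") written in plain Python and fitted to a tiny tabular dataset. The feature names, rows, and labels are invented for illustration. The stump only considers rules of the form "feature > threshold", so it is a simplification of real decision-tree learners.

```python
from typing import List, Sequence, Tuple

# Hypothetical tabular features for a fraud-style task.
FEATURES = ("transaction_amount", "account_age_days")


def fit_stump(rows: Sequence[Sequence[float]], labels: Sequence[int]) -> Tuple[int, float]:
    """Return the (feature index, threshold) whose rule makes the fewest training errors.

    The rule is: predict 1 when row[feature] > threshold, otherwise predict 0.
    """
    best = None  # (errors, feature index, threshold)
    for f in range(len(rows[0])):
        # Try every observed value of this feature as a candidate threshold.
        for threshold in sorted({row[f] for row in rows}):
            errors = sum((row[f] > threshold) != bool(y) for row, y in zip(rows, labels))
            if best is None or errors < best[0]:
                best = (errors, f, threshold)
    return best[1], best[2]


# Made-up training data: (transaction_amount, account_age_days) and a 0/1 label.
rows: List[Tuple[float, float]] = [
    (20.0, 400.0),
    (35.0, 900.0),
    (50.0, 30.0),
    (900.0, 10.0),
    (1200.0, 5.0),
    (750.0, 700.0),
]
labels = [0, 0, 0, 1, 1, 1]

feature, threshold = fit_stump(rows, labels)


def predict(row: Sequence[float]) -> int:
    """Apply the learned single-rule model to one row."""
    return int(row[feature] > threshold)


# The entire model is one human-readable rule.
print(f"flag if {FEATURES[feature]} > {threshold}")
```

The learned model is a single rule that a domain expert can read and challenge, and it needs only a few bytes of memory to run. That is the kind of transparency and footprint the list above has in mind.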

With FMs, augmentation is the keyword

When considering foundation model implementation, there are two pivotal questions to ponder: Does employing FMs augment your workflow, accelerate processes, free up time, and boost overall efficiency? Moreover, is it financially viable? Consider this from two vantage points:

Business Optimization

Human Optimization (encompassing you, your co-workers, and employees)

For Human Optimization

The answer depends on the task at hand. For software developers, say, using FMs is like having a small team of helpers. You need expertise to direct them and check the results, but that’s always true when managing a team.

Additional Read: In his article ‘Everyone is above average’ Ethan Mollick discusses the leveling effect of AI on the workforce, particularly emphasizing how AI boosts the performance of lower-skilled workers across various professions. The article reveals that AI significantly narrows the skill gap between top and lower performers, as showcased in a study where lower-half consultants at Boston Consulting Group improved their output quality by 43% with AI assistance. Mollick mentions varying perspectives on AI's role, either as a skill leveler, escalator, or kingmaker, each impacting workers differently. Amidst the rapid evolution driven by AI, he urges thoughtful deliberation on the implications and the reshaping of the professional landscape to ensure a balanced and inclusive transition into this new era of work.

For Business Optimization: Industries Poised for Transformation

The infusion of FMs is set to redefine operational landscapes across multiple sectors:

Healthcare: The advent of predictive analytics fueled by foundation models is poised to revolutionize patient outcomes, accelerate drug discovery processes, and enhance medical imaging analysis, opening avenues for a healthier world.

Finance: In a sector where accuracy is paramount, foundation models step in as a vanguard for fraud detection, algorithmic trading, and automating customer services.

E-commerce: The era of personalized shopping is ushered in with refined recommendation systems, precise customer behavior predictions, and robust inventory optimization strategies.

Automotive: The road to autonomous vehicles becomes less tumultuous with foundation models steering the wheel on predictive maintenance and real-time monitoring systems.

Research and Academia: From parsing convoluted datasets in climate science to interpreting ancient texts, foundation models offer a useful toolset that helps scholars derive actionable insights.

Unfolding Use Cases: A Glimpse into the Future

Co-pilots and personal assistants: Companies such as Google and Microsoft are building foundation models into their entire user ecosystems, which could revolutionize the way we interact with computers and deepen our communication with them.

Automated Customer Service: The deployment of chatbots and virtual assistants to handle customer queries not only augments customer experience but also drives operational efficiency to new heights.

Predictive Analytics and Supply Chain Optimization: The ability to accurately forecast trends and demands using historical data can be a game-changer for strategic planning and resource allocation, enabling businesses to stay a step ahead.

Sales Forecasting: Empowering businesses with a crystal ball to predict sales trends, foundation models enable informed decision-making, ensuring resources are aptly channeled for maximum profitability.

Program Synthesis: Converting natural language instructions into code snippets, or even full-fledged programs, can be quite painful. Task-focused LLMs could ease that pain.

These two lists are nowhere close to being complete. We are still in the nascent era of foundation models and will learn, develop, and invent new ways of using them every day. Foundation models are significantly shaping how businesses and end-users interact with this multitasking ML technology, offering a balance of versatility and specialization that we haven't seen before.

List of links from this article:

Not used in the article but can be tremendously helpful: https://assets.publishing.service.gov.uk/media/650449e86771b90014fdab4c/Full_Non-Confidential_Report_PDFA.pdf
