The complexity of human reasoning remains a challenging frontier for large language models (LLMs). LLMs often struggle with tasks that require several sequential reasoning steps, such as arithmetic, commonsense, and symbolic reasoning.

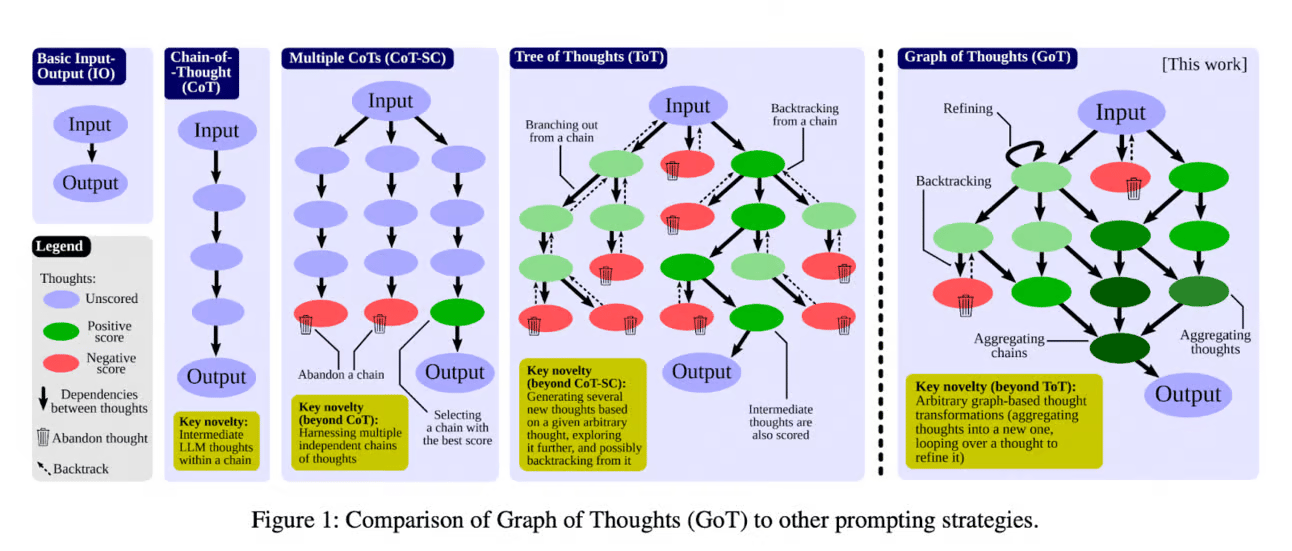

One partial solution to this challenge is chain-of-thought (CoT) prompting, a method proposed by Google Brain and presented at NeurIPS 2022. It prompts the LLM to generate a series of intermediate reasoning steps before giving its final answer.
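To make the idea concrete, here is a minimal Python sketch of how a few-shot CoT prompt might be assembled. The exemplar, the `Q:`/`A:` layout, and the names `COT_EXEMPLARS` and `build_cot_prompt` are illustrative assumptions rather than code from the original method. The call to an actual model is omitted because it depends on the API you use.

```python
# A minimal sketch of few-shot chain-of-thought prompt construction.
# The exemplar is illustrative (hypothetical); replace it with examples from your own task.
COT_EXEMPLARS = [
    {
        "question": "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
                    "Each can has 3 tennis balls. How many tennis balls does he have now?",
        # The key ingredient of CoT: the exemplar spells out intermediate steps.
        "reasoning": "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
                     "6 tennis balls. 5 + 6 = 11.",
        "answer": "11",
    },
]


def build_cot_prompt(question: str, exemplars: list = COT_EXEMPLARS) -> str:
    """Return a prompt whose exemplars show reasoning steps before the answer."""
    parts = []
    for ex in exemplars:
        parts.append(
            f"Q: {ex['question']}\nA: {ex['reasoning']} The answer is {ex['answer']}."
        )
    # The new question is left open so the model continues with its own reasoning.
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)


if __name__ == "__main__":
    print(build_cot_prompt(
        "A cafeteria had 23 apples. It used 20 and bought 6 more. How many apples does it have?"
    ))
```

Because the exemplar answers show their work, the model tends to imitate that format and write out its own steps before stating "The answer is ...".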

The introduction of CoT prompting improved LLM performance on complex reasoning tasks. It also sparked researchers' interest, leading to more sophisticated methods built on the underlying idea of CoT, which we discuss extensively in our article.

In this short article, we compile useful resources to help you apply CoT methods in your projects:

Methods that require you to write your prompt in a specific way:

- Basic: zero-shot prompting, few-shot prompting
- Chain-of-thought: original method, self-consistency, zero-shot chain-of-thought -> Read Chain-of-Thought Prompting Explained and use these 7 resources to master prompt engineering
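The prompt styles listed above can be compared side by side in a short Python sketch. It is hypothetical: the function names, the `Q:`/`A:` layout, and the "last number in the completion" answer parser are assumptions made for illustration. `sample` stands in for whatever function you use to draw one completion from your model, typically at a nonzero temperature so that repeated samples differ. Zero-shot CoT appends the cue "Let's think step by step." to the prompt. Self-consistency samples several reasoning paths and returns the most common final answer.

```python
import re
from collections import Counter
from typing import Callable, List, Optional, Tuple

# Reasoning cue used by zero-shot chain-of-thought.
ZERO_SHOT_COT_TRIGGER = "Let's think step by step."


def zero_shot_prompt(question: str) -> str:
    # Plain zero-shot: the question alone, with no examples and no reasoning cue.
    return f"Q: {question}\nA:"


def few_shot_prompt(question: str, examples: List[Tuple[str, str]]) -> str:
    # Plain few-shot: (question, answer) pairs without intermediate reasoning.
    shots = [f"Q: {q}\nA: {a}" for q, a in examples]
    shots.append(f"Q: {question}\nA:")
    return "\n\n".join(shots)


def zero_shot_cot_prompt(question: str) -> str:
    # Zero-shot CoT: no examples, but the answer is primed with a reasoning cue.
    return f"Q: {question}\nA: {ZERO_SHOT_COT_TRIGGER}"


def extract_answer(completion: str) -> Optional[str]:
    # Hypothetical parsing rule: treat the last number in the completion as the answer.
    numbers = re.findall(r"-?\d+(?:\.\d+)?", completion)
    return numbers[-1] if numbers else None


def self_consistency(sample: Callable[[str], str], prompt: str, n: int = 5) -> Optional[str]:
    # Sample n reasoning paths, parse each final answer, and take a majority vote.
    answers = [extract_answer(sample(prompt)) for _ in range(n)]
    votes = Counter(a for a in answers if a is not None)
    if not votes:
        return None
    return votes.most_common(1)[0][0]
```

For example, with a stand-in sampler that returns the completions "... so the answer is 9.", "... The answer is 9." and "... The answer is 8." in turn, `self_consistency(sampler, prompt, n=3)` returns `"9"`. Voting on the parsed final answers rather than on the full text is what lets different reasoning paths that reach the same result reinforce each other.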