- AV cars are very polite. They're too polite for humans. You need to be bold.

- You do need to be bold. But at the same time, you also always need to be risk-averse. It's a combination, right? So that balance is one of the hard challenges of getting self-driving right.

The first phrase is mine, watching from the passenger seat as the new self-driving Mercedes-Benz was trying to make its way into a packed right lane.

We are in the middle of San Francisco, around 2 pm, in real traffic, testing NVIDIA's autonomous vehicle (AV) software. NVIDIA is building it as a platform, with many key elements open sourced, that any car company can adopt: first for L2 driving and eventually for much higher levels of autonomy. That openness and availability excite me the most.

The reply comes from Ali Kani, who was our guide that day. He has been at NVIDIA Automotive for almost eight years, through many of the ups and downs, and he is eager to explain everything. What started as a joke turns out to be a neat summary of why NVIDIA’s autonomy stack looks the way it does. A modern self-driving system has to be smooth enough to behave like a good human driver, cautious enough not to do something stupid, and traceable enough that an OEM, a regulator, and eventually a passenger can understand why it did what it did.

This is a real inflection point for self-driving cars, and if you want to understand the future that is starting to take shape now, you need to understand how this stack works.

This piece can be read in a few different ways. It is partly a guide to the current stack, from in-car models to safety layers to simulation. It is partly a look at the hardware path NVIDIA believes autonomy will require. And it is partly a case study in how one company is trying to make self-driving more reproducible, more scalable, and less dependent on closed internal systems.

Or you can just experience our drive and the conversation that became the backbone of this article →

… By the way, although Ali thought the driver might need to take over, the car, after a brief hesitation, found the right moment and made it into the lane. It was smoother than many human drivers would have been.

What's in today's episode?

The easiest way to understand NVIDIA’s autonomy stack

It’s not there yet (and that’s awesome)

How this whole thing works →

What changes when NVIDIA moves to Level 4

Where Alpamayo actually fits

Before reasoning VLAs, how were AV systems handling driving? (and why NVIDIA has not abandoned the modular world completely)

What happens in the cloud, and why it may matter as much as the car?

Is NVIDIA’s dual-stack approach a silver bullet?

So where is NVIDIA different (and what might soon make it the largest car company without building a single car)?

How do I actually start learning this stack?

A serious resource list for people who want to learn the field

The easiest way to understand NVIDIA’s autonomy stack

The easiest mistake is to think of NVIDIA’s self-driving effort as one product. It is much closer to a layered system.

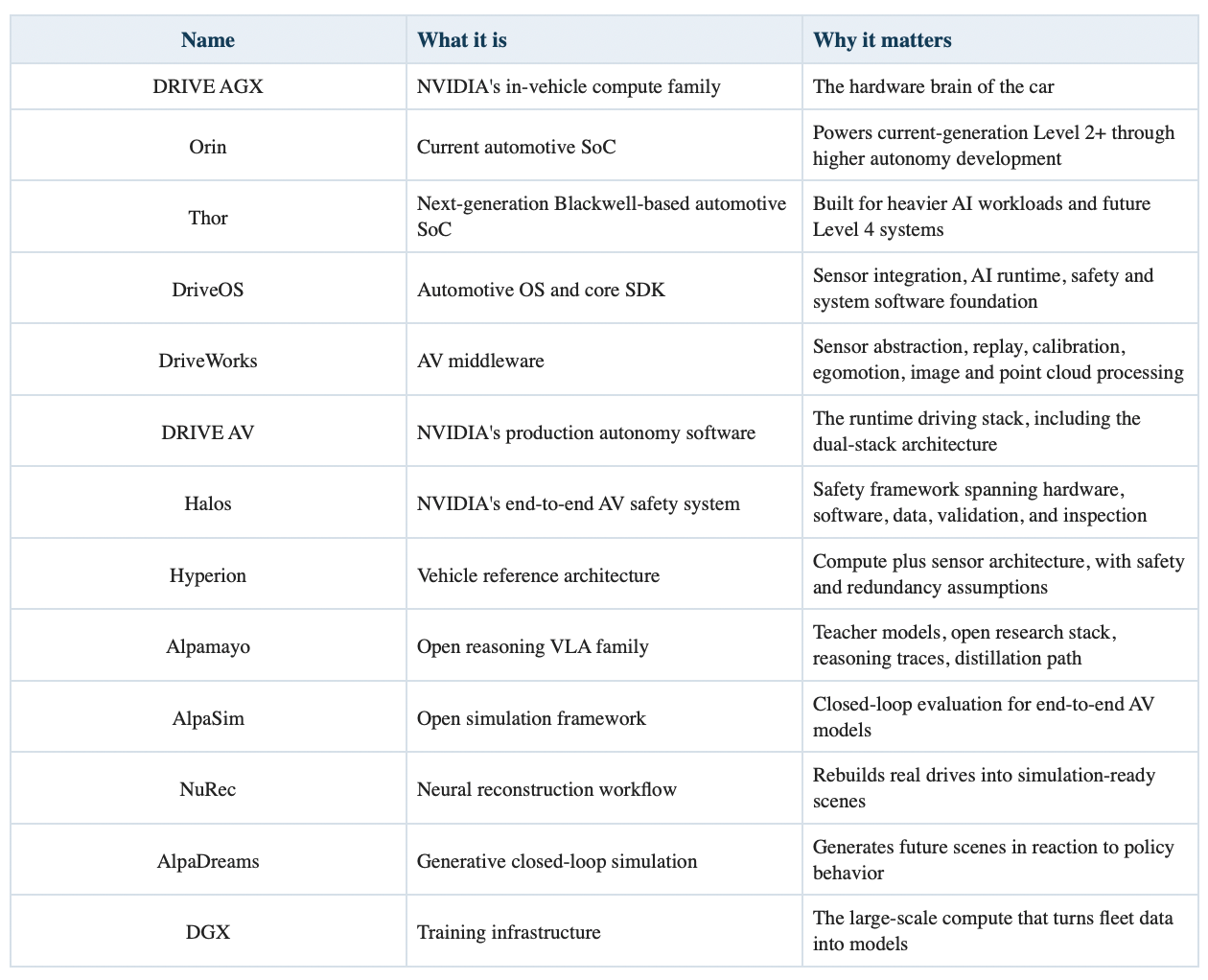

At the bottom is in-vehicle compute. Today that means DRIVE AGX Orin in current-generation systems. Orin is the compute platform that Mercedes publicly tied to its next-generation Level 2 and Level 3 driving efforts when it laid out MB.OS. For the next step up, NVIDIA wants DRIVE AGX Thor. Thor is the Blackwell-based automotive SoC designed for much heavier AI workloads, including generative AI, vision-language-action models, and increasingly consolidated cockpit-plus-driving workloads. One useful clarification here, because NVIDIA has created enough branding layers to qualify as a minor weather system: DRIVE AGX is the hardware family, Orin and Thor are the chips, and Hyperion is not the chip but the reference vehicle architecture built around the chip.

On top of the hardware sits the low-level software foundation. This is DriveOS and the broader runtime plumbing around it. DriveOS is NVIDIA’s automotive operating system and SDK. It supports Linux or QNX, sensor integration, image processing, AI acceleration, inter-process communication, debugging, and profiling. Simply put, DriveOS is the systems layer, NvMedia handles the sensor and media pipelines, NvStreams handles efficient data movement, CUDA and TensorRT handle accelerated compute and inference, and DriveWorks sits above that as the middleware toolkit developers actually use to build AV functions.

Above that sits the runtime autonomy software, which NVIDIA calls DRIVE AV. This is the part that matters most conceptually, because DRIVE AV is built as a dual-stack architecture (according to Ali Kani):

One side is the AI end-to-end stack that learns holistic driving behavior from data – Alpamayo.

The other is a parallel classical safety stack that provides redundancy, verification, and explicit guardrails – Halos.

Publicly, Halos is now NVIDIA’s umbrella safety framework across hardware, software, tools, datasets, processes, inspection, and validation. In the interview, the term was also used in a narrower way to describe the classical safety guardrails sitting beside the end-to-end model. Both uses are related, but they are not identical.

Then there is Hyperion, which is NVIDIA’s reference vehicle architecture. Hyperion bundles compute, sensors, and safety assumptions into a template OEMs can build on. Hyperion 8 is the Orin generation. Hyperion 10 is the Thor generation and the more obvious Level 4 jump: dual Thor, lidar, more cameras, more radars, more interior sensing, and enough redundancy to keep operating if something fails. Hyperion is NVIDIA’s way to standardize the vehicle architecture around its autonomy stack.

Finally, there is the cloud training, simulation, and safety loop, which many people still underestimate. NVIDIA’s AV stack is not only in-vehicle software. It is also DGX for training, Omniverse and Cosmos for simulation, NuRec for reconstruction, open datasets for training and validation, and increasingly generative systems like AlpaDreams for closed-loop world generation. This is why Halos is described as a cloud-to-car safety system. NVIDIA is explicitly saying that the safety story begins long before deployment and stretches across training, validation, simulation, and runtime behavior.

Image Credit: NVIDIA, 3D reconstruction

Naming cheat sheet, because NVIDIA has created a small alphabet soup

It’s not there yet (and that’s awesome)

Ali was very clear during the ride that this is still Level 2, not Level 4. The driver remains responsible, and he genuinely expected that in dense city traffic we might need to take over. The current setup is also deliberately constrained: no lidar, relatively modest compute, and hardware designed to be cheap enough for real consumer vehicles rather than experimental fleets. The car drives very smoothly, but sometimes you can feel those constraints in its behavior: a little ghost braking, a brief hesitation, over-politeness.

And that is exactly why the ride was impressive. It did not feel like a fantasy prototype built under ideal conditions. It felt like a real system, running on a real consumer-grade stack, already handling more than many people would expect. For NVIDIA, a company that does not build cars, that’s a serious success. I heard Jensen Huang even brought his parents to do the test drive that week. He had reasons to.

How this whole thing works →

What the current Level 2++ stack is trying to do

As noted above, the Mercedes CLA system is not "full self-driving." It is a supervised, navigation-aware, point-to-point assistance system. The driver remains responsible. Cooperative steering means the human can adjust steering without fully disengaging the system (that’s an awesome feature!). Mercedes also says certain steering-hand requirements remain in place, especially through turns, because Level 2 still assumes the human can immediately take over.

From a systems perspective, the current stack looks like this:

Sensors: camera-heavy, with radar and ultrasonic coverage, but no public lidar mention for the current CLA consumer system.

Compute: Orin-generation centralized compute.

Inputs: sensor streams plus navigation guidance (Google Maps).

Core runtime: DRIVE AV dual-stack architecture.

Human interface: supervised Level 2++, cooperative steering, OTA-upgradable features.

Safety posture: driver remains in the loop, classical stack constrains the AI stack.

What the end-to-end model is doing in the current car

In the interview, NVIDIA described the end-to-end model as tokenizing sensor input and outputting a trajectory. That is the modern end-to-end framing: instead of hand-authoring every submodule boundary, the model learns to map observations, route intent, and motion history into action proposals.

The current evolution seems to be moving from pure camera input toward richer multimodal inputs and more flexible interfaces:

Variable camera counts

Navigation map input

Text input in newer parent models

Distillation from a larger teacher into a smaller in-car model

This matters because the open Alpamayo 1.5 model adds exactly those capabilities: navigation guidance, flexible camera counts, text-conditioned behavior, and interactive Q&A. In other words, the research track and the product track are clearly feeding each other.
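To make the distillation idea concrete, here is a minimal sketch, assuming both the teacher and the student emit the same number of (x, y) waypoints and the student is penalized by mean squared distance to the teacher. The function name, the waypoint convention, and the loss choice are illustrative assumptions, not NVIDIA's published training recipe.

```python
from typing import List, Tuple

# Assumed convention: (x, y) in metres, in the ego vehicle's frame.
Waypoint = Tuple[float, float]


def distillation_loss(teacher: List[Waypoint], student: List[Waypoint]) -> float:
    """Mean squared distance between matching teacher and student waypoints."""
    if len(teacher) != len(student) or not teacher:
        raise ValueError("trajectories must be non-empty and the same length")
    total = 0.0
    for (tx, ty), (sx, sy) in zip(teacher, student):
        total += (tx - sx) ** 2 + (ty - sy) ** 2
    return total / len(teacher)


# Hypothetical example: the teacher nudges left, the student drives straight.
teacher_traj = [(1.0, 0.0), (2.0, 0.1), (3.0, 0.3)]
student_traj = [(1.0, 0.0), (2.0, 0.0), (3.0, 0.0)]
loss = distillation_loss(teacher_traj, student_traj)
```

A real pipeline would minimize a loss like this over many scenes with gradient descent; the point here is only that the smaller in-car model is trained to reproduce what the larger model would have done.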

From driving to interacting: talking to the car

One detail that may look secondary at first, but is actually quite important, is that these models are no longer limited to sensor input and trajectory output. They can also take text.

That changes the interface. Instead of selecting from predefined modes or navigating through menus, you can describe intent.

“Take a smoother route.”

“Be more assertive in traffic.”

“Why did you stop?”

This is not how the current CLA system is designed to be used, and it is not what we experienced during the ride. But at the model level, the capability is already there. Alpamayo explicitly supports text input and question answering, and Thor is being positioned to run language models alongside driving.

So the shift is not only from manual driving to automated driving. It is also from operating a system to interacting with it. Over time, that will likely become one of the main ways people build trust in these systems: not only by observing behavior, but by being able to ask for it, adjust it, and question it in plain language. Just amazing.

What the classical safety stack is doing

This is the adult in the room.

The interview example that makes this easiest to understand was "no right on red." The end-to-end model might see an open gap and think a turn is physically possible. The classical stack enforces the rule and blocks the action. In the ride, NVIDIA also described sign detection, collision-risk prediction, and explicit constraint handling as part of that guardrail logic.

This is not just about traffic signs. The classical stack exists because:

Black-box models are hard to audit.

Safety cases still need traceability.

OEMs want configurable regional and legal constraints.

Regulators do not accept vibes as an assurance argument.

NVIDIA's public safety materials make the same point in more formal language: Halos combines classical and AI-based models, platform safety, algorithmic safety, and ecosystem safety to build guardrails into design-time, validation-time, and deployment-time workflows.
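The "no right on red" example can be sketched as a rule filter sitting between the learned model's proposals and the final decision. This is a toy illustration under stated assumptions: `Proposal`, `SceneRules`, `is_allowed`, and `select` are hypothetical names, and the real classical stack is certainly far richer than a single rule.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Proposal:
    maneuver: str   # e.g. "turn_right" or "wait" (hypothetical labels)
    score: float    # learned model's preference, higher is better


@dataclass(frozen=True)
class SceneRules:
    light_is_red: bool
    no_right_on_red_sign: bool


def is_allowed(p: Proposal, rules: SceneRules) -> bool:
    """Explicit, auditable rule check: block right turns on red where signed."""
    if p.maneuver == "turn_right" and rules.light_is_red and rules.no_right_on_red_sign:
        return False
    return True


def select(proposals: List[Proposal], rules: SceneRules) -> Optional[Proposal]:
    """Return the best-scoring proposal that passes the rule check, if any."""
    allowed = [p for p in proposals if is_allowed(p, rules)]
    return max(allowed, key=lambda p: p.score) if allowed else None


# The model prefers the turn because it sees a gap; the guardrail overrides it.
proposals = [Proposal("turn_right", 0.9), Proposal("wait", 0.4)]
chosen = select(proposals, SceneRules(light_is_red=True, no_right_on_red_sign=True))
```

The design point is that the rule lives in plain, inspectable code: an OEM can configure it per region, and an auditor can read exactly why the turn was blocked.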

Current vs planned hardware, in one table

What changes when NVIDIA moves to Level 4

Almost everything.

That is the first thing to understand. Level 4 is not just "same thing, but more polished." It is a different safety assumption, a different hardware assumption, and a different redundancy requirement.

NVIDIA’s published Hyperion 10 (planned Level 4 architecture) specification includes two DRIVE AGX Thor SoCs, 14 cameras, 9 radars, 1 lidar, 12 ultrasonic sensors, 4 interior cameras, and an exterior microphone array. NVIDIA explicitly positions Hyperion 10 as Level 4-ready and fault tolerant. The logic is straightforward: if one computer fails, the second remains. If one sensor modality degrades, another modality can cover part of the gap. This is classic defense in depth, but implemented for an AI-heavy AV era.
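As a rough sketch, the published sensor counts can be written down as a configuration object with a toy fault-tolerance check on top. The counts come from the specification quoted above; the class name, the `can_keep_operating` rule (at least one healthy computer and at least two healthy sensor modalities), and the modality labels are hypothetical and only illustrate the redundancy logic.

```python
from dataclasses import dataclass
from typing import Set


@dataclass(frozen=True)
class Hyperion10Config:
    thor_socs: int = 2
    cameras: int = 14
    radars: int = 9
    lidars: int = 1
    ultrasonics: int = 12
    interior_cameras: int = 4
    # The exterior microphone array is omitted: no count is given in the text.

    def exterior_sensor_count(self) -> int:
        """Total exterior cameras, radars, lidars, and ultrasonic sensors."""
        return self.cameras + self.radars + self.lidars + self.ultrasonics


def can_keep_operating(healthy_socs: int, healthy_modalities: Set[str]) -> bool:
    """Hypothetical rule: one working computer plus two independent modalities."""
    return healthy_socs >= 1 and len(healthy_modalities) >= 2


config = Hyperion10Config()
# One Thor fails and lidar degrades, but cameras and radar remain.
still_ok = can_keep_operating(healthy_socs=1, healthy_modalities={"camera", "radar"})
```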

Why the hardware jumps so much

Three reasons.

More autonomy means more responsibility moves into the machine

At Level 2, the human is the fallback. At Level 4, the system must perform the fallback within its operational design domain. That alone changes the hardware and software burden.

Redundancy is not optional

For a Level 2 system, a graceful handoff to the human is part of the safety story. A Level 4 system cannot do that.

The model itself gets heavier

NVIDIA is increasingly building around VLA-style and reasoning-heavy workloads. Thor is not only about driving. NVIDIA also wants consolidated vehicle intelligence: autonomous driving, cockpit AI, language models, and multimodal assistants on one centralized compute architecture. Thor is the chip for that future.

What the planned Mercedes Level 4 path looks like

In January 2026, NVIDIA and Mercedes said a future S-Class built on MB.OS would use DRIVE Hyperion and full-stack DRIVE AV L4 software, aimed at future robotaxi operations through Uber's mobility platform. In March, NVIDIA also announced Hyperion adoption by BYD, Geely, Isuzu, and Nissan for Level 4 programs.

That gives a fairly clean two-step roadmap:

CLA: consumer Level 2++ point-to-point driving

S-Class and other Hyperion 10 partners: Level 4-ready architecture for premium autonomy and fleet use cases

So when NVIDIA says it supports everything from Level 2++ to Level 4, it is claiming one software-and-infrastructure family spans those autonomy levels while the vehicle architecture scales up.

Where Alpamayo actually fits

This is the part people are most likely to misunderstand.

Alpamayo is not the entire production driving stack. NVIDIA effectively says that itself, even when the surrounding marketing language gets more excited than it should. The open Alpamayo models are teacher models and research foundations. They are intended to push reasoning-based autonomy forward, provide a transparent VLA baseline, enable evaluation and auto-labeling workflows, and support fine-tuning and distillation toward target deployments.

NVIDIA’s own GitHub disclaimer says Alpamayo is not a fully fledged driving stack, lacks critical real-world sensor inputs and redundant safety mechanisms, and has not undergone automotive-grade validation for deployment. That sentence clears up a lot of confusion.

What Alpamayo 1.5 actually is

Alpamayo 1.5 is NVIDIA's open 10B-parameter reasoning VLA model for AV research. Publicly, NVIDIA describes it as:

Built on the Cosmos-Reason2 VLM backbone

RL post-trained

Able to take image or video, text, and egomotion history

Able to use navigation guidance

Flexible across camera counts

Able to answer user questions

Designed to output trajectories plus reasoning traces

The model card breaks it down into an 8.2B backbone plus a 2.3B action expert, with a default four-camera input setting and a hardware requirement of at least 24 GB of VRAM for single-sample inference.
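A quick back-of-the-envelope check shows why a 24 GB figure is plausible. This sketch assumes 16-bit weights (2 bytes per parameter) and decimal gigabytes; both are assumptions of this example, not statements from the model card, and it ignores activations and other runtime memory.

```python
# Parameter counts as quoted from the model card.
BACKBONE_PARAMS = 8_200_000_000
ACTION_EXPERT_PARAMS = 2_300_000_000

# Assumption: weights stored in a 16-bit format such as bf16 or fp16.
BYTES_PER_PARAM = 2
VRAM_REQUIREMENT_GB = 24

total_params = BACKBONE_PARAMS + ACTION_EXPERT_PARAMS   # 10.5B, rounded to "10B" in the headline
weight_gb = total_params * BYTES_PER_PARAM / 1e9         # memory for weights alone
headroom_gb = VRAM_REQUIREMENT_GB - weight_gb            # left for activations, caches, etc.

print(f"{total_params / 1e9:.1f}B params, {weight_gb:.1f} GB weights, {headroom_gb:.1f} GB headroom")
```

Under those assumptions the weights alone take about 21 GB, which leaves only a few gigabytes for everything else and is consistent with the "single-sample inference" caveat.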

So Alpamayo is best understood as a bridge between end-to-end trajectory generation, language-based reasoning and explanation, and the distillation path into smaller runtime-capable models.

And here is why this bridge is so important:

Why reasoning matters in driving

Traditional end-to-end systems can get very good at interpolation. They struggle when the world gets weird.

A suitcase in the road. Construction moved overnight. A car is stopped too close to a stop line. A cyclist drifts. A pedestrian hesitates, then suddenly commits. Someone else breaks the rules and forces you into a new negotiation.

Humans handle these situations by decomposing the scene, forming causal hypotheses, and choosing the least bad action. NVIDIA's reasoning VLA pitch is that driving models need more of that ability, plus the ability to explain their decisions.

That does not automatically solve the problem. But it does push the field away from a pure "pattern-match the trajectory" mindset and toward a "reason under uncertainty and then act" mindset.

Before reasoning VLAs, how were AV systems handling driving? (and why NVIDIA has not abandoned the modular world completely)

Before the current wave of end-to-end enthusiasm, AV systems were mostly built as modular stacks: perception, localization, tracking, prediction, planning, control. Engineers liked this architecture for obvious reasons. Every subsystem is inspectable, testable, and replaceable. The downside is that modular stacks can become brittle. Information gets compressed too early. The planner sees a cleaned-up world rather than the raw ambiguity. Rare edge cases that spill across module boundaries can become strangely hard to handle well.

End-to-end learning emerged partly as a reaction to that problem. If the whole system can learn jointly from sensor data to action, maybe it can preserve more of the scene and produce more natural behavior. NVIDIA’s answer seems to be yes, but not by abandoning the modular logic entirely. That is why DRIVE AV is dual-stack. One side captures the benefits of end-to-end learning and increasingly VLA-style reasoning. The other preserves traceability, explicit constraints, and rule-based safety enforcement. In plainer terms, NVIDIA wants the model to be clever, but it does not want cleverness alone carrying the full system.

What happens in the cloud, and why it may matter as much as the car?

A lot of the real moat is here.

NVIDIA's autonomy pipeline, as publicly described, looks something like this:

Collect real-world sensor data from vehicles.

Train and refine models on large GPU clusters, using DGX and related infrastructure.

Reconstruct real scenes with Omniverse NuRec so the exact failure can be replayed in sim.

Use Cosmos world models to create more data, more variants, and more environmental diversity.

Run policies closed-loop in AlpaSim.

Move toward generative closed-loop simulation with AlpaDreams.

That last part is the new frontier (and makes me think about cars being able to dream). Let’s explain what it all means in more detail:

NuRec: reconstruction instead of hand-built scenes

NuRec is NVIDIA's neural reconstruction workflow. The idea is to take real-world multi-sensor logs and rebuild them into high-fidelity 3D scenes that can be replayed from novel views and new trajectories.

This is powerful because it means one bad real-world case is no longer just one bad case. It becomes a seed crystal for many tests.
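The "seed crystal" idea can be sketched as expanding one reconstructed log into a grid of replay variants. Everything here is hypothetical: the `Scenario` fields, the `expand` helper, and the log identifier are made up for illustration and do not reflect NuRec's actual interfaces.

```python
from dataclasses import dataclass, replace
from itertools import product
from typing import List, Sequence


@dataclass(frozen=True)
class Scenario:
    log_id: str                   # which real-world log was reconstructed
    lateral_offset_m: float       # shift of the ego start position across the lane
    speed_offset_mps: float       # change to the ego's initial speed


def expand(seed: Scenario,
           lateral_offsets: Sequence[float],
           speed_offsets: Sequence[float]) -> List[Scenario]:
    """Create one variant of the seed per (lateral, speed) combination."""
    return [
        replace(seed, lateral_offset_m=lat, speed_offset_mps=spd)
        for lat, spd in product(lateral_offsets, speed_offsets)
    ]


seed = Scenario(log_id="example-log", lateral_offset_m=0.0, speed_offset_mps=0.0)
variants = expand(seed, lateral_offsets=[-0.5, 0.0, 0.5], speed_offsets=[-2.0, 0.0, 2.0])
```

Three lateral offsets times three speed offsets turn one logged failure into nine test cases, and each extra dimension multiplies the count again.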

Cosmos: synthetic variation

Cosmos is NVIDIA's world-model family. In the AV context, it is used to generate more variation, more scenarios, and more training or evaluation data. Public NVIDIA material frames Cosmos as useful for generating single-view and multiview videos, accelerating synthetic data generation, and scaling world generation from prompts and spatial controls.

AlpaSim: closed-loop policy testing

AlpaSim is the open simulation framework where policies actually drive. It is a reactive environment, with realistic sensor modeling, vehicle dynamics, traffic behavior, and NuRec integration for photorealistic views.

Closed-loop matters because open-loop evaluation can flatter a model. A trajectory predictor can look great offline and still drive terribly once its own actions affect the future state of the world.
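A toy example makes the gap visible. Assume a one-dimensional lane-keeping task where the state is the lateral offset from lane centre and each action is added directly to that offset. The "learned" policy below is a deliberately contrived stand-in that only ever saw centred states, so it picked up a small constant bias and no corrective behavior. All names and numbers are illustrative assumptions.

```python
from typing import Callable, Sequence


def expert_action(offset: float) -> float:
    """Reference driver: steer back toward the lane centre."""
    return -0.5 * offset


def learned_action(offset: float) -> float:
    """Toy imitation policy: small constant bias, ignores the offset."""
    return 0.05


def open_loop_error(logged_offsets: Sequence[float]) -> float:
    """Worst action error on states taken from the expert's own log."""
    return max(abs(learned_action(o) - expert_action(o)) for o in logged_offsets)


def closed_loop_final_offset(policy: Callable[[float], float], steps: int) -> float:
    """Let the policy's own actions determine the next state."""
    offset = 0.0
    for _ in range(steps):
        offset += policy(offset)
    return offset


logged = [0.0] * 50                       # the expert stayed centred in the log
offline_error = open_loop_error(logged)   # small: 0.05 per step
drift = closed_loop_final_offset(learned_action, steps=100)   # about 5 m off centre
expert_drift = closed_loop_final_offset(expert_action, steps=100)
```

Offline, the policy is never more than 0.05 away from the expert's action. Driving on its own for 100 steps, the same bias accumulates into roughly 5 metres of drift, because no logged state ever showed it how to recover.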

AlpaDreams: generative closed-loop simulation

This is the part that starts to sound like science fiction and then immediately turns into an engineering tool.

AlpaDreams is NVIDIA's new action-conditioned generative world model for AV simulation. Public descriptions say it generates multi-camera, physics-aware scenes in real time in response to simulator state and driving actions.

Which means the simulator is no longer only replaying or varying a fixed scene. It is imagining what happens next in reaction to the policy. And it can do it in real time. That is a big deal if it works well. It moves the training and validation loop closer to infinite interactive scenario generation.

And yes, this is probably what Ali Kani meant by "compute becomes data." Once you can reliably turn compute into worlds, the bottleneck shifts.

Is NVIDIA’s dual-stack approach a silver bullet?

No. And the interview was strongest when it implicitly admitted that.

The current system still needs polish around ghost braking, unprotected intersections, stop-sign negotiation, assertiveness in dense traffic, and socially legible lane changes. None of those are side issues. They are very close to the core difficulty of autonomy. The “too polite” joke was on point because autonomous driving is not only a physical safety problem. It is also a social coordination problem. A vehicle that always yields may remain physically safe while still making traffic worse, creating ambiguity, or encouraging other road users to exploit it.

It was very interesting when Ali said that NVIDIA’s answer to that is to train on good driving, filter out bad demonstrations, give the model richer reasoning tools, and keep a classical safety stack in the loop. That is sensible. It still does not erase the harder questions. How much of a reasoning model’s explanation is faithful rather than post hoc? How do you validate rare-event competence without fooling yourself through simulation shortcuts? How do regional rules and driving culture get encoded without creating an endless layer of special cases? How much of the final safety case can rest on learned components? How much of a larger teacher model’s behavior survives distillation into the smaller runtime model? These are not NVIDIA-specific problems. They are autonomy problems in general. It is encouraging to see many of these questions moving from theory into active solutions.

So where is NVIDIA actually different (and what might soon make it the largest car company without building a single car)?

NVIDIA is very smart about layers. They don’t survive on single breakthroughs. Their moat is integration.

A lot of AV companies have strong models. Some have strong hardware. Some have strong simulation. Some have strong safety narratives. NVIDIA is trying to tie those layers together into one stack. Its distinctive bet looks something like this: use open reasoning-VLA models to move the field forward, distill them into runtime-capable production systems, keep a classical safety stack in parallel, standardize the hardware and sensor architecture with Hyperion, push the whole loop through DGX, NuRec, Cosmos, AlpaSim, and eventually AlpaDreams, and wrap the result in Halos so the safety story is designed in from the beginning rather than added at the end.

That is a very NVIDIA answer to the AV problem. They don’t want to build cars. Their aim is broader and more powerful: to own a large part of the compute, software, simulation, and safety substrate underneath other companies’ autonomy efforts.

How do I actually start learning this stack?

The following are a few comprehensive paths depending on your goal, along with an extensive list of resources we curated for a complete dive into the self-driving universe.


Further reading