After putting together a comprehensive profile of OpenAI, we continue our series about AI unicorns with the examination of AI startup Anthropic, founded by two siblings: Dario and Daniela Amodei.

Quick Answer: Who is Daniela Amodei?

Daniela Amodei is a co-founder and president of Anthropic, known for leading work on building and scaling safer large language model systems. She’s associated with operationalizing “AI safety” into product and governance decisions, and with steering Anthropic’s strategy around reliable model deployment. If you’re comparing AI labs, her role is a good lens on how safety, policy, and enterprise adoption get translated into engineering priorities.

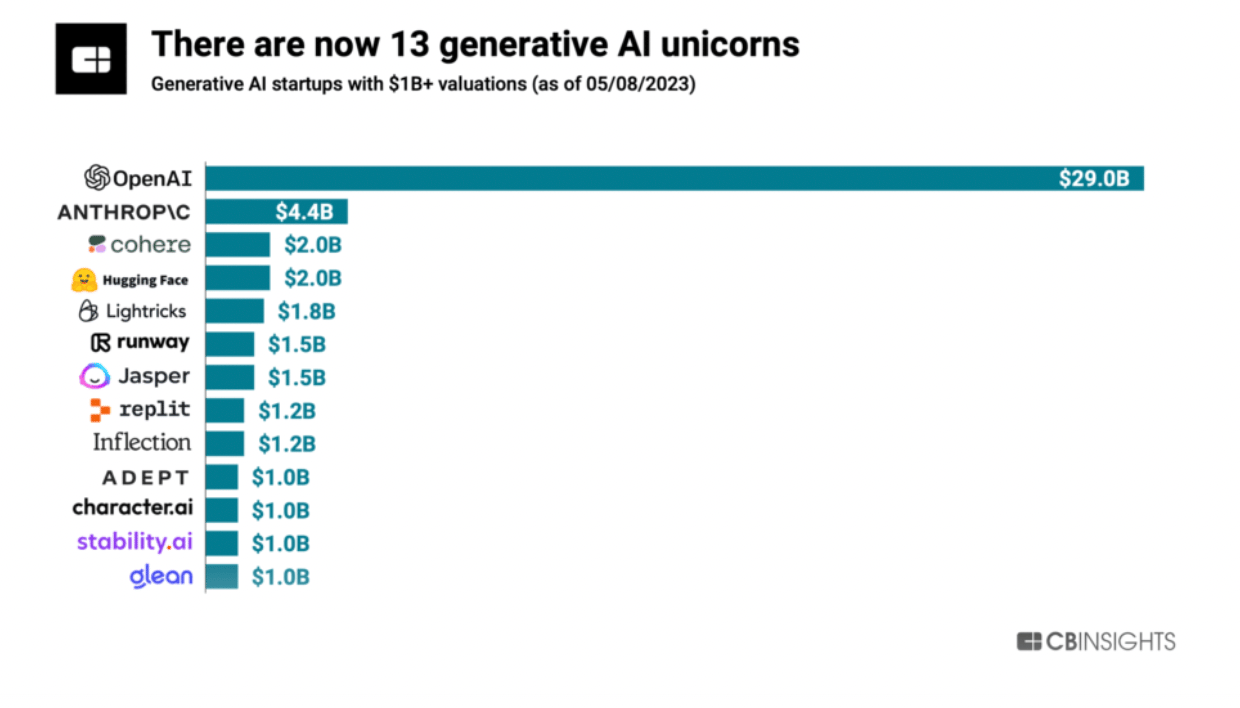

According to CB Insights, Anthropic ranks second, with a $4.4 billion valuation, a modest number compared to OpenAI's. Born out of a disagreement with OpenAI, Anthropic is inevitably compared to and mentioned alongside its main rival. However, Anthropic maintains a lower public profile, with only two types of news reaching the mainstream media: the capabilities of its chatbot, Claude, and its steadily increasing fundraising numbers. Let's delve into the founders' story: what has driven them since college, how they relate to effective altruism, what they think about safety, and what sets them apart from OpenAI.

Frictions with OpenAI and the birth of Anthropic

Amodei’s Vision and the effect of effective altruism

Money situation

Claude and research path to Claude

Policy tools for AI governance

Philosophy around safety

Bonus: What and Who is Anthropic

Frictions with OpenAI and the birth of Anthropic

In 2019, OpenAI gets a hefty $1 billion investment from Microsoft and announces the creation of a for-profit subsidiary called OpenAI LP, to attract more funding and form partnerships with other companies.

In December 2020, after nearly five years at OpenAI, Dario Amodei leaves his position as VP of Research, followed by a handful of other high-level OpenAI employees, including his sister Daniela Amodei, who held several senior roles there, such as VP of Safety and Policy, and Engineering Manager and VP of People. According to the Financial Times, “the schism followed differences over the group’s direction after it took a landmark $1bn investment from Microsoft in 2019.” Quite a few people at OpenAI were concerned about three things: the strong commercial focus of a previously non-profit organization, too close a bond with the tech giant, and the prospect of products being shipped to the public without proper safety testing.

Daniela Amodei told Insider that OpenAI's product was too early in its development during her time there to comment on, but added that the founding of Anthropic centered around a "vision of a really small, integrated team that had this focused research bet with safety at the center and core of what we're doing."

Anthropic, established as a public benefit corporation, aimed to concentrate on research. Dario and Daniela took the helm of the new generative AI startup, with four other ex-OpenAI colleagues as cofounders. It started with fewer than 20 people; as of June 2023, it has 148 employees, according to PitchBook.

Its LinkedIn description reads: Anthropic is an AI safety and research company that’s working to build reliable, interpretable, and steerable AI systems.

How do they plan to make an AI system steerable?

Amodei’s Vision and the effect of Effective Altruism

We should probably look back to the time when the Amodei siblings were in grad school and college, respectively. Here is what Daniela says about her older brother in an interview with the Future of Life Institute: “He was actually a very early GiveWell fan, I think in 2007 or 2008. We would sit up late and talk about these ideas, and we both started donating small amounts of money to organizations that were working on global health issues like malaria prevention.”

And about herself, “So I think for me, I've always been someone who has been fairly obsessed with trying to do as much good as I personally can, given the constraints of what my skills are and where I can add value in the world.”

Holden Karnofsky says about his wife, “Daniela and I are together partly because we share values and life goals. She is a person whose goal is to get a positive outcome for humanity for the most important century. That is her genuine goal, she’s not there to make money.”

With very strong ties to the effective altruism community (Daniela is married to Holden Karnofsky, a co-CEO of the grantmaking organization Open Philanthropy and co-founder of GiveWell, both organizations associated with the ideas of effective altruism), Anthropic raised money from vocal participants of this community, such as Jaan Tallinn (a founding engineer of Skype and Kazaa, co-founder of the Future of Life Institute), Dustin Moskovitz (the co-founder of Facebook and Asana, co-founder of the philanthropic organization Good Ventures) and… Sam Bankman-Fried, who has been accused by U.S. officials of perpetrating a massive, global fraud: using customer money to pay off debts incurred by his hedge fund Alameda Research, invest in other companies and make donations.

That was an earthquake for the effective altruism community. One of its ideologists, philosopher William MacAskill, posted, “Sam and FTX had a lot of goodwill – and some of that goodwill was the result of association with ideas I have spent my career promoting. If that goodwill laundered fraud, I am ashamed. As a community, too, we will need to reflect on what has happened, and how we could reduce the chance of anything like this from happening again. Yes, we want to make the world better, and yes, we should be ambitious in the pursuit of that. But that in no way justifies fraud. If you think that you’re the exception, you’re duping yourself.”

One can argue that exceptionalism is quite common in Silicon Valley.

Let’s also quote the company's official vision and mission: “Anthropic exists for our mission: to ensure transformative AI helps people and society flourish. Progress this decade may be rapid, and we expect increasingly capable systems to pose novel challenges. We pursue our mission by building frontier systems, studying their behaviors, working to responsibly deploy them, and regularly sharing our safety insights. We collaborate with other projects and stakeholders seeking a similar outcome.”

Well said.

In the same interview with the Future of Life Institute, the Amodeis say that they planned to create a long-term benefit committee made up of people who have no connection to the company or its backers, and who will have the final say on matters including the composition of its board. I couldn’t find information on whether it actually happened.