Kids as Philosophers of AI

Welcome to the 2nd Episode of our AI Literacy Series

Introduction: The Power of Words

Ksenia Se: “Hello everyone and welcome back to the AI Literacy Series from Turing Post. I’m Ksenia Se, founder of Turing Post and a very concerned parent of five kids who are growing up in the world shaped by AI. And my co-host…”

Stefania Druga: “Big coffee drinker.”

We laughed as we started Episode 2, but we quickly fell into a serious discussion. The very words we use about machines – think, know, imagine, create – carry enormous weight. Language shapes belief. Tell a child that ChatGPT is “smart” and the word does more than describe; it builds a mental model. The machine feels alive.

That leap of imagination is not wrong. It is a philosophical experiment, and children perform it naturally. Kids anthropomorphize because it helps them reason. When they draw what’s “inside Alexa” – a little person with a typewriter, or an endless library of books – they are exposing how society itself projects agency into black boxes.

Image Credit: Family as a Third Space for AI Literacies (pdf)

This is where Episode 2 begins: AI literacy does not involve only technical instruction. It is a cultural negotiation. And sometimes, the clearest AI “philosophers” – or epistemologists – are the children at the dinner table asking, “But what does the robot really know?”

There are two ways to approach this series: you can watch or listen to our conversation as-is, old school – as if you are joining us live. Or you can read it here, as a curated reflection on what it means to raise AI-literate kids in a world that’s still figuring out AI itself. Because how we talk to our children about AI will shape how they talk back to it.

Watch it here → or read along

Also, please check the Resources section; there is plenty of awesome material.

And remember to share this article and the whole series – AI literacy is important.

The Architecture of Non-Thinking

To answer that question, we decided to peek inside the black box a bit.

Stefania: “I think it’s helpful to distinguish the different types of AI. Because each technology works differently under the hood, and none of them actually think in the same way a human does.”

When children first meet generative AI, it feels like magic. The trick to literacy is turning that sense of awe into curiosity. Once kids realize there’s no “tiny brain in the box,” they become empowered: they stop asking “What does it know?” and start asking “How does it work?”

The main families of systems can be introduced simply:

Classification Models – the matchmakers of AI. They learn by sorting inputs into categories: show them thousands of cats and dogs, and they’ll adjust their weights until “whiskers and pointy ears” equals cat, “snout and floppy ears” equals dog.

These models are also the oldest ones. The lineage goes back to the 1950s perceptron, then CNNs in the 2010s that suddenly outperformed humans on ImageNet. Today, the same principle powers face unlock, spam filters, and medical scans.

Kids love exposing the cracks. A muffin that looks like a Chihuahua, a Dalmatian that looks like cookies – the model slips, because it isn’t thinking. It’s counting pixel patterns.

That is the key lesson: machines don’t think; they count. And when the data is skewed or the categories flawed, the counts themselves carry bias. A child who sees this in a toy classifier is glimpsing the same dynamics that shape hiring systems, credit scoring, or policing tools.

Several studies highlight kids’ natural gift for “breaking” and testing classification models as an opportunity for active learning: in “break it/fix it” activities, they discover how to select training data so that a classification model can handle lots of corner cases.
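For readers who want to see "adjusting weights" in action, here is a minimal sketch of a perceptron, the 1950s ancestor mentioned above. The two features (`ear_pointiness`, `snout_length`) and all the numbers are invented for illustration; real image classifiers learn from pixels, not from hand-picked traits.

```python
# Toy perceptron: learns to separate "cat" from "dog" using two made-up features.
# Features (both hypothetical, scaled 0..1): ear_pointiness, snout_length.

def predict(weights, bias, features):
    """Return 1 ("cat") if the weighted sum is positive, else 0 ("dog")."""
    total = bias + sum(w * x for w, x in zip(weights, features))
    return 1 if total > 0 else 0

def train(examples, epochs=20, learning_rate=0.1):
    """Classic perceptron rule: nudge the weights only when a guess is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = label - predict(weights, bias, features)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, features)]
            bias += learning_rate * error
    return weights, bias

# Invented training data: (ear_pointiness, snout_length) -> 1 = cat, 0 = dog
EXAMPLES = [
    ((0.9, 0.2), 1), ((0.8, 0.1), 1), ((0.7, 0.3), 1),
    ((0.2, 0.9), 0), ((0.3, 0.8), 0), ((0.1, 0.7), 0),
]

weights, bias = train(EXAMPLES)
LABELS = {1: "cat", 0: "dog"}
print(LABELS[predict(weights, bias, (0.85, 0.15))])  # pointy ears, short snout
print(LABELS[predict(weights, bias, (0.15, 0.85))])  # floppy ears, long snout
```

A good "break it" exercise: add a dog with pointy ears to `EXAMPLES` and watch which guesses change. Nothing in the loop resembles thinking; it is arithmetic on weights.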

Diffusion models – These are the artists. They learn by taking an image apart into noise, then practicing how to reconstruct it. Show them a dog, they dissolve it pixel by pixel, then learn to reverse the process. Once they master that trick, they can remix: “a dog that looks like a flower” becomes possible because they’ve learned to rebuild both.

These models power image, video, and even audio generation. Kids quickly see the magic when the same prompt produces wildly different results depending on the random seed. The insight is simple: machines don’t imagine; they remix.
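The "taking an image apart into noise" half can be sketched in a few lines. This is only the forward (noising) step, under simplifying assumptions: a made-up four-pixel "image", a fixed noise amount `beta`, and a function name (`add_noise`) chosen for this example. The learned reverse step, which is where the real work of a diffusion model happens, is not shown.

```python
import math
import random

def add_noise(pixels, steps, beta=0.1, seed=0):
    """Forward (noising) half only: blend each pixel with Gaussian noise, step by step."""
    rng = random.Random(seed)
    noisy = list(pixels)
    for _ in range(steps):
        noisy = [
            math.sqrt(1 - beta) * p + math.sqrt(beta) * rng.gauss(0.0, 1.0)
            for p in noisy
        ]
    return noisy

image = [1.0, 1.0, -1.0, -1.0]  # a made-up four-pixel "image"

slightly_noisy = add_noise(image, steps=1, seed=42)
mostly_noise = add_noise(image, steps=50, seed=42)
print(slightly_noisy)
print(mostly_noise)

# Share of the original signal that survives 50 steps (roughly 7%).
print(math.sqrt(1 - 0.1) ** 50)

# Same seed gives the same noise; a different seed gives a different result.
print(add_noise(image, steps=50, seed=42) == mostly_noise)
print(add_noise(image, steps=50, seed=7) == mostly_noise)
```

The last two lines are the seed effect kids notice: the recipe is identical, only the random numbers differ.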

Transformers – These are the writers. Transformers, the backbone of ChatGPT and its peers, don’t see the world. They see text broken into tokens. Their job is next-word prediction: given “the sky is,” guess “blue.” Trained on oceans of language, they become eerily fluent.

Play “next-word karaoke” with kids – humans surprise, models stick to probability. The deeper lesson: machines don’t understand; they predict.
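To make "they predict" concrete, here is a deliberately tiny stand-in. It is not a transformer: it simply counts which word follows which in a made-up corpus (a bigram model). Real models work on tokens and much longer context, but the final step of picking a likely continuation has the same flavor.

```python
from collections import Counter, defaultdict

# Invented mini-corpus for illustration.
CORPUS = (
    "the sky is blue . the sky is blue . the sky is grey . "
    "the grass is green . the sea is blue ."
)

def build_bigram_counts(text):
    """Count which word follows which."""
    counts = defaultdict(Counter)
    words = text.split()
    for current_word, next_word in zip(words, words[1:]):
        counts[current_word][next_word] += 1
    return counts

def next_word_probabilities(counts, word):
    """Turn follow-up counts into probabilities."""
    followers = counts[word]
    total = sum(followers.values())
    return {w: n / total for w, n in followers.items()}

counts = build_bigram_counts(CORPUS)
probabilities = next_word_probabilities(counts, "is")
best_guess = max(probabilities, key=probabilities.get)

print(probabilities)  # "blue" gets 3 of the 5 follow-ups to "is"
print(best_guess)
```

In karaoke terms: a child might finish "the sky is" with "falling"; this counter can only ever offer words it has already seen after "is".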

Beyond the Big Three

Alongside classification, diffusion, and transformers, there are other important families that shape the AI tools kids already touch every day:

GANs – the Duelists. Generative Adversarial Networks pit two models against each other: one creates, the other critiques. Kids often grasp this as “the artist and the judge.” GANs were behind many of the first photorealistic fake faces and became closely associated with deepfakes.

Reinforcement Learning – the Gamers. These models learn by trial and error, like a dog fetching a stick. Reward signals guide them toward better strategies. They power everything from AlphaGo to warehouse robots.

Embodied AI – the Movers. Robots and agents combine perception, control, and planning. They don’t just generate outputs – they act in the world, where mistakes are physical.

Memory-Augmented Systems – the Librarians. New architectures allow models to “remember” past interactions or retrieve external knowledge, turning static systems into evolving collaborators.
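Of these families, reinforcement learning is the easiest to try at home. The sketch below assumes the simplest possible setup, a "bandit" with three hypothetical strategies that pay off 20%, 50%, and 80% of the time. The function name, the numbers, and the epsilon-greedy rule are choices made for this example, not a description of how AlphaGo or any warehouse robot works.

```python
import random

def run_bandit(reward_probabilities, steps=2000, epsilon=0.1, seed=0):
    """Epsilon-greedy trial and error over a few hypothetical strategies."""
    rng = random.Random(seed)
    pulls = [0] * len(reward_probabilities)
    estimates = [0.0] * len(reward_probabilities)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try a random strategy.
            choice = rng.randrange(len(reward_probabilities))
        else:
            # Exploit: pick the strategy that has looked best so far.
            choice = max(range(len(estimates)), key=estimates.__getitem__)
        reward = 1.0 if rng.random() < reward_probabilities[choice] else 0.0
        pulls[choice] += 1
        # Running average of the rewards seen for this choice.
        estimates[choice] += (reward - estimates[choice]) / pulls[choice]
    return estimates, pulls

estimates, pulls = run_bandit([0.2, 0.5, 0.8])
best = max(range(len(estimates)), key=estimates.__getitem__)
print("estimated payoffs:", [round(e, 2) for e in estimates])
print("times each strategy was tried:", pulls)
print("strategy it settled on:", best)
```

The program is never told which strategy is best; it finds out by trying, keeping score, and mostly repeating what worked.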

Image Credit: AI Playground

When you explain these families to children, you replace “the machine is smart” with “the machine follows math.” That shift is liberating. It doesn’t kill the magic; it reframes it as something they can poke, break, and rebuild.

I love hearing this from kids: “How does it work?”

Anthropomorphism as a Tool

Ksenia: “So what do we do with this language? Because there is no brain in a model, no feeling, no thinking. But we keep saying that it thinks.”

Stefania: “Anthropomorphizing itself is not a bad thing. It’s a very useful mechanism for making sense of the world. We see a plug and think it looks like a face. We do this with all sorts of technologies because we learn and explore from our human perspective. It’s an easy way to create connections between our experience and inanimate objects or tools. We’ve been doing this for millennia – it’s almost evolutionary.”

Children give us the template. They start with magical thinking – Alexa has a tiny librarian inside. Then, if guided, they unpack it. They redraw Alexa not as a librarian but as a sensor and a database. The philosophical move here is profound: anthropomorphism as entry point, systems thinking as destination.

This is why children are philosophers of AI. They show us how belief is constructed, how metaphors move from imagination to explanation. Adults should learn to follow their arc.

The Five Big Ideas, and Their Gaps

Educators and researchers have tried to give structure. The NSF-funded AI4K12 initiative proposed five big ideas:

Perception – machines perceive through sensors.

Representation – machines build internal models.

Learning – machines adapt from data.

Natural Interaction – machines communicate with us.

Societal Impact – machines change how we live.

It was a groundbreaking start. Yet as Stefania reminded me, these ideas often remain too abstract. Teachers stop at “AI recognizes faces” or “AI recommends movies,” and the curriculum falls apart when kids ask, “But how does this connect to my homework, my TikTok feed, or my privacy?”

Here UNESCO and the U.S. Department of Education frameworks push further. They define AI literacy as not just knowledge but also durable skills and future-ready attitudes. That phrase may sound bureaucratic, but its meaning is radical: thriving with AI requires new human dispositions. Curiosity. Skepticism. Responsibility. The ability to learn again and again.

This is where our Episode 2 brings a new lens. AI literacy must become a bridge from abstractions to lived practices.

Prompt: “Imagine a bridge between neural networks on one side and a smartphone’s photo library on the other side, simple drawing made with colored pencils --16:9”. Created with Flux.Kontext by Ksenia Se

Ksenia: “The problem I see with current resources is that they stop at the same point. They explain machine learning, but they don’t continue. What is the next step?”

Stefania: “Yes, most stop at definitions. The transfer to everyday life is missing. And yet kids are already immersed in tools made by big tech. We need to connect the research with the products children actually use.”

Transfer Learning for Humans

In machine learning, transfer learning means using knowledge from one task to solve another. In human learning, it means something similar: can you take what you learn about classification and apply it to understanding bias in hiring?

Stefania studied this gap with her colleague Nancy Otero. They analyzed 55 AI education resources. Most explained concepts. Few taught transfer. The missing link was applications that connect back to children’s lived experiences.

We need to bring this into families and classrooms. Six bridges:

From classification → to bank loan fairness and college admissions.

From diffusion → to deepfake awareness.

From transformers → to critical reading of AI-generated answers.

From reinforcement learning → to how incentives shape behavior.

From embodied AI → to safety when machines act in the physical world.

From memory systems → to debates on privacy and permanence.

Transfer happens when a child who trains a biased image classifier realizes that the same skew could deny someone a job. Transfer happens when a teenager who plays with a local chatbot notices the privacy difference compared to a cloud-based model. Transfer happens when a family activity leads to a discussion about how power and responsibility are distributed across systems.

AI literacy becomes meaningful only when it travels outward from sandbox experiments to the choices children will face as workers, citizens, and creators. When children make these connections, they are not only learning AI. They are learning how to govern it.

Children as Philosophers of Bias, Prediction, and Agency

Philosophy often begins with simple thought experiments. Children are masters of this form.

Give them a dataset: eight oranges, three apples. Train a model. Ask: “What will it predict more often?” They will see bias emerge. Then ask: “What happens if the same imbalance exists in a bank’s data?” The child who first laughed about muffins and dogs suddenly understands fairness at a civic scale.
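The fruit-bowl experiment fits in a few lines. This sketch assumes the crudest possible "model", one that ignores what the fruit looks like and only learns how often each label appeared. A real classifier would use features, but trained on skewed data it leans toward the majority in the same way.

```python
from collections import Counter

# The bowl from the text: eight oranges, three apples.
training_labels = ["orange"] * 8 + ["apple"] * 3

def train_frequency_model(labels):
    """'Training' here is just counting how often each label appears."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()}

def predict(model):
    """Always guess the label that was most common in training."""
    return max(model, key=model.get)

model = train_frequency_model(training_labels)
predictions = [predict(model) for _ in training_labels]

correct = sum(p == t for p, t in zip(predictions, training_labels))
apple_correct = sum(p == t == "apple" for p, t in zip(predictions, training_labels))

print(model)
print(Counter(predictions))
print(f"overall: {correct}/{len(training_labels)} correct")
print(f"apples:  {apple_correct}/3 correct")
```

The model gets 8 of 11 right and still never recognizes a single apple. Swap "apple" for a group of applicants that is rare in a bank's records and the fairness question follows on its own.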

Play “next-word karaoke.” Start a sentence, let kids predict the next word, then compare with ChatGPT. They quickly notice how humans can surprise and models stick to probability. That sparks a deeper insight: creativity is deviation from expectation.

Hand them a blurred image, let them redraw it, then reveal the clear photo. They intuitively grasp diffusion. More importantly, they see how different reconstructions can still feel plausible – a child’s window into the epistemology of uncertainty.

In each case, the child is both student and philosopher. They learn mechanics, but they also pose questions adults avoid. “If the model is wrong but sounds right, who is responsible?” “If it always guesses the common fruit, does it ever learn the rare one?”

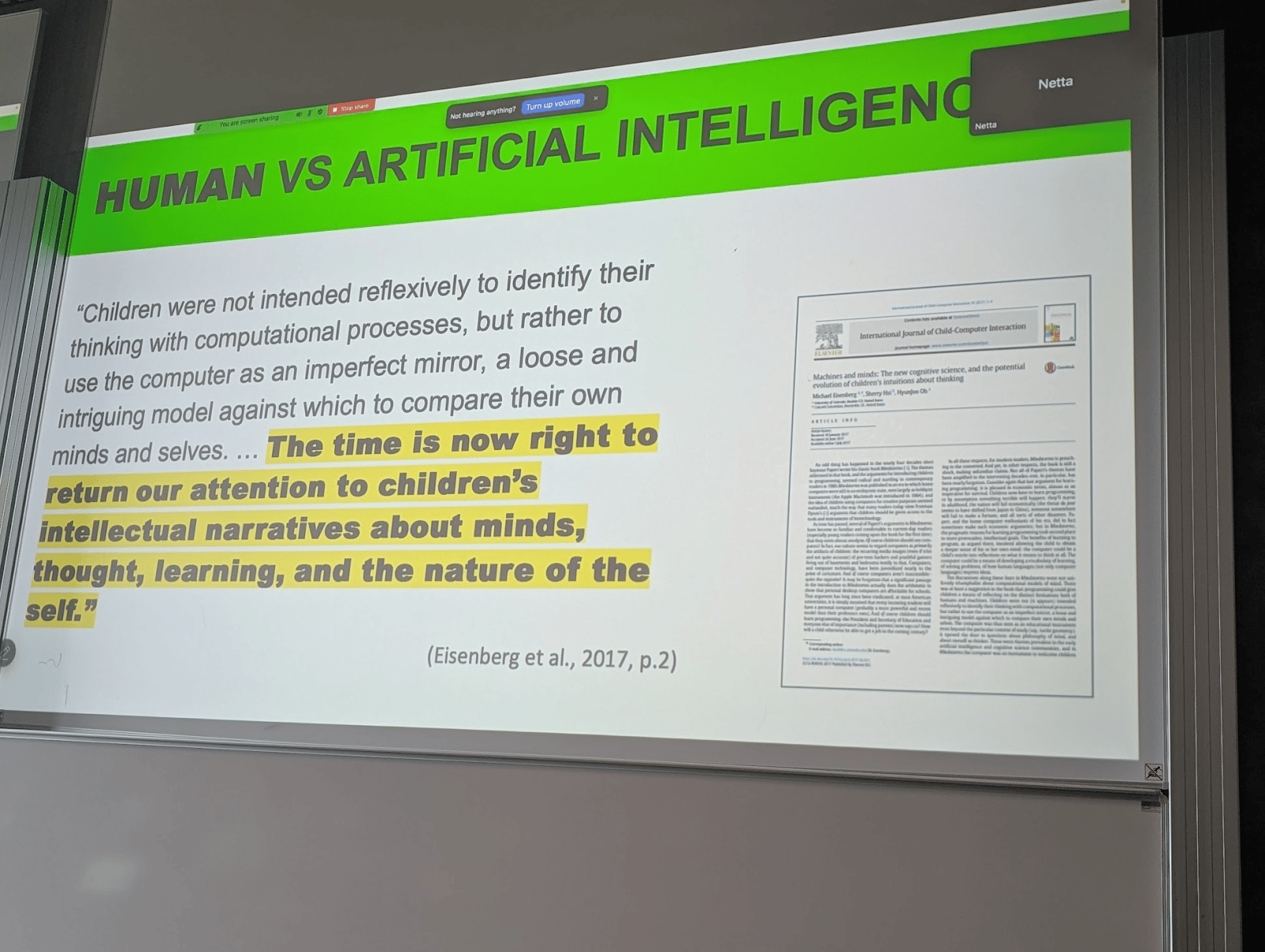

Stefania: “What resonated a lot with me in Yasmin Kafai’s IDC keynote was the emphasis on Mike Eisenberg’s research on human vs. AI: the perception of AI intelligence will very much shape how youth co-create with AI.”

Culture and the Language of Thinking Machines

The phrase “thinking machines” has been with us since Alan Turing. It reshaped philosophy, then science fiction, then industry. Today it shapes households. A child asks “ChatGPT, explain to me…?” and receives an answer in a voice warmer than most teachers’. That is not a neutral interaction; it is a reprogramming of cultural expectations.

Language shapes how we understand the present and design the future. If we call these systems “co-pilots,” we assume a shared cockpit. If we call them “oracles,” we assume unquestionable wisdom. If we call them “assistants,” we assume servitude. The label becomes a design brief.

Children show us this faster than research groups. They live inside the metaphors. Their drawings of little people inside machines echo the medieval homunculus theory of the mind. Their casual questions about “what AI wants” anticipate debates in governance. Their playful errors reveal the cultural work of naming.

To study kids is to study society’s philosophy in its raw form.

Seven Advanced Activities for Families and Classrooms

Bias in a Bowl. Train a model with oranges and apples. Discuss how imbalance leads to skewed predictions. Connect to fairness in real systems.

Next-Word Karaoke. Human vs. Transformer. Who surprises more?

Anthropomorphism Diary. Keep a log of every time family members describe machines with human traits. Debate whether it helps or misleads.

Framework Crosswalk. Print AI4K12’s five ideas, UNESCO’s skills, and the U.S. Department of Education’s definition. Let students map overlaps and gaps.

Local vs. Cloud Debate. Run a small model locally and compare with a cloud chatbot. Discuss trade-offs of privacy, speed, and control.

Design a Metaphor. Challenge kids to invent their own metaphor for AI – not “thinking machine” but something fresh. Collect them. These metaphors are seeds of future culture.

Who knows more?

More printouts and games on Stefania’s AI playground

Closing: The Takeaway

AI literacy is not a checklist of technical knowledge. It is a cultural practice shaped by language, imagination, and agency. To raise AI-literate children is to raise philosophers who teach us how society should think about non-thinking machines.

Stefania: “The world is anthropocentric, at least for now. Machines recognize patterns; humans create meaning. Our job is to bridge them thoughtfully.”

Ksenia: “And next time, we’ll talk about how to create with machines – not only to demystify them, but to use that same imagination to build.”

👉 Next in the series: AI as Your Creative Sidekick – how to co-create with machines without losing sight of what creativity means.

Resources and further reading

AI competency framework for students (UNESCO, Aug 2024)

AI4K12 – Five Big Ideas with grade‑band charts and printable poster

Family as a Third Space for AI Literacies: How do children and parents learn about AI together? (pdf)

On diffusion models and how they are used across crafting, design, art and in many maker projects

The Binding of Fenrir: Children in an Emerging Age of Transhumanist Technology by Michael Eisenberg

The 4As: Ask, Adapt, Author, Analyze - AI Literacy Framework for Families (pdf)

AI Playground (GitHub)

AI playground (educational website)

Play with AI and ML
