Quick answer: What is attention in AI?

Attention in AI is the mechanism that lets Transformer models decide which parts of an input sequence should influence each token’s representation. It compares queries, keys, and values to build contextual meaning, powers self-attention, and enables faster generation through KV cache reuse.

TL;DR: Attention in AI lets Transformer models dynamically decide which tokens matter most for understanding context. Using queries, keys, and values, attention computes relationships between tokens, enabling contextual representations, long-range dependency handling, and modern capabilities like reasoning, translation, retrieval, and autoregressive text generation.

What is attention in AI? This question may sound simple, but there are actually many aspects worth a deep discussion.

In the previous episodes, we explored what a token in AI is and followed tokens as they became embeddings: dense vectors that are also points in a learned space, coordinates with semantic structure. Embeddings are where meaning lives, but coordinates alone are not enough. The next natural step in our series is attention. So what is its role in the workflow?

Tokenization breaks language into units.

Embedding turns those units into learnable geometry.

Attention makes that geometry interact.

Attention solves a harder task than the previous stages: for this token, in this sentence, at this exact moment, which other tokens are relevant enough to change its meaning? The mechanism lets one token attend to another token, decide how relevant it is, and pull in the information it needs. At that point, a sequence stops being a row of vectors and becomes context.

This mechanism became an indispensable foundation of modern AI and the central computation in Transformers.

So let’s unpack where attention comes from, how it works, the core components and types of this mechanism, and why attention isn’t the same as understanding. It’s foundational knowledge.

In today’s episode:

The history of attention: from translation to Transformers

How attention works

From embeddings to contextual representations

Queries, keys, and values: the QKV mechanism

How to compute attention step-by-step?

What is KV cache and why does it matter?

How attention mechanisms are evolving

Why attention is not the same as understanding

Sources and further reading

The history of attention: from translation to Transformers

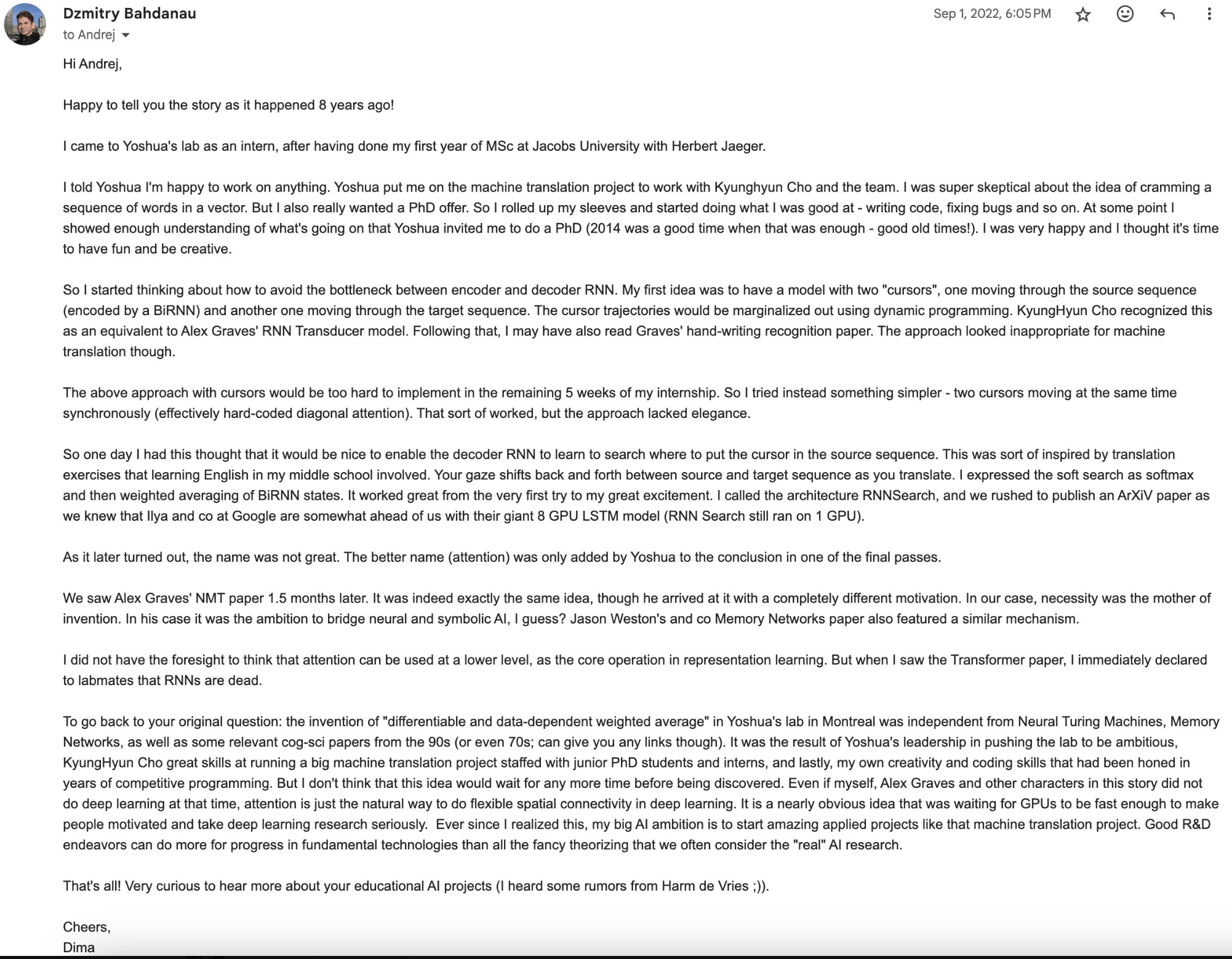

Before attention became the center of the Transformer, described in one of the most influential papers in AI, Attention Is All You Need from Google, it had appeared three years earlier as a solution to a practical problem in neural machine translation.

Early encoder–decoder models encoded an entire source sentence into a single fixed-length vector – a dense numerical representation that captures the sentence’s meaning – and decoded the translation from that compressed summary. Dzmitry Bahdanau, KyungHyun Cho and Yoshua Bengio in their Neural Machine Translation by Jointly Learning to Align and Translate paper (2014) argued that this fixed-length context vector created a bottleneck, because the model had to compress all relevant information into one representation. They proposed a model that could align source and target words during decoding, letting the decoder softly search over different parts of the input sentence while generating each new word. The decoder dynamically computes a context vector as a weighted combination of source annotations, focusing on the most relevant parts of the input for the current prediction. This was the moment when context became adaptive and target-dependent.

Image credit: Karpathy’s post

Then in 2015, Stanford researchers Minh-Thang Luong, Hieu Pham and Christopher D. Manning published Effective Approaches to Attention-based Neural Machine Translation, which extended this idea with practical attention mechanisms. They introduced global attention, where the decoder attends to all source positions, and local attention, where it focuses only on a smaller window of source words at each step. They also proposed an input-feeding approach, where the model feeds earlier attention information back into later steps so it can keep track of which parts of the source it has already focused on.

Image Credit: Global attention mechanism in Effective Approaches to Attention-based Neural Machine Translation

Image Credit: Local attention window in Effective Approaches to Attention-based Neural Machine Translation

Then finally came the main breakthrough: the architecture built around attention itself. This is the Transformer, introduced in the Attention Is All You Need paper (2017) by A. Vaswani et al. The Google researchers removed recurrence and convolutions and made self-attention layers the core of the architecture. They also introduced the scaled dot-product formulation of attention and the language of queries, keys, and values that became the canonical way to describe how models retrieve and combine contextual information.

That is the story of how attention became the centerpiece of modern models, but history alone is not enough to understand the basics. Let’s go through every part of the workflow step by step.

How attention works

From embeddings to contextual representations

A typical Transformer model starts with token embeddings (dense numerical vector representations of tokens that encode semantic and syntactic information in a continuous vector space), combined with positional encodings, because self-attention on its own has no built-in sense of word order. At this stage, these vectors are still not contextual. They carry information about token identity, and with positional encoding they “know” something about location, but they do not yet define what matters to what.

So these “token embeddings + positional encodings” vectors serve as the inputs to the attention mechanism, entering the Transformer’s attention layers.
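To make the “token embeddings + positional encodings” step concrete, here is a minimal sketch in plain Python. It assumes the fixed sinusoidal encoding from Attention Is All You Need (many models use learned or rotary position schemes instead), and the embedding values and the helper names `sinusoidal_position` and `add_positions` are made up for illustration.

```python
import math

def sinusoidal_position(pos, d_model):
    """Return the sinusoidal positional encoding for one position."""
    encoding = []
    for i in range(d_model):
        # Dimensions 2k and 2k+1 share one frequency: 1 / 10000^(2k / d_model).
        angle = pos / (10000 ** ((i - i % 2) / d_model))
        # Even dimensions use sine, odd dimensions use cosine.
        encoding.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
    return encoding

def add_positions(token_embeddings):
    """Add a positional encoding to each token embedding, element-wise."""
    d_model = len(token_embeddings[0])
    return [
        [e + p for e, p in zip(emb, sinusoidal_position(pos, d_model))]
        for pos, emb in enumerate(token_embeddings)
    ]

# Toy embeddings for a 3-token sequence (made-up numbers, d_model = 4).
toy_embeddings = [
    [0.1, 0.2, 0.3, 0.4],
    [0.1, 0.2, 0.3, 0.4],  # the same token repeated at the next position
    [0.5, 0.1, 0.0, 0.2],
]
inputs = add_positions(toy_embeddings)
```

The first two rows of `toy_embeddings` are identical, but the corresponding rows of `inputs` differ. That is the only thing the positional step contributes here: the same token at two positions no longer looks the same to the attention layers.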

Image Credit: Transformer architecture showing self-attention layers and positional encodings, Attention Is All You Need

There, each token representation is transformed into queries, keys, and values. These are linear projections of embeddings or hidden states – context-aware vector representations of tokens as they are processed layer by layer inside the model. Embeddings provide the raw material from which attention builds its comparisons. Without embeddings, the mechanism would have nothing meaningful to compare or route through the network.

Queries, keys, and values: the QKV mechanism

Here is the essential vocabulary that everyone needs to know to understand the full mechanism and attention formulation.

After embeddings and positional information enter the model, each token vector is multiplied by learned weight matrices to produce three different versions of itself:

A query (Q) is what the current token is looking for. It is the signal used to compare against other tokens and determine which ones may be relevant.

A key (K) is information that every token exposes about itself so others can decide whether to attend to it. It acts like a tag or description attached to a token.

A value (V) represents the information a token contributes if attention selects it. It is the actual content being passed along, like a payload. A weighted combination of value vectors becomes the attention output.
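The three roles above can be sketched in a few lines of plain Python. This is a simplified illustration, not a production implementation: it assumes a single attention head, no masking, and the scaled dot-product form softmax(QKᵀ / √d_k)·V from Attention Is All You Need. The weight matrices and inputs are made-up numbers standing in for learned parameters, and the helper names are hypothetical.

```python
import math

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(scores):
    """Turn a list of scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention without masking."""
    # Each token vector is projected into a query, a key, and a value.
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    d_k = len(k[0])
    # Compare every query with every key (Q times K transposed).
    k_transposed = [list(col) for col in zip(*k)]
    scores = matmul(q, k_transposed)
    # Scale the scores and normalize each row into attention weights.
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    # Each output row is a weighted combination of the value vectors.
    return matmul(weights, v), weights

# Three token vectors of size 4 and made-up 4x2 projection matrices.
x = [[1.0, 0.0, 1.0, 0.0], [0.0, 2.0, 0.0, 2.0], [1.0, 1.0, 1.0, 1.0]]
w_q = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0], [0.0, 1.0]]
w_k = [[0.0, 1.0], [1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
w_v = [[1.0, 2.0], [0.0, 1.0], [2.0, 0.0], [1.0, 1.0]]
output, weights = self_attention(x, w_q, w_k, w_v)
```

Each row of `weights` says how much one token draws from every token in the sequence, and each row of `output` is the resulting mix of value vectors. Queries and keys decide where to look, while values decide what gets passed along.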

But why can’t we just use ordinary embeddings, and why do we need to split them into these three vectors?

FAQ

What is attention in AI?

Attention is a mechanism used in Transformers that allows models to determine which tokens in a sequence are most relevant when processing information. It dynamically computes relationships between tokens to build contextual understanding.

How do queries, keys, and values work in attention?

Queries represent what a token is searching for, keys describe what each token offers, and values contain the information passed forward. Attention compares queries with keys to decide how much of each value to use.

Why is attention important for Transformers?

Attention allows Transformers to process relationships between tokens in parallel instead of sequentially. This enables better context modeling, scalability, and long-range dependency handling compared to older recurrent architectures.

What is KV cache in LLMs?

KV cache stores previously computed key and value vectors during autoregressive generation so the model does not recompute them for every new token. This dramatically speeds up inference in large language models.
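As a rough sketch of that idea, the toy class below keeps the keys and values of earlier tokens and computes a query only for the newest one. It assumes a single head and a single layer with made-up weights. Real models keep one cache per layer and per head, and the names `KVCache`, `step`, and `project` are hypothetical.

```python
import math

def softmax(scores):
    """Turn a list of scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def project(vec, w):
    """Multiply a vector by a weight matrix given as a list of rows."""
    return [sum(x * w_row[j] for x, w_row in zip(vec, w)) for j in range(len(w[0]))]

class KVCache:
    """Stores keys and values of earlier tokens during generation."""

    def __init__(self):
        self.keys = []
        self.values = []

    def step(self, x_new, w_q, w_k, w_v):
        """Attend from the newest token over all cached tokens plus itself."""
        q = project(x_new, w_q)
        # Only the new token's key and value are computed; older ones are reused.
        self.keys.append(project(x_new, w_k))
        self.values.append(project(x_new, w_v))
        d_k = len(q)
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d_k) for k in self.keys]
        weights = softmax(scores)
        d_v = len(self.values[0])
        return [sum(w * v[j] for w, v in zip(weights, self.values)) for j in range(d_v)]

# Made-up identity projections and three token vectors arriving one at a time.
w = [[1.0, 0.0], [0.0, 1.0]]
cache = KVCache()
outputs = [cache.step(x, w, w, w) for x in [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]]
```

Each call to `step` does work proportional to the number of tokens seen so far, instead of rebuilding every key and value from scratch. The cost is memory: the cache grows by one key and one value per generated token.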

Attention vs understanding: what is the difference?

Attention helps models prioritize relevant information in context, but it does not guarantee reasoning, factual correctness, or human-like understanding. It is a computational mechanism, not consciousness or intent.