If you think about the term AGI, especially in the context of pre-training, you will realize that the human being is not an AGI, because a human being lacks a huge amount of knowledge. Instead, we rely on continual learning.

Do you feel this shift too? The idea of models learning endlessly is showing up everywhere. We see it, we hear it, and it’s all pushing the spotlight toward continual learning.

Continual learning is the ability to keep learning new things over time without forgetting what you already know. Humans do this naturally (as Ilya Sutskever also noted) and adapt flexibly to changing data. Unfortunately, neural networks do not. When developers change the training data, they often run into catastrophic forgetting: the model starts losing its previous knowledge, and the usual fallback is to retrain it from scratch.

Finding the right balance between a model’s plasticity and the stability of its previously learned knowledge and skills is becoming a serious challenge. Continual learning is a path to more “intelligent” systems that save the time, resources, and money spent on training. It also helps mitigate biases and errors and, in the end, makes model deployment easier and more natural.

Today we’ll look at the basics of continual learning and two approaches that are worth your attention: Google’s very recent Nested Learning and Meta FAIR’s Sparse Memory Finetuning. There is a lot to explore →

In today’s episode, we will cover:

Continual Learning: the essential basics

Setups and scenarios for Continual Learning training

How to help models learn continually? General methods

What is Nested Learning?

How does Nested Learning work?

HOPE: Google’s architecture for continual learning

Not without limitations

Cautious continual learning with memory layers

Sparse Memory Finetuning

Limitations

Conclusion / Why is continual learning important now?

Sources and further reading

Continual Learning Explained: Core Concepts

Continual learning means learning step-by-step from data that changes over time. So it is related to two main things:

Non-stationary data, which means the data distribution does not stay the same and keeps shifting.

Incremental learning – the model should add new knowledge without wiping out what it learned before.

The new pieces of information can be new skills, new examples, new environments, or new contexts. As the data comes in gradually, continual learning is also known as lifelong learning. The process of continual learning happens when the model is already deployed.

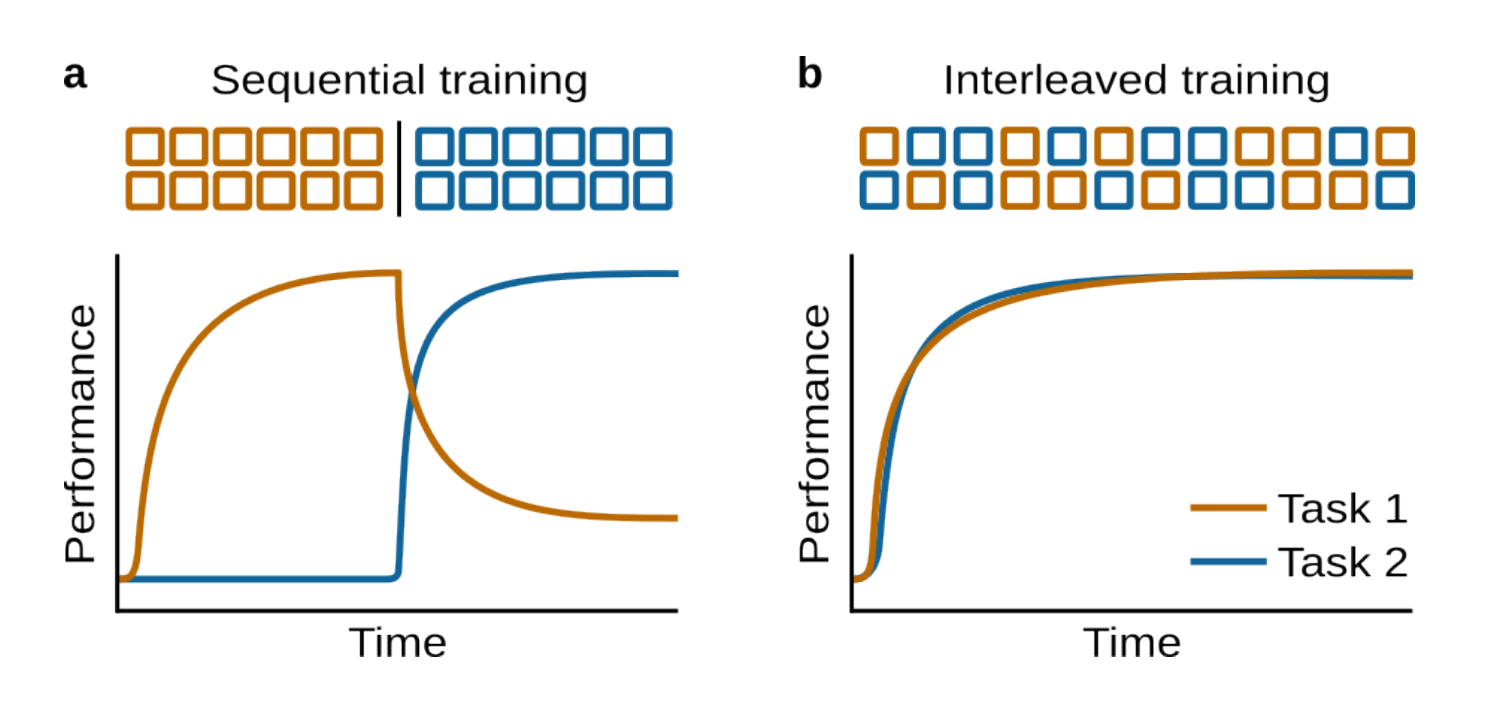

Everything would be great if models didn’t face one major challenge – catastrophic forgetting. This problem generally looks like this: a neural network is trained on Task 2 after Task 1, and its weights are updated for Task 2. This often pushes them away from the optimum for Task 1, and the model suddenly performs very poorly on that task.

The problem here is not the model’s capacity; it usually comes from the sequential training procedure. As early as 1989-1990, Michael McCloskey and Neal J. Cohen, and separately R. Ratcliff, identified this problem and showed that simple networks lose previous knowledge extremely quickly when trained sequentially. They also highlighted that this forgetting is much worse than in humans.

But if you train on Tasks 1 and 2 interleaved, forgetting does not happen.
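To make the difference concrete, here is a minimal sketch with a two-weight linear model and two toy "tasks". The data, learning rate, and step counts are hypothetical values chosen for illustration, not taken from any of the papers. Training on Task 1 and then Task 2 pulls the weights away from the Task 1 solution, while alternating between the two tasks lets the model settle on weights that fit both.

```python
def loss(w, x, y):
    """Squared error of a linear model w.x against target y."""
    return (sum(wi * xi for wi, xi in zip(w, x)) - y) ** 2

def sgd_step(w, x, y, lr=0.1):
    """One gradient step on the squared error for a single example."""
    err = sum(wi * xi for wi, xi in zip(w, x)) - y
    return [wi - lr * 2.0 * err * xi for wi, xi in zip(w, x)]

# Two toy tasks, each a single (input, target) pair (hypothetical data).
# Both can be solved at once by w = [1, -1].
TASK_1 = ([1.0, 0.0], 1.0)
TASK_2 = ([1.0, 1.0], 0.0)

def train_sequential(steps=200):
    w = [0.0, 0.0]
    for _ in range(steps):          # first only Task 1 ...
        w = sgd_step(w, *TASK_1)
    for _ in range(steps):          # ... then only Task 2
        w = sgd_step(w, *TASK_2)
    return w

def train_interleaved(steps=200):
    w = [0.0, 0.0]
    for _ in range(steps):          # alternate between the two tasks
        w = sgd_step(w, *TASK_1)
        w = sgd_step(w, *TASK_2)
    return w

w_seq = train_sequential()
w_mix = train_interleaved()
print("sequential  Task 1 loss:", loss(w_seq, *TASK_1))
print("interleaved Task 1 loss:", loss(w_mix, *TASK_1))
```

The model has enough capacity for both tasks in either run; only the order of the training data differs, which is exactly the point made above.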

Image Credit: Illustration of catastrophic forgetting, “Continual Learning and Catastrophic Forgetting” paper

Preventing forgetting is only one part of the solution. Effective lifelong learning also requires:

Fast adaptation

Ability to leverage task similarities

Task-agnostic behavior

Robustness to noise

High efficiency in memory and compute

Avoiding storing all past data and retraining on all previous data

If tasks are related, the model should get better at one after learning another, which marks positive knowledge transfer:

Forward transfer → Task 1 helps Task 2 later.

Backward transfer → Task 2 helps improve Task 1. This is a more difficult variant for neural networks.
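One common way to put numbers on these two kinds of transfer is to keep an accuracy matrix `R`, where `R[i][j]` is the accuracy on task `j` after training on tasks `0..i`. The sketch below follows the definitions introduced alongside GEM; the matrix values and the `baseline` accuracies (an untrained model's score on each task) are made-up numbers for illustration only.

```python
def backward_transfer(R):
    """Average change in accuracy on earlier tasks after all training is done.
    Negative values mean forgetting, positive values mean later tasks helped."""
    T = len(R)
    return sum(R[T - 1][j] - R[j][j] for j in range(T - 1)) / (T - 1)

def forward_transfer(R, baseline):
    """Average head start on a task before it is trained, relative to
    an untrained model's accuracy on that task."""
    T = len(R)
    return sum(R[j - 1][j] - baseline[j] for j in range(1, T)) / (T - 1)

# Hypothetical results for three tasks learned one after another.
R = [
    [0.90, 0.55, 0.50],   # after training on task 0
    [0.70, 0.88, 0.60],   # after training on task 1
    [0.60, 0.80, 0.85],   # after training on task 2
]
baseline = [0.50, 0.50, 0.50]

bwt = backward_transfer(R)
fwt = forward_transfer(R, baseline)
print("backward transfer:", round(bwt, 3))
print("forward transfer: ", round(fwt, 3))
```

In this made-up example backward transfer is negative (the model forgot part of the earlier tasks) while forward transfer is slightly positive.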

So, a good continual learning system needs the right balance: it should stay stable (not forget old things) while still being plastic enough to learn new ones. It also needs to handle differences within each task and across different tasks. How is this realized in practice?

Image Credit: “A Comprehensive Survey of Continual Learning: Theory, Method and Application” paper

Continual Learning Setups: Task-Based vs Task-Free

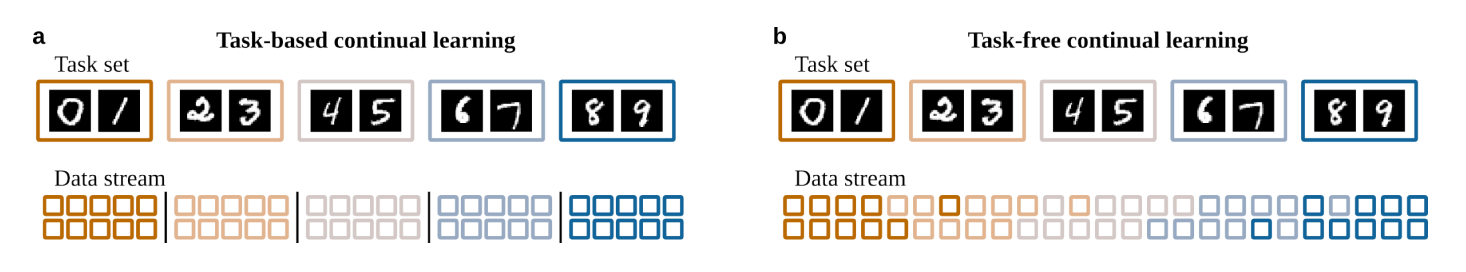

Continual learning is mainly about moving from one task to the next while keeping performance stable or improving it during ongoing learning. That’s why two fundamental setups are used for it:

Task-based continual learning: Data is organized into clear, separate tasks which are shown one after another, with explicit task boundaries. It is the most common setup, because it is convenient and controlled – you know exactly when tasks switch. But it doesn’t represent gradual changes found in the real world, and models may rely too heavily on boundaries for memory updates.

Task-free continual learning: This one is more realistic, because it better reflects real-world data where distributions shift continuously. There is still an underlying set of tasks, but task boundaries are not given and transitions are smooth.

Image Credit: “Continual Learning and Catastrophic Forgetting” paper

Continual learning researchers often use three main scenarios to describe what the model is expected to know at test time and whether it gets task identity information. Importantly, these scenarios are defined by how the changing data relates to the function the network must learn:

Task-Incremental Learning (Task-IL)

The model must learn multiple distinct tasks, but it can switch behavior depending on task identity. This information might come from an explicit task label during evaluation, contextual cues, or distinct inputs. This scenario allows task-specific modules, separate output heads per task, or even a different network per task. The idea is that if each task gets its own parts or network, nothing is overwritten, so there is no forgetting. At the same time, this approach is wasteful.

Domain-Incremental Learning (Domain-IL)

The model must perform the same mapping, like classifying even/odd digits or identifying objects, but without being told which domain the input belongs to. The task identity is not given, but the underlying function stays the same. What changes is the input distribution or context, for example, classifying the same objects in different lighting conditions or driving in different weather.

Class-Incremental Learning (Class-IL)

This is the most difficult scenario. The model must learn more and more classes over time and, at test time, choose among all classes it has ever learned, with no task identity given. The hard part is distinguishing between classes that were never seen together (like first learning cats vs. dogs, then birds vs. fish, and then recognizing any of the four).

Image Credit: “Continual Learning and Catastrophic Forgetting” paper
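The practical difference between Task-IL and Class-IL shows up at prediction time. Here is a small sketch using the cats/dogs, birds/fish example; the `scores` dictionary stands in for a model's outputs and its values are invented. With a task identity, the model only has to choose within that task's classes; without it, every class ever learned is a candidate.

```python
# Hypothetical task sequence: two tasks with two classes each.
TASKS = [("cat", "dog"), ("bird", "fish")]
ALL_CLASSES = [c for task in TASKS for c in task]

def predict(scores, task_id=None):
    """Return the highest-scoring class.

    scores  : dict mapping class name -> model score
    task_id : index into TASKS when task identity is given (Task-IL),
              or None when it is not (Class-IL).
    """
    candidates = ALL_CLASSES if task_id is None else TASKS[task_id]
    return max(candidates, key=lambda c: scores[c])

# Made-up scores for an image of a dog that the model finds a bit bird-like.
scores = {"cat": 0.10, "dog": 0.30, "bird": 0.40, "fish": 0.20}

task_il_answer = predict(scores, task_id=0)   # chooses between cat and dog only
class_il_answer = predict(scores)             # chooses among all four classes
print("Task-IL: ", task_il_answer)
print("Class-IL:", class_il_answer)
```

The same scores give a correct answer when the task is known and a wrong one when it is not, which is why Class-IL is considered the hardest of the three.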

These three scenarios are independent of whether the learning setup is task-based or task-free. There are also supporting methods that can make continual learning more effective.

How to Prevent Catastrophic Forgetting: Key Methods

Here are the main families of techniques that work for continual learning and help mitigate the forgetting problem:

Replay – shows the model old examples again so training looks more like “interleaving tasks.” This can be experience replay, where a memory buffer of past examples is mixed with new data during training, or generative replay, where the old examples are synthetic samples produced by a generative model.

Parameter regularization – protects the model’s important weights by penalizing large changes to them during training on new tasks. This helps the network keep old knowledge, but it doesn’t work well for class-incremental learning.

Functional regularization – this one, in turn, protects the function the network performs, or the actual input–output mapping. It is like replaying the same inputs but with model-generated targets. The idea is to choose some anchor points (inputs) and keep the network’s predictions on those anchor points similar to past predictions.

Image Credit: “Continual Learning and Catastrophic Forgetting” paper

Optimization-based approaches – change how training updates are computed: they seek flatter minima to reduce sensitivity, adapt learning rates per parameter to protect old knowledge, project gradients to avoid increasing loss on previous tasks (e.g., Gradient Episodic Memory (GEM)), and use fast/slow weight dynamics that encourage fast weights not to drift too far from slow ones. These strategies address the core problem that standard optimizers fail under non-stationary, task-shifting data.

Context-dependent processing – the network does not use all of its parameters for every task. For example, the model can switch modes, give each task its own output layer (head), or add new components or modules for different tasks. This strategy works best for Task-IL.

Template-based classification – widely used for class-incremental learning. The model uses stored representations, or templates, for each class, and later, it classifies a sample by comparing it to all templates and choosing the closest one.
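As an illustration of the last item, here is a minimal sketch of template-based classification where each template is simply the mean feature vector of a class (a nearest-class-mean classifier). This is one simple choice of template, not the only one. The 2-D feature vectors are hypothetical; in a real system they would come from a feature extractor.

```python
import math

class NearestMeanClassifier:
    """Stores one template (mean feature vector) per class."""

    def __init__(self):
        self.sums = {}     # class label -> running sum of feature vectors
        self.counts = {}   # class label -> number of examples seen

    def add_examples(self, label, features):
        """Add examples of a class; new classes can arrive at any time."""
        for f in features:
            if label not in self.sums:
                self.sums[label] = [0.0] * len(f)
                self.counts[label] = 0
            self.sums[label] = [s + x for s, x in zip(self.sums[label], f)]
            self.counts[label] += 1

    def template(self, label):
        return [s / self.counts[label] for s in self.sums[label]]

    def predict(self, f):
        """Return the class whose template is closest to f."""
        return min(self.sums, key=lambda label: math.dist(f, self.template(label)))

clf = NearestMeanClassifier()
# Task 1: cats vs. dogs (made-up features).
clf.add_examples("cat", [[1.0, 0.0], [0.8, 0.2]])
clf.add_examples("dog", [[0.0, 1.0], [0.2, 0.8]])
# Task 2 arrives later: birds vs. fish. Old templates are left untouched.
clf.add_examples("bird", [[-1.0, 0.0], [-0.8, 0.2]])
clf.add_examples("fish", [[0.0, -1.0], [0.2, -0.8]])

print(clf.predict([0.9, 0.1]))
print(clf.predict([-0.9, 0.0]))
```

Adding a class only adds a template, so nothing learned about earlier classes is overwritten, as long as the feature extractor itself stays fixed.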

These are the general techniques, the basics, that pop up here and there across the field. But right now we’re seeing breakthroughs that give continual learning a fresh perspective and bring it back into focus. And we couldn’t ignore the two most interesting ones →

Google Nested Learning: Multi-Speed Memory Architecture

The first one comes from Google Research. The team noticed that the brain can learn continuously because different parts of it update at different speeds: some areas change quickly, like short-term memory, while others update slowly, like long-term memory. This insight became the basis for their new method, Nested Learning (NL), which brings this idea into machine learning by letting different parts of a model update on different time scales.

The Google researchers noted that if you look at models like Transformers through this lens, they’re basically layers that update at different “frequencies”, similar to what the brain does.

NL is built to overcome a core limitation of LLMs: they can use what is in the context window (short-term memory) and what was stored in their parameters during pretraining (long-term memory), but they can’t form new long-term memories or learn new abilities. This is the main gap: new information in the context never makes it into the long-term parameters. So →

How does Nested Learning work?

NL encourages us to rethink neural networks from the perspective of memory. Instead of seeing a model as one monolithic system trained with one optimizer, NL views it as a stack of smaller learning systems, each operating at its own speed. Each part of the model trains at its own pace and learns from its own “slice” of information, and NL shows that together they form a collection of interconnected optimization problems. But how?

Every part of a modern neural network – layers, optimizers, even attention – can be interpreted as a kind of associative memory with its own update rule.

An associative memory is the ability to link one thing to another. In machine learning, it maps keys to values and updates itself to improve this mapping. A key could be a token, a gradient, or a hidden state, and a value could be an embedding, a momentum vector, or an attention value. In this case, training becomes a compression problem: just take many key→value pairs and store them efficiently in parameters.

For example, when we train a 1-layer Multilayer Perceptron (MLP) using gradient descent, the model is basically learning a mapping: input → signal (the gradient). The gradient update is the solution to a small optimization problem over the weight matrix, so the weight matrix is itself an associative memory storing what the model has learned so far. This is a 1-level nested system.
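Here is a small sketch of that idea for a single linear layer, assuming a squared-error loss (our choice for illustration). The gradient with respect to the weights is the outer product of the error signal and the input, so one gradient step literally writes an "input → error signal" association into the weight matrix. The sizes and values are hypothetical.

```python
def forward(W, x):
    """Linear layer: y = W x."""
    return [sum(wij * xj for wij, xj in zip(row, x)) for row in W]

def gradient_step(W, x, target, lr=0.1):
    """One gradient step for the loss 0.5 * ||W x - target||^2.

    The gradient w.r.t. W is the outer product delta * x^T, where
    delta = W x - target is the error signal for this input.
    """
    y = forward(W, x)
    delta = [yi - ti for yi, ti in zip(y, target)]
    return [[wij - lr * di * xj for wij, xj in zip(row, x)]
            for row, di in zip(W, delta)]

def error(W, x, target):
    return 0.5 * sum((yi - ti) ** 2 for yi, ti in zip(forward(W, x), target))

W0 = [[0.0, 0.0, 0.0],
      [0.0, 0.0, 0.0]]
x = [1.0, 0.0, 1.0]          # the "key"
target = [1.0, -1.0]

W1 = gradient_step(W0, x, target)
print("error before:", error(W0, x, target))
print("error after: ", error(W1, x, target))
print("updated weights:", W1)
```

Only the columns of `W` that correspond to non-zero input entries change, which is what you would expect from a memory that stores associations keyed by the input.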

How do we get two levels?

→ Nested Learning uses a structure where different parts of the optimization process update at different speeds.

Fast memory: the inner optimizer state that updates every step and captures short-term learning signals.

Slow memory: the model weights that update more slowly by integrating those fast updates.

In this two-level system, the inner optimizer learns rapid patterns, while the outer optimizer consolidates them into long-term parameters.

Transformers add their own fast memory through attention, which recomputes token-to-token relationships on every forward pass.

Attention updates instantly, optimizer states update every step, and weights update slowly – giving the model multiple natural time scales even before NL.

Nested Learning treats the optimizer as an explicit memory module: momentum stores recent gradients, Adam stores two moments, and more advanced optimizers hold richer internal states. NL generalizes this into a structured hierarchy where each level learns at its own speed.
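To see the "optimizer as memory" reading in the simplest case, here is plain SGD with momentum on a toy one-dimensional objective. This is the textbook optimizer, not the Nested Learning method itself; the objective, learning rate, and `beta` are arbitrary illustrative choices. The momentum buffer `m` plays the role of a fast memory that compresses recent gradients, and the weight `w` changes only by integrating what that buffer holds.

```python
def sgd_momentum(grad_fn, w, steps, lr=0.1, beta=0.9):
    """Standard SGD with momentum on a scalar parameter."""
    m = 0.0
    for _ in range(steps):
        g = grad_fn(w)
        m = beta * m + g    # fast memory: decaying summary of recent gradients
        w = w - lr * m      # slow memory: weights integrate the fast memory
    return w, m

# Toy objective f(w) = (w - 3)^2 with gradient 2 * (w - 3).
w_final, m_final = sgd_momentum(lambda w: 2.0 * (w - 3.0), w=0.0, steps=500)
print("final weight:", w_final)
print("final momentum buffer:", m_final)
```

Once the weight has settled near the minimum, the momentum buffer decays toward zero: there is nothing new left for the fast memory to pass on.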

If we sort components by how often they update, we get a hierarchy of levels, which forms the structure of Nested Learning:

Level 1: updates every token (like attention memory)

Level 2: updates every batch (like momentum)

Level 3: updates every few steps (like slow weights)

A neural network becomes a Neural Learning Module – a hierarchy of memories, each optimizing its own internal objective at its own frequency.

Image Credit: Nested Learning original paper

But if we want continual learning, we need multiple levels of memory, each with its own update frequency, not just “short-term vs long-term.” This idea led to the Continuum Memory System (CMS) and then to the new HOPE architecture.

Continuum Memory System (CMS)

Instead of one MLP (long-term memory), Continuum Memory System (CMS) uses multiple MLPs, each updating at a different frequency, for example:

Level 1 memory updates every token

Level 2 memory updates every 10 tokens

Level 3 memory updates every 100 tokens and so on

Each MLP in the CMS chain is indexed by its update frequency and has its own parameters, so they update on different schedules. This creates a continuum from fast to slow memory:

Each memory block watches its own chunk of recent data.

The slower the block, the less often it updates.

Each memory level compresses its own context into its parameters.

Fast levels are lower levels and they capture details and fast-changing information.

Slow levels, which are also higher levels, compress longer trends and store more abstract and more stable information.
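The scheduling idea can be sketched in a few lines. This is a toy under strong assumptions: each "memory level" is a single number instead of an MLP, and the update rule (move toward the mean of the buffered chunk) is a hypothetical stand-in for the real parameter update. Only the multi-frequency schedule mirrors the description above.

```python
class MemoryLevel:
    """Toy memory level that updates once every `period` tokens."""

    def __init__(self, period, lr=0.5):
        self.period = period
        self.lr = lr
        self.state = 0.0     # stand-in for the level's parameters
        self.buffer = []     # the chunk of recent data this level is watching
        self.updates = 0

    def observe(self, x):
        self.buffer.append(x)
        if len(self.buffer) == self.period:
            # Compress the chunk into the state, then start a new chunk.
            chunk_mean = sum(self.buffer) / len(self.buffer)
            self.state += self.lr * (chunk_mean - self.state)
            self.buffer.clear()
            self.updates += 1

# Periods 1, 10, and 100 as in the example above.
levels = [MemoryLevel(1), MemoryLevel(10), MemoryLevel(100)]

# Hypothetical token stream of 1000 scalar "tokens".
stream = [float(t % 7) for t in range(1000)]
for x in stream:
    for level in levels:
        level.observe(x)

for level in levels:
    print(f"period={level.period:>3}  updates={level.updates:>4}  state={level.state:.3f}")
```

Over the same 1000 tokens the fastest level updates on every token while the slowest updates only ten times, so its state reflects longer-range averages rather than the latest input.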

In this paradigm, a Transformer is a special case with only one MLP that is updated only during pretraining. That is why Transformers are mostly static, which doesn’t fit the idea of continual learning. This encouraged Google Research to propose a different architecture for this purpose.

HOPE: Google’s architecture for continual learning

All of Google’s new continual learning ideas meet inside one system called HOPE: a Hierarchical, Optimizing, Persistent, Evolving module. It’s a self-referential learning system built around three core elements:

Image Credit: Nested Learning original paper

Titans-style self-modifying sequence models. They combine short-term attention with a neural long-term memory module that can learn and store information even at test time.

Nested Learning view of optimization.

Continuum Memory System (CMS) as a multi-speed knowledge hierarchy.

While a Transformer has only two memory systems (attention as fast memory, and the MLP as slow memory that never changes after pretraining), HOPE goes a level further, with notable benefits:

Multiple time-scales of memory thanks to many MLPs at different update frequencies.

Self-updating internal mechanisms: It learns its own update rules.

Both fast and slow learning processes.

The ability to continually adapt without catastrophic forgetting.

In addition, the HOPE architecture achieves lower perplexity and higher accuracy than Transformers and than recent recurrent models such as Samba and Google’s own Titans. It also handles extended sequences more effectively.

Image Credit: Introducing Nested Learning: A new ML paradigm for continual learning

Image Credit: Nested Learning original paper

Not without limitations

Though the Nested Learning paradigm and HOPE feel new and highly optimized, they come with their own challenges:

Multiple memory levels with different update frequencies can significantly increase compute cost.

Obviously, HOPE has a more complex architecture than many other models.

With all new elements and different chunks and frequencies needed for the right CMS workflow, HOPE is harder to train and tune.

Continually updating some memory levels may lead to higher memory bandwidth demands.

Fast-update memories might overfit to short-term patterns if not carefully regularized.

It remains unclear how the NL method and HOPE behave at very large scale (100B–1T+ parameters), so the results are promising but still early.

Overall, Nested Learning is a fresh take on continual learning, and HOPE is a big step toward implementing it in a real architecture. Google’s perspective shows how optimizers, attention, and weights all fit under the same learning framework, something we haven’t seen before.

Another outstanding approach is a more universal one and is focused on solving the main problem of continual learning: How can a model keep updating its parameters without breaking everything it learned before? Let’s see →

Meta’s Sparse Memory Fine-Tuning: Cautious, Targeted Parameter Updates

Researchers from FAIR at Meta propose their solution: memory layers. These are high-capacity layers where only a small number of parameters are active on any given forward pass. The key feature is that updates stay sparse and localized, so they don’t overwrite the model’s core knowledge.

What is a memory layer basically? This layer replaces one of the Transformer’s feedforward networks (FFNs) with a sparse attention lookup into a giant pool of learned keys and values. A memory layer adds a huge table of learned “memory slots” – there can be millions of them. Instead of running a normal MLP, the model queries this memory to pull out the most relevant stored information.

Here is how it works step-by-step:

Image Credit: Memory layer architecture, “The Continual Learning Problem”

The model creates a query.

Then it finds the best-matching memory slots – only the top 32. This is extremely sparse: the model touches only a tiny fraction, about 0.03% to 0.0002% of all memory parameters. These 32 slots are combined into a single “memory output” based on how well each one matches.

Finally, the model gates the memory output. It decides how much of this retrieved memory to use depending on the input. If the memory is helpful, it leans on it; if not, it ignores it.

Here keys and values are parameters, not activations as in standard attention: keys learn when a memory slot should fire, values learn what useful information to output. As a result, the memory acts like a trainable cache inside the model.
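The three steps above can be sketched as follows. This is a simplified illustration, not Meta's implementation: it scores every slot exhaustively (real memory layers use tricks to avoid that at millions of slots), uses a softmax over the selected slots, and uses a single sigmoid gate. The names `memory_lookup` and `gate_weights` and all sizes are our own assumptions.

```python
import heapq
import math
import random

def memory_lookup(query, keys, values, gate_weights, top_k=32):
    """Sparse lookup: score slots, keep the top_k, mix their values, gate the result."""
    # Step 1: score each memory slot against the query (dot product).
    scores = [sum(q * k for q, k in zip(query, key)) for key in keys]

    # Step 2: keep only the best-matching slots and weight them by match quality.
    top = heapq.nlargest(top_k, range(len(keys)), key=scores.__getitem__)
    max_score = max(scores[i] for i in top)
    exps = [math.exp(scores[i] - max_score) for i in top]
    total = sum(exps)
    weights = [e / total for e in exps]
    dim = len(values[0])
    mixed = [sum(w * values[i][d] for w, i in zip(weights, top)) for d in range(dim)]

    # Step 3: input-dependent gate decides how much of the memory output to use.
    gate = 1.0 / (1.0 + math.exp(-sum(g * q for g, q in zip(gate_weights, query))))
    return [gate * m for m in mixed], top

# Hypothetical toy memory: 1000 slots of dimension 4 (real layers have millions).
rng = random.Random(0)
num_slots, dim = 1000, 4
keys = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_slots)]
values = [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(num_slots)]
gate_weights = [rng.gauss(0, 1) for _ in range(dim)]
query = [rng.gauss(0, 1) for _ in range(dim)]

output, touched = memory_lookup(query, keys, values, gate_weights)
print("slots touched:", len(touched), "of", num_slots)
print("memory output:", [round(v, 3) for v in output])
```

In this toy, 32 of 1000 slots are touched. With one million slots the same 32 would be 0.0032% of the memory, which sits inside the range quoted above.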

Sparse Memory Finetuning

Researchers at FAIR at Meta built on one key idea: since memory layers are sparse, only a tiny number of parameters needs to be updated when the model learns something new. This is the basis of their method, Sparse Memory Finetuning, which gives both:

high capacity (lots of room to store new knowledge) and

targeted updates (only touching the parameters that matter).

How to find memory slots that are specific to the new fact the model needs to learn?

Image Credit: Continual Learning via Sparse Memory Finetuning

Researchers borrowed an idea from classic information retrieval: TF-IDF (Term Frequency-Inverse Document Frequency). It measures how important a word is in a document, compared to how common it is across all documents. TF-IDF is high when a term appears often in one document but rarely elsewhere.

In the case of sparse memory finetuning, TF-IDF:

Counts how often each memory slot is accessed for the current batch.

Compares that to how often the same slot was accessed during pretraining.

A slot that is frequently accessed for the current example but rarely accessed elsewhere is treated as highly specific. This means it is likely storing information unique or highly relevant to the new fact.

Then only the small set of high-TF-IDF slots is fine-tuned, while everything else (most of the model) stays frozen.
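Here is a rough sketch of that selection step. The exact scoring formula is an assumption on our side: we use a standard smoothed TF-IDF, with term frequency taken from slot accesses in the current batch and document frequency taken from how many background (pretraining) batches touched the slot. The function name `select_slots` and the example access logs are hypothetical.

```python
import math
from collections import Counter

def select_slots(batch_accesses, background_batches, top_t):
    """Rank memory slots by a TF-IDF-style score and return the top_t slot ids.

    batch_accesses     : list of slot ids accessed while processing the new batch
    background_batches : list of sets, each holding the slot ids accessed
                         in one background batch
    """
    counts = Counter(batch_accesses)
    total = sum(counts.values())
    n_bg = len(background_batches)

    scores = {}
    for slot, count in counts.items():
        tf = count / total
        df = sum(1 for accessed in background_batches if slot in accessed)
        idf = math.log((n_bg + 1) / (df + 1))   # smoothed to avoid division by zero
        scores[slot] = tf * idf

    ranked = sorted(scores, key=scores.get, reverse=True)
    return ranked[:top_t], scores

# Made-up access logs: slot 7 is hit often now but never in the background,
# slot 3 is hit in every background batch.
batch_accesses = [7, 7, 7, 3, 3, 9]
background_batches = [{3, 9}, {3}, {3, 5}, {9, 3}]

trainable, scores = select_slots(batch_accesses, background_batches, top_t=2)
print("slots to fine-tune:", trainable)
print("scores:", {slot: round(s, 3) for slot, s in scores.items()})
```

Only the returned slots would receive gradient updates; every other memory slot and the rest of the model stay frozen.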

In experiments, this approach dramatically reduced forgetting. When teaching models new TriviaQA facts:

Full fine-tuning caused NaturalQuestions performance to drop by 89%. It can learn a lot, but forgets quickly.

LoRA caused a drop of 71%. It gives more control, but still struggles as updates accumulate.

Sparse memory finetuning of the memory layers caused only an 11% drop, consistently achieving a better balance between learning and forgetting.

Image Credit: Continual Learning via Sparse Memory Finetuning

To sum up, here is why memory layers are such a strong fit for lifelong learning:

Millions of memory slots mean room for lots of new knowledge.

Targeted parameter updates: only the relevant memory slots change during training.

Ability to update without destroying prior abilities.

Much less forgetting compared to full fine-tuning or LoRA.

Better long-term integration of knowledge.

But here are several…

Limitations

Sparse updates reduce forgetting in the short term, but it is unclear how memory slots behave after millions of updates and at larger scales.

Memory layers must be included during pretraining for the model to learn how to organize its memory effectively. This is quite expensive.

Dependence on TF-IDF may not always identify the “right” slots.

Despite this, Meta’s approach is more universal and easier to implement in current models.

Conclusion: Why is continual learning important now?

Today’s models still live inside the moment. They operate on the immediate present, while older memories stay frozen in time. That stops them from evolving, because they can’t reliably build new knowledge or new skills during real use. Humans learn through their whole lives, so models should keep learning after deployment too. We’re entering a phase where inference matters more than training. Continual learning is the missing ingredient, even in the most capable reasoning models.

It provides a balance between:

Plasticity – the ability to learn new things

Stability – the ability to preserve existing knowledge

Stronger lifelong learning would help us build AI that behaves more like humans, correct biases or errors in deployed models without full retraining, and enable on-device learning where constant retraining is impossible. Fortunately, more researchers are now focusing on continual learning and exploring ways to integrate this missing puzzle piece into AI systems.

Nested Learning and sparse memory finetuning push this shift forward. Sparse memory finetuning gives existing systems like Transformers a universal path to adaptation, helping them absorb new knowledge while keeping their older memories stable. Nested Learning adds a new lens on neural networks by treating them as multi-level stacks of memories that learn at different speeds. It ties together many parts of deep learning and opens a path toward more expressive, stable, and biologically inspired learning systems. And this is only the first chapter of a much bigger story.

Sources and further reading

Introducing Nested Learning: A new ML paradigm for continual learning (Google blog post)

The Continual Learning Problem (Jessy Lin blog post)